Introduction

Data Quality Tools are specialized solutions that help organizations ensure data accuracy, consistency, completeness, and reliability across systems. These tools monitor, cleanse, validate, and enrich data so that it can be trusted for analytics, reporting, and operational decision-making.

In modern data-driven environments, poor data quality leads to incorrect insights, failed AI models, compliance risks, and revenue loss. With increasing adoption of cloud data warehouses, real-time pipelines, and AI applications, maintaining high-quality data has become critical. Data quality tools now offer automated validation, anomaly detection, AI-driven monitoring, and integration with modern data stacks.

Real-world use cases:

- Detecting and fixing inaccurate or duplicate data

- Monitoring data pipelines for anomalies

- Ensuring compliance with data governance standards

- Improving analytics and reporting accuracy

- Supporting machine learning and AI workflows

What buyers should evaluate:

- Data profiling and validation capabilities

- Integration with data warehouses and pipelines

- Automation and anomaly detection features

- Data cleansing and enrichment tools

- Real-time vs batch monitoring

- Scalability across datasets

- Ease of use and UI experience

- API and extensibility

- Governance and compliance features

- Pricing and deployment flexibility
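The real-time versus batch distinction in the list above is easiest to see side by side. The minimal Python sketch below tracks the share of missing values over a sliding window of recent records (the streaming view). For comparison, it also computes the same metric once over a complete dataset (the batch view). The class name, window size, and threshold are illustrative assumptions and are not taken from any tool discussed here.

```python
from collections import deque
from typing import Any


class NullRateMonitor:
    """Streaming view: share of missing values over the last `window` records."""

    def __init__(self, window: int, threshold: float) -> None:
        self.flags: deque = deque(maxlen=window)  # oldest flags fall off automatically
        self.threshold = threshold

    def observe(self, value: Any) -> bool:
        """Record one value; return True if the windowed null rate exceeds the threshold."""
        self.flags.append(value is None)
        null_rate = sum(self.flags) / len(self.flags)  # O(window) per call; fine for a sketch
        return null_rate > self.threshold


def batch_null_rate(values: list) -> float:
    """Batch view: one pass over a complete dataset after it has landed."""
    return sum(v is None for v in values) / len(values) if values else 0.0


monitor = NullRateMonitor(window=4, threshold=0.5)
stream = [1, None, 2, None, None, 3]
alerts = [monitor.observe(v) for v in stream]
print(alerts)                   # [False, False, False, False, True, False]
print(batch_null_rate(stream))  # 0.5
```

The streaming monitor raises an alert on the fifth record, as soon as three of the last four values are missing. The batch figure for the whole set is only available after all of the data has arrived.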

Best for: Data engineers, analytics teams, data scientists, and enterprises managing large-scale data environments

Not ideal for: Organizations with minimal data usage or simple datasets

Key Trends in Data Quality Tools

- AI-driven anomaly detection and monitoring

- Real-time data quality validation

- Integration with modern data stacks and warehouses

- Data observability platforms gaining traction

- Automation of data quality workflows

- Embedded data quality in pipelines

- Focus on data governance and compliance

- Low-code and no-code interfaces

- Integration with BI and analytics tools

- Expansion into data reliability engineering

How We Selected These Tools (Methodology)

- Market adoption and industry recognition

- Coverage across data quality lifecycle

- Integration with modern data platforms

- Strength of validation and monitoring features

- Automation and analytics capabilities

- Scalability across large datasets

- Ease of deployment and usability

- Vendor innovation and maturity

- Support and documentation quality

- Fit across SMB and enterprise use cases

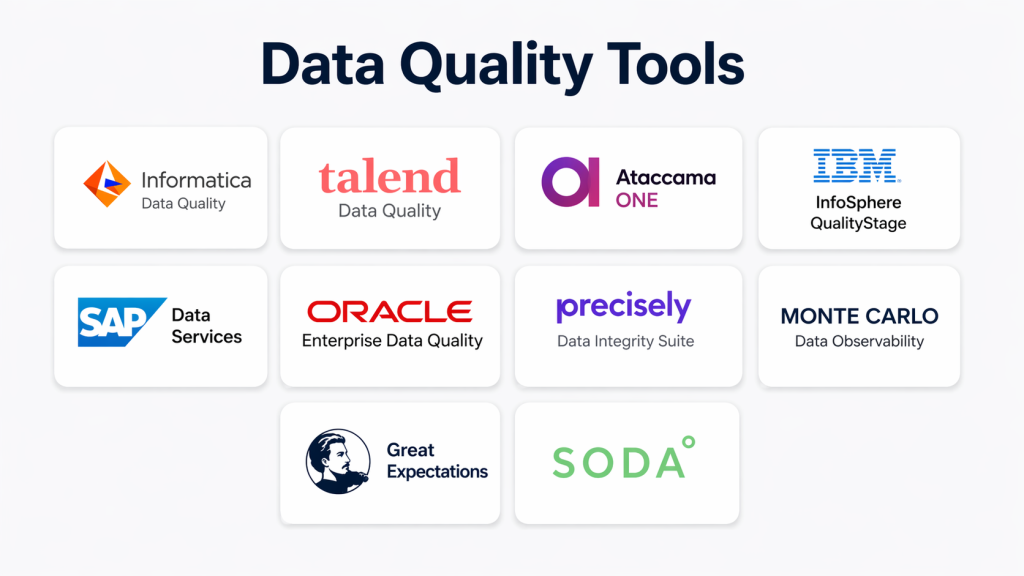

Top 10 Data Quality Tools

#1 — Informatica Data Quality

Short description:

Informatica Data Quality is an enterprise-grade platform for profiling, cleansing, and validating data. Built for large-scale data environments, it offers automation and monitoring, integrates with enterprise systems, scales well, and is widely used by large organizations.

Key Features

- Data profiling

- Data cleansing

- Validation rules

- Monitoring

- Integration
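To make "data profiling" concrete, here is a vendor-neutral sketch in plain Python. It is not Informatica's API. It computes the kind of per-column statistics that profiling tools typically report: null rate, distinct count, and min/max. The function names and sample rows are hypothetical.

```python
from typing import Any


def profile_column(values: list) -> dict:
    """Compute basic profiling statistics for a single column."""
    total = len(values)
    present = [v for v in values if v is not None]
    stats: dict = {
        "count": total,
        "null_rate": (total - len(present)) / total if total else 0.0,
        "distinct": len(set(present)),
    }
    if present:
        # min/max assume the non-null values are mutually comparable
        stats["min"] = min(present)
        stats["max"] = max(present)
    return stats


def profile_table(rows: list) -> dict:
    """Profile every column that appears in any row; missing keys count as nulls."""
    columns = sorted({key for row in rows for key in row})
    return {col: profile_column([row.get(col) for row in rows]) for col in columns}


rows: list[dict[str, Any]] = [
    {"id": 1, "country": "DE", "amount": 120.0},
    {"id": 2, "country": "de", "amount": None},
    {"id": 3, "country": "FR", "amount": 75.5},
    {"id": 3, "country": None, "amount": 75.5},
]
for column, stats in profile_table(rows).items():
    print(column, stats)
```

In this sample, `id` has fewer distinct values than rows, which flags a duplicate key. `DE` and `de` also count as separate countries, a hint that standardization is needed.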

Pros

- Comprehensive capabilities

- Scalable for enterprises

- Strong ecosystem

Cons

- Complex setup

- Expensive

Platforms / Deployment

- Cloud / On-prem

Security & Compliance

- RBAC

- Compliance: Not publicly stated

Integrations & Ecosystem

Integrates with enterprise data platforms and analytics tools.

- Data warehouses

- BI tools

- APIs

Support & Community

Strong enterprise support and global presence.

#2 — Talend Data Quality

Short description:

Talend Data Quality provides tools for profiling, cleansing, and monitoring data. It integrates with Talend pipelines, supports automation, scales well, offers the flexibility of an open-source option, and is widely used.

Key Features

- Data profiling

- Cleansing

- Monitoring

- Integration

- Automation
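Cleansing usually starts with standardization: trimming whitespace, normalizing case, and mapping free-text variants to canonical codes. The sketch below shows those steps in plain Python. It is not Talend's API, and both the `COUNTRY_ALIASES` table and the normalization rules are simplified assumptions.

```python
import re
from typing import Optional

# Hypothetical lookup from free-text country values to ISO 3166-1 alpha-2 codes
COUNTRY_ALIASES = {"germany": "DE", "deutschland": "DE", "de": "DE", "france": "FR", "fr": "FR"}


def standardize_email(raw: Optional[str]) -> Optional[str]:
    """Trim whitespace and lowercase; return None if nothing is left."""
    if raw is None:
        return None
    cleaned = raw.strip().lower()
    return cleaned or None


def standardize_phone(raw: Optional[str]) -> Optional[str]:
    """Keep digits only, preserving a leading '+'; a deliberately naive rule."""
    if raw is None:
        return None
    digits = re.sub(r"\D", "", raw)
    if not digits:
        return None
    return "+" + digits if raw.strip().startswith("+") else digits


def standardize_country(raw: Optional[str]) -> Optional[str]:
    """Map known aliases to a code; pass unknown values through for manual review."""
    if raw is None:
        return None
    return COUNTRY_ALIASES.get(raw.strip().lower(), raw.strip())


record = {"email": "  Jane.Doe@Example.COM ", "phone": "+49 (30) 1234-567", "country": "Germany"}
cleaned = {
    "email": standardize_email(record["email"]),
    "phone": standardize_phone(record["phone"]),
    "country": standardize_country(record["country"]),
}
print(cleaned)  # {'email': 'jane.doe@example.com', 'phone': '+49301234567', 'country': 'DE'}
```

Unknown countries are passed through unchanged rather than guessed, so they can be routed to review. Lowercasing the whole email address is a common pragmatic choice, although RFC 5321 allows the local part (before the @) to be case-sensitive.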

Pros

- Open-source option

- Flexible

- Scalable

Cons

- Learning curve

- Complex interface

Platforms / Deployment

- Cloud / Hybrid

Security & Compliance

- RBAC

- Compliance: Not publicly stated

Integrations & Ecosystem

Talend integrates with data pipelines and cloud platforms.

- Data warehouses

- APIs

Support & Community

Active community and enterprise support.

#3 — Ataccama ONE

Short description:

Ataccama ONE is a unified data quality and governance platform. It offers AI-driven data monitoring, supports automated workflows, integrates with enterprise systems, and scales to suit large organizations.

Key Features

- AI-driven monitoring

- Data profiling

- Automation

- Integration

- Governance
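Governance features often include classifying columns that hold sensitive data. A naive, vendor-neutral version of that idea scans sample values against regular expressions and tags a column when most values match. The patterns, the tag names, and the 80% threshold are assumptions for illustration. This is not how Ataccama classifies data, and real classifiers are considerably more thorough.

```python
import re

# Deliberately simple, hypothetical patterns; production classifiers use far stricter rules
PII_PATTERNS = {
    "email": re.compile(r"^[^@\s]+@[^@\s]+\.[A-Za-z]{2,}$"),
    "us_ssn": re.compile(r"^\d{3}-\d{2}-\d{4}$"),
}


def classify_column(values: list, min_share: float = 0.8) -> list:
    """Return PII tags whose pattern matches at least `min_share` of non-empty values."""
    sample = [v.strip() for v in values if v and v.strip()]
    if not sample:
        return []
    tags = []
    for tag, pattern in PII_PATTERNS.items():
        matches = sum(1 for v in sample if pattern.match(v))
        if matches / len(sample) >= min_share:
            tags.append(tag)
    return tags


table = {
    "contact": ["a@example.com", "b@example.org", "", "c@example.net"],
    "ssn": ["123-45-6789", "987-65-4321"],
    "notes": ["call back", "a@example.com", "n/a"],
}
tags = {column: classify_column(values) for column, values in table.items()}
print(tags)  # {'contact': ['email'], 'ssn': ['us_ssn'], 'notes': []}
```

The `notes` column contains one email address but is not tagged, because a single match falls below the share threshold. That trade-off between false positives and missed values is what the threshold controls.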

Pros

- Strong automation

- Scalable

- Advanced features

Cons

- Cost

- Complex setup

Platforms / Deployment

- Cloud

Security & Compliance

- RBAC

- Compliance: Not publicly stated

Integrations & Ecosystem

Supports integration with enterprise data platforms and APIs.

- Data systems

- APIs

Support & Community

Enterprise support with growing adoption.

#4 — IBM InfoSphere QualityStage

Short description:

IBM InfoSphere QualityStage provides data cleansing and matching capabilities. It supports large-scale data processing, integrates with the IBM ecosystem, and offers the reliability and scalability that suit enterprise use.

Key Features

- Data matching

- Cleansing

- Integration

- Monitoring

- Reporting
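Matching means finding records that refer to the same entity despite spelling differences. The sketch below approximates the idea with Python's standard-library `difflib.SequenceMatcher` after light normalization. It does not show how QualityStage matches records, and the 0.85 threshold is an arbitrary assumption.

```python
from difflib import SequenceMatcher
from itertools import combinations


def normalize(name: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace before comparing."""
    kept = "".join(ch for ch in name.lower() if ch.isalnum() or ch.isspace())
    return " ".join(kept.split())


def candidate_duplicates(names: list, threshold: float = 0.85) -> list:
    """Compare every pair (O(n^2)) and keep pairs whose similarity meets the threshold."""
    pairs = []
    for a, b in combinations(names, 2):
        score = SequenceMatcher(None, normalize(a), normalize(b)).ratio()
        if score >= threshold:
            pairs.append((a, b, round(score, 2)))
    return pairs


customers = ["Acme Corp.", "ACME Corp", "Acme Corporation", "Globex Inc"]
print(candidate_duplicates(customers))  # [('Acme Corp.', 'ACME Corp', 1.0)]
```

Only the first pair clears the threshold. "Acme Corporation" scores 0.72 against both spellings of "Acme Corp", which shows why matching engines combine string similarity with token rules and other fields. Comparing every pair is also quadratic, so record-linkage tools typically compare only records that share a blocking key, such as a postal code.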

Pros

- Strong reliability

- Scalable

- Enterprise-ready

Cons

- Complex

- Expensive

Platforms / Deployment

- On-prem / Cloud

Security & Compliance

- RBAC

- Compliance: Not publicly stated

Integrations & Ecosystem

Integrates with IBM data and analytics tools.

- Data platforms

- APIs

Support & Community

Enterprise-level support.

#5 — SAP Data Services

Short description:

SAP Data Services combines data quality and data integration capabilities, including profiling and cleansing. It integrates with SAP systems, scales to enterprise workloads, and offers strong enterprise features.

Key Features

- Data integration

- Profiling

- Cleansing

- Monitoring

- Reporting
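Where data quality meets data integration, a common check is source-to-target reconciliation. After a load, it confirms that the target holds the same rows as the source. The sketch below compares row counts and an order-independent content fingerprint built from SHA-256 hashes. It is plain Python, not an SAP Data Services feature. It assumes that both sides deliver rows as tuples with identical types and formatting; for example, `75.5` and `Decimal("75.5")` would hash differently.

```python
import hashlib
from typing import Iterable


def fingerprint(rows: Iterable) -> tuple:
    """Return (row_count, order-independent hex digest) for a collection of rows."""
    count = 0
    combined = 0
    for row in rows:
        count += 1
        digest = hashlib.sha256(repr(row).encode("utf-8")).digest()
        # Summing per-row hashes modulo 2**256 makes the result independent of row order
        combined = (combined + int.from_bytes(digest, "big")) % (1 << 256)
    return count, format(combined, "064x")


def reconcile(source_rows: Iterable, target_rows: Iterable) -> dict:
    """Compare row counts and content fingerprints of source and target."""
    src_count, src_digest = fingerprint(source_rows)
    tgt_count, tgt_digest = fingerprint(target_rows)
    return {
        "source_rows": src_count,
        "target_rows": tgt_count,
        "counts_match": src_count == tgt_count,
        "content_match": src_digest == tgt_digest,
    }


source = [(1, "DE", 120.0), (2, "FR", 75.5)]
target_ok = [(2, "FR", 75.5), (1, "DE", 120.0)]   # same rows, different order
target_bad = [(1, "DE", 120.0), (2, "FR", 75.0)]  # one value altered in flight
print(reconcile(source, target_ok))
print(reconcile(source, target_bad))
```

The per-row hashes are summed rather than XORed so that duplicate rows do not cancel each other out. A matching row count alone would not have caught the altered value in `target_bad`.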

Pros

- Strong SAP integration

- Scalable

- Reliable

Cons

- Complex

- Cost

Platforms / Deployment

- Cloud / On-prem

Security & Compliance

- RBAC

- Compliance: Not publicly stated

Integrations & Ecosystem

Integrates with SAP and enterprise systems.

- ERP systems

- APIs

Support & Community

Enterprise support available.

#6 — Oracle Enterprise Data Quality

Short description:

Oracle Enterprise Data Quality provides tools for profiling and cleansing data, along with monitoring and validation. It integrates with the Oracle ecosystem, scales well, and offers strong performance.

Key Features

- Data profiling

- Cleansing

- Monitoring

- Integration

- Reporting

Pros

- Strong performance

- Scalable

- Reliable

Cons

- Complex

- Cost

Platforms / Deployment

- Cloud / On-prem

Security & Compliance

- RBAC

- Compliance: Not publicly stated

Integrations & Ecosystem

Integrates with Oracle databases and applications.

- Databases

- APIs

Support & Community

Enterprise-level support.

#7 — Precisely Data Integrity Suite

Short description:

Precisely provides data quality and integrity solutions with strong governance features. The suite supports data monitoring and validation, integrates with enterprise systems, and scales with reliable performance.

Key Features

- Data validation

- Monitoring

- Integration

- Governance

- Reporting

Pros

- Strong governance

- Scalable

- Reliable

Cons

- Complex

- Cost

Platforms / Deployment

- Cloud

Security & Compliance

- RBAC

- Compliance: Not publicly stated

Integrations & Ecosystem

Supports integration with enterprise systems and APIs.

- Data platforms

- APIs

Support & Community

Enterprise support available.

#8 — Monte Carlo Data Observability

Short description:

Monte Carlo focuses on data observability and quality monitoring. It detects anomalies in data pipelines, provides alerts and insights, integrates with modern data stacks, and scales well.

Key Features

- Data observability

- Anomaly detection

- Monitoring

- Integration

- Alerts
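Anomaly detection in observability tools is often explained with row counts. If today's load falls far outside the recent range, something likely broke upstream. A simple z-score baseline captures the idea. This is an assumption-laden sketch, not Monte Carlo's method. Production systems also model trends and seasonality, and the three-sigma threshold here is only an illustrative default.

```python
from statistics import mean, stdev


def is_anomalous(history: list, latest: int, z_threshold: float = 3.0) -> bool:
    """Flag `latest` if it lies more than z_threshold standard deviations from the history mean."""
    if len(history) < 2:
        return False  # not enough data to estimate the spread
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        return latest != mu  # perfectly stable history: any change is notable
    return abs(latest - mu) / sigma > z_threshold


daily_row_counts = [10_120, 9_980, 10_050, 10_210, 9_940, 10_090, 10_030]
print(is_anomalous(daily_row_counts, 10_070))  # False: a typical day
print(is_anomalous(daily_row_counts, 4_300))   # True: looks like a partial load
```

With this history (mean 10,060, standard deviation about 90), a 4,300-row load sits roughly 64 standard deviations below the mean. Even a much looser threshold would catch it.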

Pros

- Strong monitoring

- Easy to use

- Scalable

Cons

- Limited cleansing features

- Cost

Platforms / Deployment

- Cloud

Security & Compliance

- RBAC

- Compliance: Not publicly stated

Integrations & Ecosystem

Integrates with modern data warehouses and tools.

- Data warehouses

- APIs

Support & Community

Growing ecosystem with good documentation.

#9 — Great Expectations

Short description:

Great Expectations is an open-source data quality tool for validation and testing. Users define expectations (assertions about their data) and validate data against them. It integrates with pipelines, is flexible, and is widely used by developers.

Key Features

- Data validation

- Testing framework

- Integration

- Automation

- Reporting
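Great Expectations is built around declaring expectations about data and validating batches against them. The plain-Python sketch below imitates that pattern to show the mechanics. The helpers `not_null`, `between`, and `in_set` and the result format are hypothetical and are not the Great Expectations API.

```python
from typing import Any, Callable

Check = Callable[[Any], bool]


def not_null() -> Check:
    return lambda v: v is not None


def between(low: float, high: float) -> Check:
    return lambda v: v is None or low <= v <= high  # nulls are left to not_null()


def in_set(allowed: set) -> Check:
    return lambda v: v is None or v in allowed


def validate(rows: list, suite: list) -> list:
    """Run each (name, column, check) in the suite and summarize the failures."""
    results = []
    for name, column, check in suite:
        unexpected = [row.get(column) for row in rows if not check(row.get(column))]
        results.append({"expectation": name, "success": not unexpected,
                        "unexpected_count": len(unexpected)})
    return results


suite = [
    ("order_id not null", "order_id", not_null()),
    ("amount in [0, 10000]", "amount", between(0, 10_000)),
    ("status is known", "status", in_set({"new", "paid", "shipped"})),
]
orders = [
    {"order_id": 1, "amount": 250.0, "status": "paid"},
    {"order_id": None, "amount": 40.0, "status": "new"},
    {"order_id": 3, "amount": 99.0, "status": "lost"},
]
results = validate(orders, suite)
for result in results:
    print(result)
```

Keeping the checks declarative means the same suite can run unchanged against every new batch. The per-expectation summary is the raw material for the reports and alerts such tools produce.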

Pros

- Open-source

- Flexible

- Developer-friendly

Cons

- Requires setup

- Limited UI

Platforms / Deployment

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Supports integration with data pipelines and tools.

- Data pipelines

- APIs

Support & Community

Strong open-source community.

#10 — Soda

Short description:

Soda provides data quality monitoring with a focus on observability. It detects anomalies and other issues, integrates with modern data stacks, scales well, and is easy to use.

Key Features

- Data monitoring

- Anomaly detection

- Integration

- Reporting

- Alerts
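Freshness is one of the most common monitoring checks behind alerts: has the table been loaded recently enough? The sketch below evaluates that question and passes a message to an alert callback. It is plain Python rather than Soda's own check syntax. The table names, the 24-hour limit, and the list-based `alerts` sink are assumptions; in a real deployment, the callback would forward to a notification channel.

```python
from datetime import datetime, timedelta, timezone
from typing import Callable, Optional


def check_freshness(last_loaded_at: datetime, max_age: timedelta,
                    now: Optional[datetime] = None) -> tuple:
    """Return (is_fresh, age) for a table's most recent load timestamp."""
    if now is None:
        now = datetime.now(timezone.utc)
    age = now - last_loaded_at
    return age <= max_age, age


def run_check(table: str, last_loaded_at: datetime, max_age: timedelta,
              alert: Callable[[str], None], now: Optional[datetime] = None) -> bool:
    """Evaluate freshness and send a message to `alert` when the table is stale."""
    fresh, age = check_freshness(last_loaded_at, max_age, now)
    if not fresh:
        alert(f"{table} is stale: last load {age} ago (limit {max_age})")
    return fresh


now = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
alerts: list = []
run_check("orders", now - timedelta(hours=2), timedelta(hours=24), alerts.append, now)
run_check("customers", now - timedelta(days=3), timedelta(hours=24), alerts.append, now)
print(alerts)
```

Injecting `now` and the alert callback keeps the check deterministic and testable. A scheduler would simply call it without `now` so that the current UTC time is used.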

Pros

- Easy to use

- Scalable

- Flexible

Cons

- Limited advanced features

- Smaller ecosystem

Platforms / Deployment

- Cloud

Security & Compliance

- RBAC

- Compliance: Not publicly stated

Integrations & Ecosystem

Integrates with modern data tools and APIs.

- Data warehouses

- APIs

Support & Community

Growing community and support.

Comparison Table

| Tool | Best For | Platform | Deployment | Standout Feature | Rating |
|---|---|---|---|---|---|
| Informatica | Enterprise | Multi | Hybrid | Full suite | N/A |
| Talend | SMB | Multi | Hybrid | Open-source | N/A |
| Ataccama | Enterprise | Cloud | Cloud | AI automation | N/A |
| IBM | Enterprise | Multi | Hybrid | Matching | N/A |
| SAP | Enterprise | Multi | Hybrid | SAP integration | N/A |
| Oracle | Enterprise | Multi | Hybrid | Performance | N/A |
| Precisely | Enterprise | Cloud | Cloud | Governance | N/A |
| Monte Carlo | Modern data stacks | Cloud | Cloud | Observability | N/A |
| Great Expectations | Developers | Multi | Hybrid | Testing | N/A |
| Soda | SMB | Cloud | Cloud | Monitoring | N/A |

Evaluation & Scoring of Data Quality Tools

| Tool | Core | Ease | Integration | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Informatica | 10 | 7 | 9 | 10 | 9 | 9 | 7 | 8.9 |
| Talend | 9 | 8 | 9 | 9 | 8 | 8 | 8 | 8.5 |
| Ataccama | 9 | 7 | 8 | 9 | 9 | 8 | 8 | 8.4 |
| IBM | 9 | 7 | 8 | 9 | 9 | 8 | 7 | 8.2 |
| SAP | 9 | 7 | 8 | 9 | 8 | 8 | 7 | 8.1 |
| Oracle | 9 | 7 | 8 | 9 | 9 | 8 | 7 | 8.2 |
| Precisely | 9 | 7 | 8 | 9 | 8 | 8 | 7 | 8.1 |
| Monte Carlo | 8 | 9 | 8 | 8 | 9 | 8 | 8 | 8.4 |
| Great Expectations | 8 | 7 | 9 | 8 | 8 | 7 | 9 | 8.1 |
| Soda | 8 | 9 | 8 | 8 | 8 | 7 | 9 | 8.3 |

Scores are comparative and cover core capabilities, ease of use, integrations, security, performance, support, and value. Higher scores indicate stronger overall offerings, but the best tool depends on specific business needs and use cases.
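A weighted total is a weighted mean of the criterion scores: the sum of each weight multiplied by its score, divided by the sum of the weights. The weights behind this table are not given, so the sketch below uses hypothetical percentages. Its results (8.75 for Informatica, 8.2 for Soda) therefore do not match the table and serve only to illustrate the arithmetic.

```python
# Hypothetical weights in percent; the weights behind the table's totals are not published
WEIGHTS = {"core": 25, "ease": 15, "integration": 15, "security": 10,
           "performance": 10, "support": 10, "value": 15}


def weighted_total(scores: dict, weights: dict = WEIGHTS) -> float:
    """Weighted mean of 0-10 scores: sum(w_i * s_i) / sum(w_i)."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    return sum(weights[k] * scores[k] for k in weights) / sum(weights.values())


informatica = {"core": 10, "ease": 7, "integration": 9, "security": 10,
               "performance": 9, "support": 9, "value": 7}
soda = {"core": 8, "ease": 9, "integration": 8, "security": 8,
        "performance": 8, "support": 7, "value": 9}
print(weighted_total(informatica), weighted_total(soda))  # 8.75 8.2
```

The weights can change the ranking. Putting all of the weight on Ease and Value gives Soda 9.0 and Informatica 7.0, so substituting your own priorities is a quick way to adapt the table to your situation.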

Which Data Quality Tool Is Right for You

Solo / Freelancer

- Great Expectations

SMB

- Soda

Mid-Market

- Talend, Monte Carlo

Enterprise

- Informatica, Ataccama, SAP

Budget vs Premium

- Budget option is Great Expectations

- Premium option is Informatica

Feature Depth vs Ease of Use

- Easy option is Soda

- Advanced option is Informatica

Integrations & Scalability

- Strong integration offered by Talend

Security & Compliance Needs

- Enterprise-grade option is Informatica

Frequently Asked Questions

1. What are Data Quality Tools?

Data quality tools are solutions that help organizations maintain accurate, reliable data. They identify errors and inconsistencies so that data can be trusted for analytics and decision-making.

2. Why are Data Quality Tools important?

Poor data leads to incorrect insights and business risks. These tools maintain accuracy, improve reporting and analytics, and support compliance and trust in data.

3. How do Data Quality Tools work?

They scan datasets and validate them against rules and checks, detect anomalies, and report the results through alerts and reports.
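One common way to embed these checks in a pipeline is validate-and-quarantine. Rows that pass every rule are loaded, and rows that fail are set aside with the names of the rules they broke, which then feeds alerts and reports. The rules and field names in this minimal sketch are hypothetical.

```python
from typing import Any, Callable

# Hypothetical rules: (rule name, predicate that returns True when a row passes)
RULES: list[tuple[str, Callable[[dict], bool]]] = [
    ("email present", lambda r: bool(r.get("email"))),
    ("age in range", lambda r: isinstance(r.get("age"), int) and 0 <= r["age"] <= 120),
]


def split_batch(rows: list) -> tuple:
    """Route rows that pass every rule to `valid`; others go to `quarantine` with reasons."""
    valid, quarantine = [], []
    for row in rows:
        failed = [name for name, rule in RULES if not rule(row)]
        if failed:
            quarantine.append({"row": row, "failed_rules": failed})
        else:
            valid.append(row)
    return valid, quarantine


batch: list[dict[str, Any]] = [
    {"email": "a@example.com", "age": 34},
    {"email": "", "age": 29},
    {"email": "c@example.com", "age": 430},
]
valid, quarantine = split_batch(batch)
print(f"{len(valid)} loaded, {len(quarantine)} quarantined")  # 1 loaded, 2 quarantined
for item in quarantine:
    print(item["failed_rules"], item["row"])
```

Quarantining, rather than dropping, bad rows keeps them available for correction and reprocessing. It also makes the failure counts per rule easy to report.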

4. Who should use Data Quality Tools?

Data engineers, analysts, and enterprises use these tools to improve data reliability. Organizations with large datasets benefit most.

5. Are Data Quality Tools scalable?

Most are designed to handle large datasets and cloud environments, so they can grow with the business and keep data quality consistent across systems.

6. Do Data Quality Tools integrate with other tools?

Yes. They typically integrate with data warehouses, pipelines, and analytics platforms, which streamlines workflows and data management.

7. Are Data Quality Tools secure?

Most include access controls and monitoring, but the level of protection depends on proper configuration. Used well, they reduce data risk.

8. Are Data Quality Tools difficult to implement?

Some tools are easy to use, while others require specialized expertise. Setup effort depends on the complexity of your data environment, and careful planning improves the chances of success.

9. What are alternatives to Data Quality Tools?

Alternatives include manual validation and the checks built into ETL tools. These are usually less efficient; dedicated data quality tools add automation and consistency.

10. Are Data Quality Tools expensive?

Pricing varies by feature set and scale. Open-source options such as Great Expectations are available, while enterprise platforms can be costly, so the right investment depends on your needs.

Conclusion

Data Quality Tools play a crucial role in ensuring that organizations can trust their data for analytics, reporting, and decision-making. As businesses increasingly rely on data-driven strategies, maintaining high data quality is no longer optional but essential. These tools help automate validation, detect anomalies, and ensure consistency across complex data environments.

Choosing the right data quality solution depends on your organization's scale, technical expertise, and integration requirements. Enterprise platforms like Informatica and Ataccama offer comprehensive capabilities, while tools like Soda and Great Expectations provide flexibility and ease of use for smaller teams. The best approach is to shortlist a few tools, test them in real-world scenarios, and ensure they align with your data strategy before making a final decision.