Introduction

Batch processing frameworks are systems designed to process large volumes of data in groups (batches) rather than in real time. Instead of handling data as it arrives, these frameworks collect data over a period and process it at scheduled intervals. This approach is ideal for workloads that require heavy computation, historical analysis, and cost-efficient data processing.

Batch processing remains a critical part of modern data infrastructure, especially for analytics, reporting, and large-scale transformations. While real-time systems are growing in adoption, batch processing continues to power many core business operations due to its reliability and scalability.

Real-world use cases include:

- Data warehousing and ETL pipelines

- Financial reporting and reconciliation

- Log processing and historical analysis

- Machine learning model training

- Large-scale data transformations

What buyers should evaluate:

- Processing performance and scalability

- Ease of scheduling and orchestration

- Integration with data storage systems

- Fault tolerance and reliability

- Cost efficiency for large workloads

- Support for distributed computing

- Developer experience and APIs

- Deployment flexibility

- Monitoring and debugging tools

- Ecosystem and community support

Best for: Data engineers, analytics teams, enterprises handling large datasets, and organizations focused on historical data processing.

Not ideal for: Applications requiring instant insights or real-time decision-making.

Key Trends in Batch Processing Frameworks

- Convergence of batch and stream processing models

- Increased adoption of cloud-native batch systems

- Integration with data lakes and lakehouse architectures

- Automation in data pipelines and orchestration

- Support for AI/ML workflows and large-scale training

- Serverless batch processing services

- Improved cost optimization through resource scaling

- Enhanced monitoring and observability

- Declarative data pipeline development

- Hybrid architectures combining batch and real-time

How We Selected These Tools (Methodology)

The frameworks were selected based on:

- Industry adoption and maturity

- Performance in large-scale batch workloads

- Feature completeness and flexibility

- Integration with modern data ecosystems

- Scalability and fault tolerance

- Developer experience and usability

- Deployment options (cloud, on-prem, hybrid)

- Community and ecosystem strength

- Innovation in data processing

- Overall cost-value balance

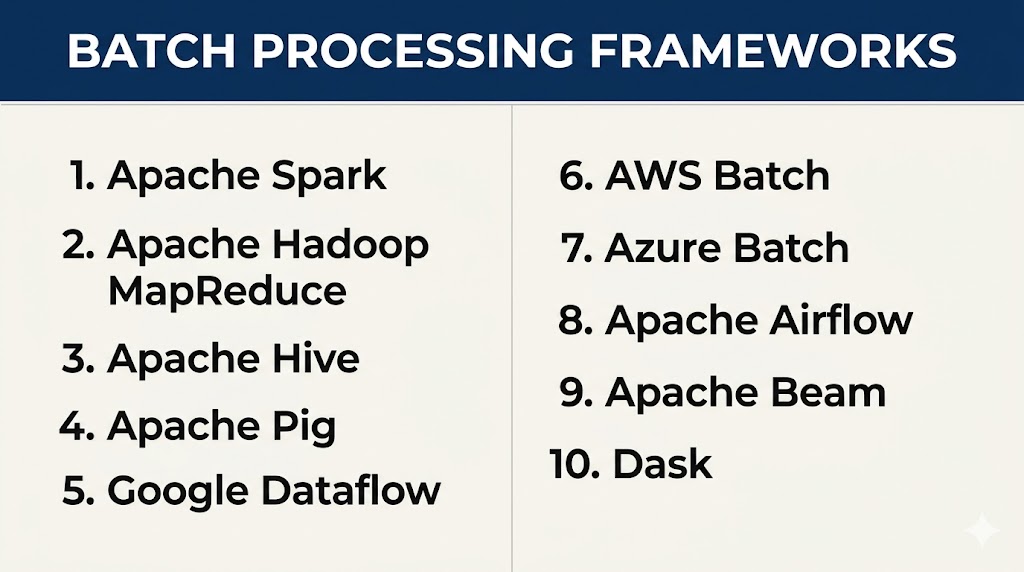

Top 10 Batch Processing Frameworks and Tools

#1 — Apache Hadoop MapReduce

Short description: A foundational batch processing framework for distributed data processing across large clusters.

Key Features

- Distributed processing model

- Fault tolerance

- Scalable architecture

- Data locality optimization

- Integration with Hadoop ecosystem

- Batch-oriented processing
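To make the distributed processing model concrete, here is a minimal single-machine sketch of the map, shuffle, and reduce phases using a word count. It is plain Python rather than the Hadoop API, and the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) are illustrative assumptions. On a real cluster the framework runs these phases in parallel across nodes.

```python
from collections import defaultdict
from typing import Iterable, Iterator


def map_phase(line: str) -> Iterator[tuple[str, int]]:
    """Emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle_phase(pairs: Iterable[tuple[str, int]]) -> dict[str, list[int]]:
    """Group all emitted values by key, as happens between map and reduce."""
    grouped: dict[str, list[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key: str, values: list[int]) -> tuple[str, int]:
    """Collapse all values for one key into a single count."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> dict[str, int]:
    """Chain the three phases over an in-memory list of lines."""
    mapped = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


result = word_count(["big data big batch", "batch jobs"])
print(result)
```

Because each map call sees only one line and each reduce call sees only one key, both phases can be split across many machines, which is the property the framework relies on.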

Pros

- Highly reliable for large datasets

- Mature ecosystem

Cons

- Slower than in-memory engines such as Spark, because intermediate results are written to disk between stages

- Complex setup

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- HDFS

- Hive

- Pig

Support & Community

Strong legacy community support.

#2 — Apache Spark

Short description: A fast, in-memory data processing engine supporting batch and stream workloads.

Key Features

- In-memory processing

- Distributed computing

- SQL support

- Machine learning libraries

- High scalability

- Unified processing engine

Pros

- Typically faster than MapReduce, especially for iterative jobs that reuse in-memory data

- Rich ecosystem

Cons

- Memory intensive

- Requires tuning

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Hadoop (HDFS, YARN)

- Databases via JDBC

- APIs for Scala, Java, Python, R, and SQL

Support & Community

Very strong global community.

#3 — Apache Hive

Short description: A data warehouse system built on Hadoop for batch querying and analytics.

Key Features

- SQL-like query language (HiveQL)

- Batch data processing

- Integration with Hadoop

- Data warehousing capabilities

- Scalable queries
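The kind of batch query this enables can be sketched locally. Hive itself needs a Hadoop cluster, so the example below uses Python's built-in `sqlite3` module purely as a stand-in; the `page_views` table and its columns are hypothetical. The point is the shape of the work: scan a whole table, group, and aggregate in one scheduled run.

```python
import sqlite3

# In-memory SQLite database used only as a local stand-in for a Hive table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE page_views (view_date TEXT, country TEXT, views INTEGER)")
conn.executemany(
    "INSERT INTO page_views VALUES (?, ?, ?)",
    [
        ("2024-01-01", "US", 120),
        ("2024-01-01", "DE", 45),
        ("2024-01-02", "US", 150),
        ("2024-01-02", "DE", 60),
    ],
)

# A typical batch-style aggregate: full scan, group by day, sum the metric.
daily_totals = conn.execute(
    """
    SELECT view_date, SUM(views) AS total_views
    FROM page_views
    GROUP BY view_date
    ORDER BY view_date
    """
).fetchall()
conn.close()

print(daily_totals)
```

A similar `SELECT ... GROUP BY` statement would be familiar to SQL users working in Hive, although dialect details and execution behavior differ.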

Pros

- Easy for SQL users

- Strong integration with Hadoop

Cons

- High latency

- Not suitable for real-time

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Hadoop

- Data warehouses

- BI tools

Support & Community

Established community support.

#4 — Apache Pig

Short description: A high-level platform for creating batch processing programs on Hadoop using the Pig Latin scripting language.

Key Features

- Data flow scripting

- Simplified programming model

- Integration with Hadoop

- Batch processing

- Extensible functions

Pros

- Easier than MapReduce

- Flexible scripting

Cons

- Declining usage

- Limited modern support

Platforms / Deployment

Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Hadoop

- Data pipelines

Support & Community

Limited but stable community.

#5 — Google Dataflow

Short description: A managed Google Cloud service that runs Apache Beam pipelines for batch and stream data processing using a unified model.

Key Features

- Managed infrastructure

- Auto-scaling

- Unified processing model

- High reliability

- Pipeline abstraction

Pros

- Easy to use

- No infrastructure management

Cons

- Cloud dependency

- Pricing complexity

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Google Cloud services such as BigQuery, Cloud Storage, and Pub/Sub

- Apache Beam SDKs

Support & Community

Strong enterprise support.

#6 — AWS Batch

Short description: A fully managed service for running batch computing workloads on AWS.

Key Features

- Job scheduling

- Auto-scaling

- Container-based execution

- Resource optimization

- Integration with AWS services

Pros

- Fully managed

- Scalable infrastructure

Cons

- AWS lock-in

- Setup complexity

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- AWS services

- Containers

Support & Community

Strong support ecosystem.

#7 — Azure Batch

Short description: A cloud service for running large-scale parallel batch jobs.

Key Features

- Parallel processing

- Job scheduling

- Auto-scaling

- Integration with Azure

- High performance

Pros

- Scalable

- Easy integration

Cons

- Limited outside Azure

- Configuration complexity

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Azure services

- APIs

Support & Community

Enterprise-level support.

#8 — Apache Oozie

Short description: A workflow scheduler system for managing Hadoop batch jobs.

Key Features

- Workflow scheduling

- Job coordination

- Integration with Hadoop

- Automation of pipelines

- Dependency management
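Dependency management in a workflow scheduler boils down to running each job only after its upstream jobs have finished. Oozie defines workflows in XML, so the following is not Oozie configuration. It is a small Python sketch of the underlying idea using the standard library's `graphlib` (Python 3.9+), with hypothetical job names.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical workflow: each key is a job, each value is the set of jobs it waits for.
workflow = {
    "extract": set(),
    "clean": {"extract"},
    "aggregate": {"clean"},
    "load_warehouse": {"aggregate"},
    "send_report": {"aggregate"},
}


def run_workflow(dependencies: dict[str, set[str]]) -> list[str]:
    """Visit every job after all of its upstream jobs and return the order used."""
    executed: list[str] = []
    for job in TopologicalSorter(dependencies).static_order():
        # A real scheduler would launch the job here and wait for it to finish.
        executed.append(job)
    return executed


order = run_workflow(workflow)
print(order)
```

`load_warehouse` and `send_report` both depend only on `aggregate`, so a scheduler is free to run them in either order or in parallel.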

Pros

- Strong scheduling capabilities

- Reliable for Hadoop workflows

Cons

- Complex configuration

- Limited modern features

Platforms / Deployment

Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Hadoop ecosystem

- Batch pipelines

Support & Community

Moderate community support.

#9 — Luigi

Short description: A Python-based workflow management system for batch processing pipelines.

Key Features

- Pipeline orchestration

- Dependency management

- Task scheduling

- Monitoring capabilities

- Python-based workflows
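In Luigi, a task declares what it requires and what output it produces, and a task whose output already exists is treated as complete. The sketch below imitates that idea in plain Python so it runs without installing Luigi. `FileTask` and `build` are hypothetical helpers written for this illustration, not Luigi's actual classes.

```python
import tempfile
from pathlib import Path
from typing import Optional


class FileTask:
    """Hypothetical stand-in for a pipeline task; not Luigi's actual class."""

    def __init__(self, output: Path, upstream: Optional[list["FileTask"]] = None):
        self.output = output
        self.upstream = upstream or []

    def complete(self) -> bool:
        # A task counts as done once its output file exists.
        return self.output.exists()

    def run(self) -> None:
        # Concatenate upstream outputs and append this task's own marker line.
        inputs = [task.output.read_text() for task in self.upstream]
        self.output.write_text("".join(inputs) + f"{self.output.name}\n")


def build(task: FileTask, log: list[str]) -> None:
    """Run upstream tasks first, skipping anything whose output already exists."""
    if task.complete():
        return
    for dependency in task.upstream:
        build(dependency, log)
    task.run()
    log.append(task.output.name)


workdir = Path(tempfile.mkdtemp())
raw = FileTask(workdir / "raw.txt")
report = FileTask(workdir / "report.txt", upstream=[raw])

first_run: list[str] = []
build(report, first_run)   # runs raw.txt, then report.txt

second_run: list[str] = []
build(report, second_run)  # nothing to do: both outputs already exist

print(first_run, second_run)
```

Checking for existing outputs is what makes reruns cheap: after a failure, only the tasks that did not finish need to run again.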

Pros

- Easy to use for developers

- Lightweight

Cons

- Limited scalability compared to enterprise tools

- Basic UI

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Python ecosystem

- Data pipelines

Support & Community

Active developer community.

#10 — Azkaban

Short description: A batch workflow job scheduler designed for managing complex data pipelines.

Key Features

- Workflow scheduling

- Dependency management

- Job execution tracking

- Scalable pipelines

- Web-based UI

Pros

- Easy workflow management

- Reliable scheduling

Cons

- Limited features compared to modern tools

- Smaller ecosystem

Platforms / Deployment

Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Hadoop

- Data pipelines

Support & Community

Moderate community support.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Hadoop MapReduce | Large-scale processing | Multi-platform | Cloud/Self-hosted | Distributed computing | N/A |
| Apache Spark | Fast batch processing | Multi-platform | Cloud/Self-hosted | In-memory speed | N/A |
| Apache Hive | Data warehousing | Multi-platform | Cloud/Self-hosted | SQL queries | N/A |
| Apache Pig | Scripting pipelines | Multi-platform | Self-hosted | Data flow scripts | N/A |
| Dataflow | Managed pipelines | Web | Cloud | Auto-scaling | N/A |
| AWS Batch | Cloud batch jobs | Web | Cloud | Managed compute | N/A |
| Azure Batch | Parallel workloads | Web | Cloud | Job scheduling | N/A |
| Apache Oozie | Workflow scheduling | Multi-platform | Self-hosted | Pipeline automation | N/A |
| Luigi | Python pipelines | Multi-platform | Cloud/Self-hosted | Task orchestration | N/A |
| Azkaban | Job scheduling | Multi-platform | Self-hosted | Workflow tracking | N/A |

Evaluation & Scoring of Batch Processing Frameworks

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Hadoop | 8 | 5 | 8 | 6 | 7 | 8 | 9 | 7.40 |
| Spark | 10 | 7 | 10 | 7 | 9 | 9 | 8 | 8.75 |
| Hive | 7 | 8 | 8 | 6 | 6 | 8 | 8 | 7.35 |
| Pig | 6 | 7 | 6 | 5 | 6 | 6 | 7 | 6.20 |
| Dataflow | 8 | 9 | 8 | 7 | 8 | 8 | 7 | 7.90 |
| AWS Batch | 8 | 8 | 8 | 7 | 8 | 8 | 7 | 7.75 |
| Azure Batch | 8 | 8 | 8 | 7 | 8 | 8 | 7 | 7.75 |
| Oozie | 6 | 6 | 7 | 5 | 6 | 7 | 7 | 6.30 |
| Luigi | 7 | 9 | 7 | 5 | 7 | 7 | 8 | 7.25 |
| Azkaban | 7 | 7 | 7 | 5 | 7 | 7 | 7 | 6.80 |
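For readers who want to rerun the scoring with their own weights, the weighted total is simply the sum of each category score multiplied by its weight. The sketch below uses the weights from the table header and the Spark row as input. The dictionary keys and the `weighted_total` function name are illustrative choices.

```python
# Category weights taken from the table header; they sum to 1.0.
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores: dict[str, float]) -> float:
    """Return the weighted sum of per-category scores (each on a 0-10 scale)."""
    return sum(WEIGHTS[category] * scores[category] for category in WEIGHTS)


# Scores from the Spark row of the table.
spark = {
    "core": 10,
    "ease": 7,
    "integrations": 10,
    "security": 7,
    "performance": 9,
    "support": 9,
    "value": 8,
}

spark_total = weighted_total(spark)
print(round(spark_total, 2))
```

For Spark this works out to about 8.75, which matches the table after rounding. Changing the values in `WEIGHTS` (while keeping them summing to 1.0) shows how sensitive the ranking is to your own priorities.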

How to interpret scores:

- Scores are comparative within this category

- Higher scores indicate better overall capability

- Performance-heavy tools rank higher in core features

- Managed services rank higher in ease of use

- Choose based on workload complexity and team expertise

Which Batch Processing Framework Is Right for You?

Solo / Freelancer

- Best: Luigi

- Simple and developer-friendly

SMB

- Best: Spark, Dataflow

- Balanced performance and usability

Mid-Market

- Best: AWS Batch, Azure Batch

- Scalable cloud solutions

Enterprise

- Best: Spark, Hadoop

- High-scale and complex workloads

Budget vs Premium

- Budget: Hadoop, Spark (open-source)

- Premium: Managed cloud services

Feature Depth vs Ease of Use

- Depth: Spark, Hadoop

- Ease: Dataflow, Luigi

Integrations & Scalability

- Strong: Spark, Hadoop

- Moderate: Cloud services

Security & Compliance Needs

- Cloud platforms offer built-in controls

- Self-hosted tools require configuration

Frequently Asked Questions (FAQs)

What is batch processing?

Batch processing is a method of processing large volumes of data at scheduled intervals instead of in real time. It is commonly used for tasks like reporting, analytics, and data transformations. This approach is efficient for handling massive datasets where immediate results are not required.

How is batch processing different from real-time processing?

Batch processing works on collected data over time, while real-time processing handles data instantly as it arrives. Batch is ideal for historical analysis, whereas real-time is better for immediate insights. Many modern systems combine both approaches for flexibility.
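The difference can be illustrated with a small Python sketch over the same hypothetical order events. The real-time style function produces an updated result after every event, while the batch style function waits until the events are collected and then processes one whole day at a time. The event data and function names are assumptions made for this example.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical order events: (ISO timestamp, amount).
events = [
    ("2024-03-01T09:15:00", 20.0),
    ("2024-03-01T17:40:00", 35.0),
    ("2024-03-02T08:05:00", 10.0),
]


def process_stream(stream):
    """Real-time style: update the result immediately for each arriving event."""
    running_total = 0.0
    for _, amount in stream:
        running_total += amount
        yield running_total  # a fresh result is available after every event


def process_batches(collected):
    """Batch style: collect events first, then process one whole day at a time."""
    by_day = defaultdict(list)
    for timestamp, amount in collected:
        day = datetime.fromisoformat(timestamp).date().isoformat()
        by_day[day].append(amount)
    return {day: sum(amounts) for day, amounts in sorted(by_day.items())}


stream_results = list(process_stream(events))
batch_results = process_batches(events)
print(stream_results)
print(batch_results)
```

The batch version does nothing until a day's data is complete, which is why it suits scheduled reporting rather than instant alerts.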

Which batch processing framework is best?

There is no single best framework, as the choice depends on your data size, infrastructure, and team expertise. Apache Spark is widely preferred for performance, while cloud services offer ease of use. Evaluating scalability and integration needs is important.

Do I need programming skills to use these tools?

Yes, most batch processing frameworks require coding knowledge, especially in languages like Python, Java, or Scala. Some tools provide simplified interfaces, but technical expertise is still helpful. Data engineers typically manage these systems.

Can batch processing handle big data?

Yes, batch processing frameworks are specifically designed to handle large-scale datasets efficiently. They use distributed computing to process data across multiple nodes. This makes them suitable for enterprise-level workloads.

Are batch processing frameworks expensive?

Costs vary depending on the tool and deployment model. Open-source frameworks are free to license but still require infrastructure and maintenance. Cloud-based solutions may cost more per job but reduce operational overhead.

Can batch processing tools integrate with other systems?

Yes, most frameworks integrate with databases, data lakes, and analytics tools. Integration is essential for building complete data pipelines. A strong ecosystem improves flexibility and scalability.

What industries use batch processing?

Industries like finance, healthcare, retail, and technology use batch processing extensively. It is commonly used for reporting, compliance, and large-scale data analysis. Any business handling large datasets can benefit from it.

What is the main advantage of batch processing?

The main advantage is efficiency in processing large volumes of data at lower cost. It allows complex computations without requiring real-time resources. This makes it ideal for heavy data workloads.

Can batch and real-time processing be used together?

Yes, many modern architectures combine batch and real-time processing for better flexibility. This approach is often called a hybrid or lambda architecture. It allows businesses to balance speed and depth of analysis.
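As a rough illustration of the lambda pattern, the sketch below keeps a precomputed batch view and a small real-time view, then merges them at query time. It is a toy in-memory model with hypothetical page-view data, not a production design. In practice the batch view would come from a scheduled job and the real-time view from a stream processor.

```python
from collections import Counter

# Batch view: counts precomputed by the last scheduled batch job (hypothetical data).
batch_view = Counter({"/home": 1000, "/pricing": 250})

# Speed layer: counts for events that arrived after that batch run.
realtime_view = Counter()


def record_event(page: str) -> None:
    """Speed layer: update the real-time view as each event arrives."""
    realtime_view[page] += 1


def query(page: str) -> int:
    """Serving layer: combine the batch view with the recent real-time delta."""
    return batch_view[page] + realtime_view[page]


for page in ["/home", "/home", "/docs"]:
    record_event(page)

print(query("/home"), query("/pricing"), query("/docs"))
```

When the next batch run finishes, its output replaces `batch_view` and the real-time view is reset, so the precise but slower batch result periodically takes over from the fast approximation.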

Conclusion

Batch processing frameworks continue to play a vital role in modern data ecosystems, especially for handling large-scale data workloads efficiently. They are ideal for tasks that require deep analysis, historical insights, and cost-effective processing. While real-time systems are gaining popularity, batch processing remains essential for core business operations. Choosing the right framework depends on your data volume, technical expertise, and infrastructure needs. Open-source tools offer flexibility and control, while managed cloud services simplify scaling and operations. Performance and reliability should always be validated through real-world testing. Integration capabilities are critical for building complete data pipelines across systems. Cost planning should include infrastructure, maintenance, and long-term scalability. Security and compliance must align with your organizational requirements. A well-evaluated framework ensures efficient processing, better insights, and long-term success in data-driven environments.