Introduction

LLM orchestration frameworks are tools that help developers connect large language models (LLMs) with data, APIs, tools, and workflows to build real-world AI applications. Rather than leaving every model call to hand-written glue code, these frameworks manage prompt flows, memory, tool usage, and multi-step reasoning pipelines.

In simple terms, they act as the “control layer” that turns raw AI models into usable systems like chatbots, copilots, and autonomous agents. They handle complex tasks such as chaining multiple model calls, retrieving external data, and coordinating multi-agent workflows.
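
To make the idea of chaining model calls concrete, here is a minimal sketch in plain Python. It assumes a hypothetical `fake_llm` function standing in for a real model call and a hypothetical `run_chain` helper; neither belongs to any framework covered in this article.

```python
from typing import Callable, List

# Hypothetical stand-in for a real model call; swap in an actual client.
def fake_llm(prompt: str) -> str:
    return f"[model output for: {prompt}]"

# A step takes the previous step's text and returns new text.
Step = Callable[[str], str]

def summarize(text: str) -> str:
    return fake_llm(f"Summarize: {text}")

def translate(text: str) -> str:
    return fake_llm(f"Translate to French: {text}")

def run_chain(steps: List[Step], user_input: str) -> str:
    """Feed the output of each step into the next one."""
    result = user_input
    for step in steps:
        result = step(result)
    return result

if __name__ == "__main__":
    print(run_chain([summarize, translate], "Quarterly sales rose 12%."))
```

Real frameworks layer retries, tracing, and branching on top of this loop, but the core pattern is the same: each step consumes the previous step's output.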

As AI-powered apps move from prototype to production, orchestration frameworks have become a common foundation for building scalable and reliable systems.

Real-world use cases include:

- Retrieval-Augmented Generation (RAG) systems

- AI copilots and assistants

- Multi-agent automation workflows

- Document search and knowledge systems

- API-connected AI applications

What buyers should evaluate:

- Workflow orchestration capabilities

- Data integration (vector DBs, APIs, files)

- Multi-agent support

- Ease of use vs flexibility

- Performance and latency overhead

- Debugging and observability tools

- Deployment options (cloud/local)

- Ecosystem and integrations

- Security and governance

- Cost and scalability

Best for: Developers, AI engineers, startups, and enterprises building AI-powered applications.

Not ideal for: Simple use cases where direct API calls are enough, or teams without technical expertise.

Key Trends in LLM Orchestration Frameworks

- Shift toward agent-based orchestration systems

- Rise of RAG-first architectures for data-driven AI

- Growth of multi-agent collaboration frameworks

- Increasing focus on observability and debugging tools

- Hybrid stacks combining multiple frameworks together

- Expansion of open-source ecosystems

- API-first design for embedding AI into products

- Performance optimization (lower latency, token efficiency)

- Enterprise demand for secure and governed AI workflows

- Movement toward custom orchestration layers for production

How We Selected These Tools (Methodology)

We selected these frameworks based on:

- Market adoption and developer usage

- Feature completeness (RAG, agents, pipelines)

- Integration ecosystem (APIs, tools, vector DBs)

- Performance and scalability

- Flexibility vs ease of use

- Open-source vs enterprise availability

- Community and documentation strength

- Production readiness

- Innovation in orchestration patterns

- Value across different use cases

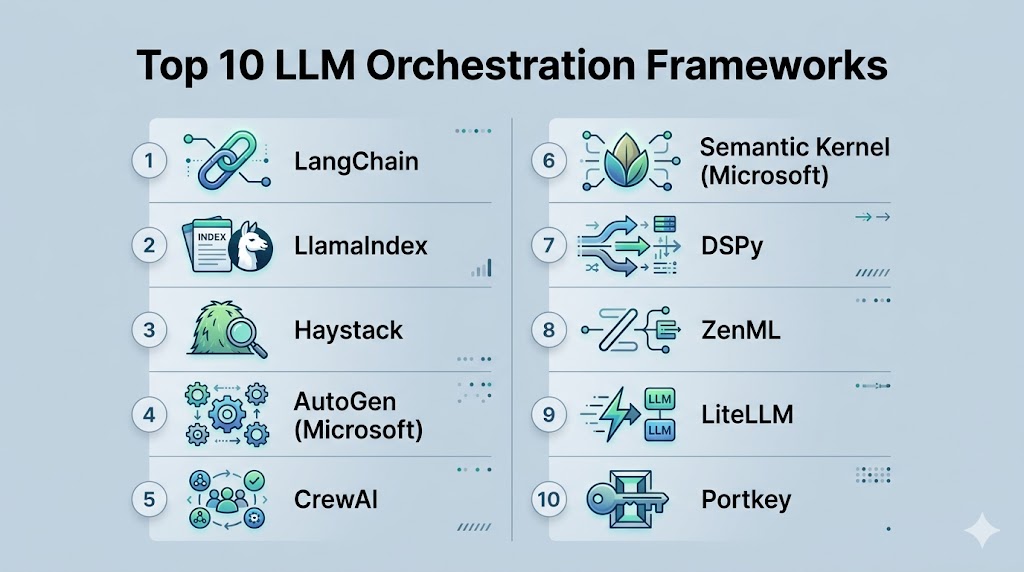

Top 10 LLM Orchestration Frameworks

#1 — LangChain

Short description: One of the most widely adopted orchestration frameworks for building LLM-powered applications and agent workflows.

Key Features

- Chain-based workflows

- Tool and API integrations

- Memory management

- Agent frameworks (LangGraph)

- Large ecosystem

- Observability tools

Pros

- Extremely flexible

- Massive ecosystem

Cons

- Complex architecture

- Higher overhead

Platforms / Deployment

Python / JavaScript / Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Extensive integrations across LLMs, vector stores, and APIs.

- Pinecone, FAISS, Weaviate

- OpenAI, Anthropic, Google

- Databases and APIs

Support & Community

Very large global developer community

#2 — LlamaIndex

Short description: A framework focused on connecting LLMs to structured and unstructured data for RAG systems.

Key Features

- Data ingestion pipelines

- Advanced indexing strategies

- Retrieval optimization

- RAG workflows

- Document processing

- Query engines

Pros

- Best for data-heavy use cases

- Strong retrieval performance

Cons

- Limited orchestration flexibility

- Often used with other frameworks

Platforms / Deployment

Python / Cloud / Local

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Strong integrations for data sources and vector databases.

- Document loaders

- Vector DBs

- APIs

Support & Community

Large and growing community

#3 — Haystack

Short description: A production-focused framework for building NLP pipelines and search systems.

Key Features

- Pipeline-based architecture

- Typed components

- RAG support

- Search and QA systems

- Evaluation tools

- Modular design

Pros

- Strong for enterprise use

- High reliability

Cons

- Smaller ecosystem

- Learning curve

Platforms / Deployment

Python / Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Elasticsearch

- Vector databases

- APIs

Support & Community

Enterprise-focused community

#4 — Semantic Kernel

Short description: A Microsoft-backed framework combining orchestration and agent capabilities.

Key Features

- Memory management

- Plugin system

- Multi-agent orchestration

- .NET, Python, and Java support

- Workflow automation

Pros

- Strong enterprise integration

- Structured architecture

Cons

- Best for Microsoft stack

- Less flexible outside ecosystem

Platforms / Deployment

Cloud / Local

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- Azure services

- APIs

Support & Community

Enterprise-grade support

#5 — AutoGen

Short description: A framework designed for building multi-agent systems with collaborative workflows.

Key Features

- Multi-agent communication

- Task delegation

- Conversational workflows

- Tool integration

- Automation

Pros

- Strong agent collaboration

- Flexible

Cons

- Developer-focused

- Setup complexity

Platforms / Deployment

Cloud / Local

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- APIs

- Developer tools

Support & Community

Growing adoption

#6 — CrewAI

Short description: A lightweight framework for building role-based AI agent teams.

Key Features

- Role-based agents

- Workflow orchestration

- Tool integration

- Task automation

- Simple architecture

Pros

- Easy to start

- Good for multi-agent workflows

Cons

- Limited enterprise features

- Smaller ecosystem

Platforms / Deployment

Cloud / Local

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- APIs

- Workflow tools

Support & Community

Active open-source community

#7 — DSPy

Short description: A research-driven framework focused on optimizing LLM pipelines programmatically.

Key Features

- Prompt optimization

- Declarative programming

- Pipeline tuning

- Model-agnostic design

- Performance optimization
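
The idea of optimizing prompts programmatically can be illustrated without DSPy itself. The sketch below is a deliberately simplified stand-in, not DSPy's API: it scores a few candidate prompt templates against a tiny labeled set and keeps the best one. `toy_model`, the templates, and the dataset are all hypothetical.

```python
from typing import Callable, List, Tuple

# Hypothetical model: it only answers tersely when asked for one word.
def toy_model(prompt: str) -> str:
    question = prompt.split("Q:")[-1].strip()
    answers = {"2+2": "4", "capital of France": "Paris"}
    answer = answers.get(question, "unknown")
    if "one word" in prompt:
        return answer
    return f"Well, I think the answer might be {answer}."

def accuracy(template: str, model: Callable[[str], str],
             dataset: List[Tuple[str, str]]) -> float:
    """Fraction of examples where the output exactly matches the label."""
    hits = sum(model(template.format(q=q)) == label for q, label in dataset)
    return hits / len(dataset)

def pick_best_template(templates: List[str], model: Callable[[str], str],
                       dataset: List[Tuple[str, str]]) -> str:
    """Return the template with the highest exact-match accuracy."""
    return max(templates, key=lambda t: accuracy(t, model, dataset))

TEMPLATES = [
    "Please answer. Q: {q}",
    "Answer in one word. Q: {q}",
]
DATASET = [("2+2", "4"), ("capital of France", "Paris")]

if __name__ == "__main__":
    print(pick_best_template(TEMPLATES, toy_model, DATASET))
```

The point of the sketch is the loop of "propose, score against a metric, keep the winner"; an actual optimizer would search a much larger space than two hand-written templates.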

Pros

- High efficiency

- Research-backed

Cons

- Not beginner-friendly

- Smaller ecosystem

Platforms / Deployment

Python / Local

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- ML frameworks

- APIs

Support & Community

Academic and developer community

#8 — LiteLLM

Short description: A lightweight framework for managing multiple LLM APIs through a unified interface.

Key Features

- API abstraction layer

- Multi-model support

- Cost tracking

- Routing and fallback

- Logging
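
Routing and fallback can be pictured as trying providers in priority order until one succeeds. The following sketch uses plain Python rather than LiteLLM's own interface; the provider stubs and the `call_with_fallback` helper are hypothetical names chosen for illustration.

```python
from typing import Callable, List, Tuple

class ProviderError(Exception):
    """Raised by a provider stub to simulate an outage or rate limit."""

# Hypothetical provider stubs; real code would call each vendor's SDK.
def primary_provider(prompt: str) -> str:
    raise ProviderError("primary is rate limited")

def backup_provider(prompt: str) -> str:
    return f"backup answer to: {prompt}"

def call_with_fallback(
    providers: List[Tuple[str, Callable[[str], str]]], prompt: str
) -> Tuple[str, str]:
    """Try each provider in order; return (provider_name, response)."""
    errors = []
    for name, provider in providers:
        try:
            return name, provider(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # remember why this one failed
    raise RuntimeError("all providers failed: " + "; ".join(errors))

if __name__ == "__main__":
    route = [("primary", primary_provider), ("backup", backup_provider)]
    print(call_with_fallback(route, "ping"))
```

Returning the provider name alongside the response makes it easy to attribute cost and log which route actually served each request.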

Pros

- Simple and efficient

- Good for production

Cons

- Limited orchestration depth

- Focused on API layer

Platforms / Deployment

Cloud / Local

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- LLM providers

- APIs

Support & Community

Growing adoption

#9 — ZenML

Short description: A pipeline orchestration platform extended for LLM workflows and ML pipelines.

Key Features

- Pipeline orchestration

- Experiment tracking

- ML + LLM workflows

- CI/CD integration

- Modular pipelines

Pros

- Strong for MLOps

- Scalable

Cons

- Not LLM-specific

- Requires setup

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- ML tools

- Cloud platforms

Support & Community

Active ML community

#10 — Portkey

Short description: An LLM gateway and orchestration layer focused on reliability and observability.

Key Features

- Request routing

- Observability tools

- Error handling

- Performance tracking

- API management
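
Observability at the gateway layer largely comes down to recording what happened on every request. The sketch below is an assumption-laden illustration in plain Python, not Portkey's API: `with_metrics` is a hypothetical wrapper that logs latency and outcome for any callable that takes a prompt.

```python
import time
from typing import Callable, Dict, List

def with_metrics(call: Callable[[str], str], log: List[Dict]) -> Callable[[str], str]:
    """Wrap a model call so every request records latency and outcome."""
    def wrapped(prompt: str) -> str:
        start = time.perf_counter()
        status = "error"  # assume failure until the call returns
        try:
            response = call(prompt)
            status = "ok"
            return response
        finally:
            # Runs on success and on failure, so no request goes unrecorded.
            log.append({
                "status": status,
                "latency_s": time.perf_counter() - start,
                "prompt_chars": len(prompt),
            })
    return wrapped

if __name__ == "__main__":
    records: List[Dict] = []
    shout = with_metrics(lambda p: p.upper(), records)  # stub "model"
    print(shout("hello"), records)
```

Because the wrapper does not swallow exceptions, it can be combined with retry or fallback logic placed around it.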

Pros

- Strong monitoring

- Production-ready

Cons

- Limited workflow depth

- Focused on API layer

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

- LLM APIs

- Monitoring tools

Support & Community

Growing enterprise adoption

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| LangChain | General orchestration | Python/JS | Hybrid | Ecosystem | N/A |
| LlamaIndex | RAG systems | Python | Hybrid | Data indexing | N/A |
| Haystack | Enterprise NLP | Python | Hybrid | Pipelines | N/A |
| Semantic Kernel | Microsoft stack | Multi | Hybrid | Plugins | N/A |
| AutoGen | Multi-agent systems | Multi | Hybrid | Collaboration | N/A |
| CrewAI | Agent teams | Multi | Hybrid | Roles | N/A |
| DSPy | Optimization | Python | Local | Efficiency | N/A |
| LiteLLM | API orchestration | Multi | Hybrid | Routing | N/A |
| ZenML | Pipelines | Multi | Hybrid | MLOps | N/A |
| Portkey | Observability | Cloud | Cloud | Monitoring | N/A |

Evaluation & Scoring of LLM Orchestration Frameworks

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| LangChain | 10 | 6 | 10 | 7 | 8 | 9 | 8 | 8.6 |
| LlamaIndex | 9 | 7 | 9 | 7 | 9 | 8 | 8 | 8.4 |
| Haystack | 9 | 6 | 8 | 8 | 8 | 7 | 7 | 8.0 |
| Semantic Kernel | 8 | 7 | 9 | 8 | 8 | 8 | 7 | 8.1 |
| AutoGen | 9 | 6 | 8 | 7 | 8 | 7 | 8 | 7.9 |
| CrewAI | 8 | 7 | 7 | 6 | 7 | 7 | 8 | 7.5 |
| DSPy | 8 | 5 | 7 | 6 | 9 | 6 | 8 | 7.4 |
| LiteLLM | 7 | 9 | 8 | 6 | 8 | 7 | 9 | 7.9 |
| ZenML | 8 | 6 | 8 | 7 | 8 | 7 | 7 | 7.7 |
| Portkey | 7 | 8 | 8 | 7 | 8 | 7 | 8 | 7.8 |

How to interpret scores:

- Scores are relative comparisons

- Higher scores indicate broader capabilities

- Developer frameworks rank high in flexibility

- Lightweight tools rank high in ease and value

- Choose based on your use case
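
For readers who want to re-weight the criteria to match their own priorities, a weighted total is simply a weighted average of the seven column scores. The sketch below assumes a plain weighted sum using the percentages from the scoring table's header; `weighted_total` is a hypothetical helper name. Under that assumption the LangChain row works out to 8.5, slightly below the 8.6 listed, so the published totals may include rounding or adjustments not described here.

```python
# Column weights in percent, copied from the scoring table header.
WEIGHTS = {
    "core": 25, "ease": 15, "integrations": 15, "security": 10,
    "performance": 10, "support": 10, "value": 15,
}

def weighted_total(scores: dict) -> float:
    """Weighted average on the 0-10 scale, rounded to two decimals."""
    assert sum(WEIGHTS.values()) == 100, "weights must add up to 100%"
    total = sum(scores[name] * weight for name, weight in WEIGHTS.items())
    return round(total / 100, 2)

# LangChain row from the scoring table.
langchain = {
    "core": 10, "ease": 6, "integrations": 10, "security": 7,
    "performance": 8, "support": 9, "value": 8,
}

if __name__ == "__main__":
    print(weighted_total(langchain))  # 8.5 under the plain weighted-sum assumption
```

Changing the values in `WEIGHTS` (while keeping them summing to 100) is a quick way to see how the ranking shifts when, say, security matters more than ease of use.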

Which LLM Orchestration Framework Is Right for You?

Solo / Freelancer

- Best: LiteLLM, CrewAI

- Simple and lightweight

SMB

- Best: LlamaIndex, LangChain

- Balanced capabilities

Mid-Market

- Best: AutoGen, Haystack

- Scalable workflows

Enterprise

- Best: Semantic Kernel, ZenML

- Governance and scalability

Budget vs Premium

- Budget: CrewAI, LiteLLM

- Premium: Semantic Kernel, enterprise setups

Feature Depth vs Ease of Use

- Depth: LangChain, AutoGen

- Ease: LiteLLM, CrewAI

Integrations & Scalability

- Strong: LangChain, Semantic Kernel

- Moderate: DSPy

Security & Compliance Needs

- Enterprise frameworks offer better governance

- Open-source tools require custom setup

Frequently Asked Questions (FAQs)

What are LLM orchestration frameworks?

They help connect LLMs with tools, data, and workflows. They manage multi-step logic. They enable production AI systems.

Why not call APIs directly?

Direct calls work for simple tasks. Complex systems need orchestration. Frameworks manage workflows and memory.
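
As an illustration of what managing memory means in practice, the sketch below keeps a rolling window of recent messages and rebuilds the prompt on every call. `ConversationMemory` is a hypothetical class and `echo_llm` a stub; neither comes from any framework listed here.

```python
from collections import deque
from typing import Callable, Deque, Tuple

class ConversationMemory:
    """Keep only the most recent messages so the prompt stays bounded."""

    def __init__(self, max_messages: int = 6) -> None:
        self.messages: Deque[Tuple[str, str]] = deque(maxlen=max_messages)

    def add(self, role: str, text: str) -> None:
        self.messages.append((role, text))

    def as_prompt(self, new_message: str) -> str:
        history = "\n".join(f"{role}: {text}" for role, text in self.messages)
        return f"{history}\nuser: {new_message}".strip()

# Hypothetical stub: reports how many lines of context it received.
def echo_llm(prompt: str) -> str:
    return f"saw {len(prompt.splitlines())} lines"

def chat(memory: ConversationMemory, llm: Callable[[str], str], message: str) -> str:
    """Send the message with history attached, then store both sides."""
    reply = llm(memory.as_prompt(message))
    memory.add("user", message)
    memory.add("assistant", reply)
    return reply

if __name__ == "__main__":
    mem = ConversationMemory(max_messages=2)
    for text in ["hi", "again", "more"]:
        print(chat(mem, echo_llm, text))
```

With direct API calls, this bookkeeping (and the decision about what to drop when the window fills) has to be written and maintained by hand.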

What is RAG?

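Retrieval-Augmented Generation (RAG) retrieves relevant external data and adds it to the prompt before the model answers. Grounding responses this way can improve accuracy. It is a key use case for these frameworks.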
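
The retrieve-then-generate flow can be sketched in a few lines. This toy version ranks documents by word overlap instead of embeddings and uses a stubbed `fake_llm`; the function names and sample documents are hypothetical, and a real system would typically use a vector database for the retrieval step.

```python
from typing import Callable, List

DOCS = [
    "LiteLLM offers routing and fallback across providers.",
    "Haystack uses a pipeline-based architecture.",
    "DSPy focuses on prompt optimization.",
]

def tokenize(text: str) -> set:
    """Lowercase words with surrounding punctuation stripped."""
    return {w.strip(".,?!").lower() for w in text.split()}

def retrieve(query: str, docs: List[str], k: int = 1) -> List[str]:
    """Rank documents by word overlap with the query (toy retriever)."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, context: List[str]) -> str:
    """Place the retrieved passages ahead of the question."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"

# Hypothetical stand-in for a real model call.
def fake_llm(prompt: str) -> str:
    return f"[model output for a {len(prompt)}-character prompt]"

def rag_answer(query: str, docs: List[str],
               llm: Callable[[str], str] = fake_llm, k: int = 1) -> str:
    return llm(build_prompt(query, retrieve(query, docs, k)))

if __name__ == "__main__":
    question = "Which tool does prompt optimization?"
    print(build_prompt(question, retrieve(question, DOCS)))
```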

Which framework is best?

It depends on your needs. LangChain is general-purpose. LlamaIndex is best for data-heavy apps.

Are these tools free?

Many are open-source. Some offer paid enterprise features. Pricing varies.

Do I need coding skills?

Yes, most frameworks require development knowledge. Some offer low-code options.

Can I combine frameworks?

Yes, many teams use multiple frameworks. Hybrid setups are common.

Are they production-ready?

Some are production-ready. Others need customization. Testing is essential.

What is multi-agent orchestration?

It involves multiple AI agents working together. Each agent performs a task. They collaborate to solve problems.
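
The simplest form of this collaboration is a sequential handoff, where each agent works on the previous agent's output. The sketch below assumes three hypothetical roles with stubbed behaviors; real agents would call an LLM with a role-specific prompt, and frameworks add richer patterns such as delegation and back-and-forth conversation.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class Agent:
    role: str
    act: Callable[[str], str]  # turns incoming work into this agent's output

# Hypothetical behaviors standing in for role-prompted model calls.
def research(task: str) -> str:
    return f"notes on '{task}'"

def write(notes: str) -> str:
    return f"draft built from {notes}"

def review(draft: str) -> str:
    return f"approved: {draft}"

def run_team(agents: List[Agent], task: str) -> List[str]:
    """Pass work from agent to agent and keep a transcript of each handoff."""
    transcript = []
    work = task
    for agent in agents:
        work = agent.act(work)
        transcript.append(f"{agent.role}: {work}")
    return transcript

if __name__ == "__main__":
    team = [Agent("researcher", research), Agent("writer", write),
            Agent("reviewer", review)]
    for line in run_team(team, "RAG"):
        print(line)
```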

How do I choose the right framework?

Define your use case first. Evaluate features and complexity. Test with real workloads.

Conclusion

LLM orchestration frameworks are the backbone of modern AI application development, enabling developers to transform standalone language models into powerful, real-world systems. From flexible ecosystems like LangChain to data-focused tools like LlamaIndex and production-ready frameworks like Haystack, each platform serves a specific purpose in the AI stack. The key challenge is not choosing the most popular tool, but selecting the one that aligns with your application architecture, data requirements, and scalability goals. Many teams now adopt hybrid approaches, combining multiple frameworks to maximize performance and flexibility.

While these frameworks significantly accelerate development, they can also introduce complexity, making it important to balance abstraction with control. As AI systems evolve toward multi-agent and autonomous workflows, orchestration layers will become even more critical. The best next step is to shortlist a few frameworks, build a small prototype, and evaluate how well they integrate into your workflow. The right choice will ultimately depend on your use case, technical expertise, and long-term scalability needs.