Introduction

RAG (Retrieval-Augmented Generation) tooling refers to the frameworks, vector databases, and orchestration platforms used to build AI systems that combine information retrieval with generative models. Instead of relying only on knowledge learned during training, RAG systems fetch relevant external data and inject it into the prompt before generating a response.

This approach can improve accuracy, reduce hallucinations, and let AI work with real-time or proprietary data. As AI moves into enterprise production, RAG has become a foundational architecture for building reliable, domain-specific AI applications.
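
The retrieve-then-generate flow can be sketched in a few lines of plain Python. This is a minimal illustration, not how any of the tools below implement it: the corpus, the token-overlap scoring, and the names `retrieve` and `build_prompt` are all assumptions made for the example, and a real system would use embeddings or a search engine for retrieval and then send the prompt to an LLM.

```python
import re
from collections import Counter

# Toy corpus standing in for an external knowledge base (hypothetical content).
DOCUMENTS = [
    "Refunds are processed within 14 days of the return request.",
    "Our support desk is open Monday to Friday, 9am to 5pm.",
    "Premium plans include priority support and single sign-on.",
]

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def overlap_score(query: str, document: str) -> int:
    """Count the tokens shared by the query and the document."""
    query_counts = Counter(tokenize(query))
    doc_counts = Counter(tokenize(document))
    return sum((query_counts & doc_counts).values())

def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents with the highest token overlap with the query."""
    ranked = sorted(documents, key=lambda d: overlap_score(query, d), reverse=True)
    return ranked[:k]

def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Inject the retrieved passages into the prompt ahead of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )

# The resulting string is what would be sent to the generative model.
prompt = build_prompt("How long do refunds take?", DOCUMENTS, k=1)
print(prompt)
```

Swapping `overlap_score` for a stronger relevance function changes retrieval quality without touching the prompt-building step, which is why retrieval and generation are usually evaluated separately.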

Common use cases include:

- AI chatbots powered by internal knowledge bases

- Enterprise search and knowledge assistants

- Customer support automation with documentation grounding

- Code and technical documentation assistants

- Data-driven decision support systems

Key evaluation criteria:

- Retrieval quality and search performance

- LLM integration flexibility

- Vector database compatibility

- Scalability and latency

- Ease of pipeline orchestration

- Monitoring and evaluation capabilities

- Security and access control

- Developer experience and ecosystem

Best for: AI engineers, data teams, enterprises building knowledge-driven AI systems, SaaS companies, and developers building LLM applications.

Not ideal for: Simple AI use cases with no dependency on external data, or teams that do not need contextual grounding.

Key Trends in RAG Tooling

- Growth of vector databases as core infrastructure

- Adoption of hybrid search (semantic + keyword)

- Rise of agent-based and multi-step RAG pipelines

- Integration with real-time data sources and APIs

- Increased focus on retrieval quality and re-ranking

- Emergence of RAG evaluation frameworks

- Expansion of low-code RAG builders

- Stronger security and access control layers

- Integration with enterprise data systems

- Movement toward end-to-end RAG platforms
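
To make the hybrid search trend above more concrete, here is a small sketch of reciprocal rank fusion (RRF), one common way to merge a keyword ranking and a semantic ranking into a single list. The document IDs and the two input rankings are made up for illustration, and individual tools may fuse results differently.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k = 60 is the constant commonly used for RRF.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by ID so output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))

# Hypothetical result lists from a keyword retriever and a vector retriever.
keyword_hits = ["doc_a", "doc_b", "doc_c"]
semantic_hits = ["doc_b", "doc_d", "doc_a"]

fused = reciprocal_rank_fusion([keyword_hits, semantic_hits])
print(fused)
```

Because RRF only looks at ranks, it avoids having to normalize keyword scores and vector similarities onto the same scale.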

How We Selected These Tools (Methodology)

- Evaluated industry adoption and developer popularity

- Assessed retrieval and generation capabilities

- Reviewed integration with LLM ecosystems

- Considered performance and scalability

- Included frameworks, vector databases, and search tools

- Balanced open-source and enterprise tools

- Focused on real-world production readiness

- Analyzed community and ecosystem strength

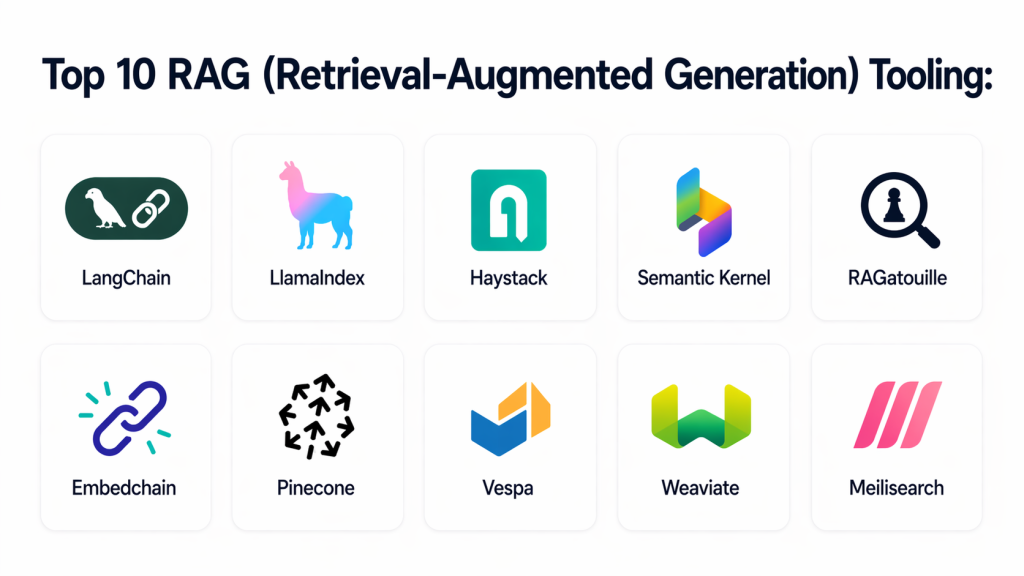

Top 10 RAG Tooling Platforms

#1 — LangChain

Short description: A leading framework for building RAG pipelines with chaining, agents, and integrations. Widely used by developers for building production-grade AI applications.

Key Features

- Prompt chaining and orchestration

- Built-in RAG pipeline components

- Memory and context management

- Multi-model support

- Agent workflows

- Debugging tools

Pros

- Highly flexible

- Massive ecosystem

Cons

- Complex for beginners

- Requires coding

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Varies / Not publicly stated

Integrations & Ecosystem

Supports extensive integrations across AI and data stack.

- OpenAI

- Hugging Face

- Vector DBs

- APIs

Support & Community

Very large open-source community.

#2 — LlamaIndex

Short description: A powerful data framework designed specifically for building RAG applications with structured and unstructured data.

Key Features

- Data connectors

- Indexing and retrieval pipelines

- Query engines

- Context management

- Multi-source integration

Pros

- Strong data handling

- Optimized for RAG

Cons

- Developer-focused

- Setup complexity

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Varies

Integrations & Ecosystem

Works with data sources and vector stores.

- Databases

- APIs

- ML tools

Support & Community

Growing open-source ecosystem.

#3 — Haystack

Short description: An open-source NLP framework designed for building search and RAG pipelines.

Key Features

- Document retrieval pipelines

- Question answering systems

- Multi-model support

- Pipeline orchestration

- Evaluation tools

Pros

- Open-source flexibility

- Strong search capabilities

Cons

- Requires setup effort

- Less beginner-friendly

Platforms / Deployment

Self-hosted / Cloud

Security & Compliance

Varies

Integrations & Ecosystem

Supports search engines and ML tools.

Support & Community

Active developer community.

#4 — Semantic Kernel

Short description: An open-source SDK from Microsoft for integrating AI models into applications, enabling RAG workflows with structured orchestration.

Key Features

- AI orchestration

- Plugin architecture

- Memory integration

- Prompt templates

- Multi-model support

Pros

- Strong enterprise integration

- Flexible design

Cons

- Requires coding

- Limited UI

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Integrates with enterprise applications and APIs.

Support & Community

Backed by Microsoft, with an open-source community.

#5 — RAGatouille

Short description: A specialized library for using late-interaction retrieval models such as ColBERT in RAG pipelines.

Key Features

- Dense retrieval models

- Ranking optimization

- Pipeline integration

- Performance tuning

- Open-source flexibility

Pros

- High retrieval accuracy

- Lightweight

Cons

- Niche tool

- Limited UI

Platforms / Deployment

Self-hosted

Security & Compliance

Varies

Integrations & Ecosystem

Works with ML pipelines and frameworks.

Support & Community

Smaller but focused community.

#6 — Embedchain

Short description: A developer-friendly framework for quickly building RAG applications with minimal setup.

Key Features

- Simple API

- Data ingestion tools

- Embedding generation

- Retrieval pipelines

- Quick deployment

Pros

- Easy to use

- Fast setup

Cons

- Limited advanced features

- Less scalable

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Supports APIs and data sources.

Support & Community

Growing community.

#7 — Pinecone

Short description: A leading vector database platform designed for fast and scalable retrieval in RAG systems.

Key Features

- Vector indexing

- High-speed similarity search

- Scalable architecture

- Low latency retrieval

- Managed infrastructure

Pros

- High performance

- Fully managed

Cons

- Cost considerations

- Vendor dependency

Platforms / Deployment

Cloud

Security & Compliance

Enterprise-grade features; details vary

Integrations & Ecosystem

Works with RAG frameworks and ML tools.

Support & Community

Strong enterprise support.

#8 — Vespa

Short description: A search and analytics engine optimized for large-scale RAG and real-time retrieval.

Key Features

- Real-time search

- Hybrid retrieval

- Scalability

- Machine learning integration

- Low latency

Pros

- High scalability

- Strong search performance

Cons

- Complex setup

- Requires expertise

Platforms / Deployment

Self-hosted / Cloud

Security & Compliance

Varies

Integrations & Ecosystem

Supports enterprise search systems.

Support & Community

Active open-source community.

#9 — Weaviate

Short description: An open-source vector database designed for semantic search and RAG pipelines.

Key Features

- Vector search

- GraphQL API

- Hybrid search

- Data indexing

- Scalable architecture

Pros

- Open-source

- Flexible

Cons

- Requires setup

- Performance tuning needed

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Varies

Integrations & Ecosystem

Works with ML and AI tools.

Support & Community

Strong community support.

#10 — Meilisearch

Short description: A fast search engine optimized for developer-friendly RAG implementations.

Key Features

- Fast search indexing

- Easy API integration

- Lightweight setup

- Typo tolerance

- Real-time search

Pros

- Easy to use

- Fast performance

Cons

- Limited advanced AI features

- Not a full RAG framework

Platforms / Deployment

Cloud / Self-hosted

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Supports APIs and search integrations.

Support & Community

Growing developer community.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| LangChain | Developers | Multi-platform | Hybrid | Pipeline orchestration | N/A |
| LlamaIndex | Data-driven apps | Multi-platform | Hybrid | Data indexing | N/A |
| Haystack | Search pipelines | Multi-platform | Hybrid | NLP pipelines | N/A |
| Semantic Kernel | Enterprise apps | Multi-platform | Hybrid | AI orchestration | N/A |
| RAGatouille | Retrieval optimization | Multi-platform | Self-hosted | Ranking models | N/A |
| Embedchain | Quick builds | Multi-platform | Hybrid | Simple API | N/A |
| Pinecone | Vector DB | Web | Cloud | High-speed retrieval | N/A |
| Vespa | Large-scale search | Multi-platform | Hybrid | Real-time search | N/A |
| Weaviate | Open-source vector DB | Multi-platform | Hybrid | GraphQL API | N/A |
| Meilisearch | Lightweight search | Multi-platform | Hybrid | Fast indexing | N/A |

Evaluation & Scoring of RAG Tooling

| Tool Name | Core Features | Ease of Use | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| LangChain | 9 | 6 | 9 | 6 | 8 | 8 | 7 | 7.9 |
| LlamaIndex | 9 | 6 | 8 | 6 | 8 | 7 | 7 | 7.7 |
| Haystack | 8 | 6 | 7 | 6 | 7 | 7 | 7 | 7.1 |
| Semantic Kernel | 8 | 7 | 8 | 7 | 8 | 7 | 7 | 7.6 |
| RAGatouille | 7 | 6 | 6 | 6 | 8 | 6 | 7 | 6.9 |
| Embedchain | 7 | 9 | 6 | 5 | 7 | 6 | 8 | 7.2 |
| Pinecone | 9 | 8 | 8 | 7 | 9 | 8 | 6 | 8.2 |
| Vespa | 8 | 6 | 7 | 7 | 9 | 7 | 6 | 7.5 |
| Weaviate | 8 | 7 | 7 | 6 | 8 | 7 | 7 | 7.5 |
| Meilisearch | 7 | 9 | 6 | 5 | 8 | 6 | 8 | 7.3 |

How to interpret scores:

These scores are comparative and reflect strengths across features, usability, and performance. Higher scores indicate better overall capability, but the ideal tool depends on your use case. For example, developers may prioritize flexibility, while enterprises may focus on scalability and security.
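
If you want to re-weight the criteria for your own priorities, a weighted total can be computed as in the sketch below. The weights in `WEIGHTS` are illustrative assumptions only, not the ones behind the Weighted Total column, so the output will differ from the table. The per-criterion scores are taken from the Pinecone row.

```python
# Hypothetical weights chosen for illustration; adjust them to your priorities.
WEIGHTS = {
    "core": 0.25, "ease": 0.15, "integrations": 0.15, "security": 0.10,
    "performance": 0.10, "support": 0.10, "value": 0.15,
}

def weighted_total(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Return the weighted average of per-criterion scores, rounded to 2 places."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    weighted_sum = sum(scores[name] * weights[name] for name in weights)
    return round(weighted_sum / total_weight, 2)

# Per-criterion scores copied from the Pinecone row of the table.
pinecone = {
    "core": 9, "ease": 8, "integrations": 8, "security": 7,
    "performance": 9, "support": 8, "value": 6,
}

print(weighted_total(pinecone, WEIGHTS))
```

A security-focused team could, for example, raise the `security` weight and lower `ease`, then re-rank the tools with the same function.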

Which RAG Tool Is Right for You?

Solo / Freelancer

Embedchain or Meilisearch for quick setup and simplicity.

SMB

Weaviate and LlamaIndex offer a balance of power and usability.

Mid-Market

Semantic Kernel and Haystack provide structured pipelines.

Enterprise

LangChain, Pinecone, and Vespa are ideal for large-scale deployments.

Budget vs Premium

Open-source tools reduce cost, while managed services offer convenience.

Feature Depth vs Ease of Use

LangChain offers depth; Embedchain offers simplicity.

Integrations & Scalability

Pinecone and LangChain excel in integration-heavy systems.

Security & Compliance Needs

Enterprises should prioritize tools with strong access control and monitoring.
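
One way access control shows up in a RAG pipeline is as a filter on retrieved chunks before they reach the prompt. The sketch below assumes a hypothetical `Chunk` schema in which each passage carries the groups allowed to read it. Many vector databases offer metadata filtering that can apply a similar rule at query time, but the field names here are made up.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Chunk:
    """A retrieved passage plus the groups allowed to read it (hypothetical schema)."""
    text: str
    allowed_groups: frozenset[str]

def filter_by_access(chunks: list[Chunk], user_groups: set[str]) -> list[Chunk]:
    """Keep only the chunks that share at least one group with the user."""
    return [chunk for chunk in chunks if chunk.allowed_groups & user_groups]

# Hypothetical retrieval results with per-chunk permissions.
retrieved = [
    Chunk("Q3 revenue forecast", frozenset({"finance"})),
    Chunk("Employee handbook", frozenset({"finance", "engineering", "support"})),
    Chunk("Incident runbook", frozenset({"engineering"})),
]

visible = filter_by_access(retrieved, {"support"})
print([chunk.text for chunk in visible])
```

Filtering before prompt construction matters because anything placed in the context can surface in the generated answer.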

Frequently Asked Questions (FAQs)

1. What is RAG in AI?

RAG is a technique that combines retrieval systems with generative AI to produce more accurate and context-aware outputs using external data.

2. Why is RAG important?

It improves AI accuracy and reduces hallucinations by grounding responses in real, relevant information sources.

3. What tools are used in RAG pipelines?

RAG pipelines typically include frameworks, vector databases, and retrieval systems working together.

4. Are RAG tools only for developers?

Mostly yes, but some tools offer low-code interfaces for easier adoption.

5. Can RAG work with private data?

Yes, RAG is commonly used to integrate internal knowledge bases and enterprise data.

6. How complex is RAG implementation?

It can range from simple setups to complex enterprise architectures depending on requirements.

7. Do RAG systems require vector databases?

Not strictly. Keyword or hybrid search engines can also act as the retriever, but most implementations use vector databases for efficient semantic retrieval.
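
To show what a vector database does at query time, here is a brute-force similarity search in plain Python. It is only a sketch: the three-dimensional vectors are made-up stand-ins for real embeddings, and production systems typically replace the exhaustive scan with approximate nearest-neighbor indexes to keep latency low.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k(query: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Score every stored vector against the query and return the k most similar IDs."""
    ranked = sorted(
        index,
        key=lambda doc_id: cosine_similarity(query, index[doc_id]),
        reverse=True,
    )
    return ranked[:k]

# Hypothetical 3-dimensional embeddings; real models produce far more dimensions.
INDEX = {
    "pricing": [0.9, 0.1, 0.0],
    "refunds": [0.1, 0.9, 0.1],
    "security": [0.0, 0.2, 0.9],
}

print(top_k([0.2, 0.8, 0.0], INDEX, k=2))
```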

8. Are RAG systems scalable?

Yes, with the right infrastructure, they can scale to enterprise-level workloads.

9. What are common challenges?

Data quality, retrieval accuracy, and latency are common challenges.
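
Retrieval accuracy is easier to manage once it is measured. The sketch below computes two widely used retrieval metrics, recall@k and mean reciprocal rank (MRR), over a small hand-labeled test set. The document IDs and relevance labels are invented for the example, and dedicated evaluation frameworks cover many more metrics.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)

def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0

def evaluate(runs: list[tuple[list[str], set[str]]], k: int) -> dict[str, float]:
    """Average recall@k and reciprocal rank over (retrieved, relevant) pairs."""
    if not runs:
        raise ValueError("at least one query is required")
    n = len(runs)
    return {
        "recall@k": sum(recall_at_k(ret, rel, k) for ret, rel in runs) / n,
        "mrr": sum(reciprocal_rank(ret, rel) for ret, rel in runs) / n,
    }

# Hypothetical test set: ranked results per query, paired with the relevant IDs.
runs = [
    (["d1", "d2", "d3"], {"d2"}),
    (["d4", "d5", "d6"], {"d6", "d7"}),
]

print(evaluate(runs, k=2))
```

Tracking these numbers while changing chunking, embeddings, or re-ranking makes it clearer whether a change actually helped retrieval.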

10. Are there alternatives to RAG?

Alternatives include fine-tuning models, but RAG is often more flexible and cost-efficient, especially when the underlying data changes frequently.

Conclusion

RAG tooling has become a foundational component of modern AI systems, enabling organizations to build more accurate, reliable, and context-aware applications. By combining retrieval mechanisms with generative models, these tools address one of the biggest limitations of AI: the lack of up-to-date and domain-specific knowledge.

There is no universal “best” tool. Developers may prefer frameworks like LangChain or LlamaIndex for flexibility, while enterprises may rely on Pinecone or Vespa for scalability and performance. Smaller teams can benefit from lightweight tools like Embedchain or Meilisearch.

Choosing the right tool depends on your data complexity, scale, and integration needs. Focus on tools that align with your architecture and long-term goals. Start by shortlisting a few tools, building a prototype, and testing retrieval quality. Validate performance, scalability, and integration before moving to production. This approach helps ensure your RAG system delivers real value.