Introduction

AI Safety & Evaluation Tools are platforms that help organizations test, monitor, and improve the reliability, fairness, and safety of AI systems—especially large language models and generative AI applications. In simple terms, these tools answer a critical question: Is your AI behaving correctly, safely, and consistently in real-world scenarios?

As AI systems move from experimentation to production, evaluation is no longer optional. Teams must detect hallucinations, bias, security risks, and performance degradation before users experience them. Modern tools automate testing, scoring, and monitoring so that teams can reach and maintain production-grade reliability.

Common use cases include:

- Testing AI models for hallucinations and factual accuracy

- Monitoring production AI systems for drift and anomalies

- Evaluating prompt performance and output quality

- Ensuring compliance with safety and ethical standards

- Benchmarking multiple AI models
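Automated testing of output quality usually comes down to running a fixed set of test prompts through a model and scoring each answer against a reference. The Python sketch below shows that loop using token-overlap F1, a simple metric of the kind used in extractive question-answering benchmarks. The test cases and the two stand-in models are hypothetical, and commercial tools use richer scorers than this.

```python
import re
from collections import Counter
from typing import Callable, Dict, List

def tokens(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def token_f1(prediction: str, reference: str) -> float:
    """Token-overlap F1 between a model output and a reference answer."""
    pred, ref = tokens(prediction), tokens(reference)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)

def evaluate(model: Callable[[str], str], cases: List[Dict[str, str]],
             threshold: float = 0.5) -> Dict[str, float]:
    """Run every test case through `model`; report mean F1 and the share of passing cases."""
    scores = [token_f1(model(c["prompt"]), c["reference"]) for c in cases]
    return {
        "mean_f1": sum(scores) / len(scores),
        "pass_rate": sum(s >= threshold for s in scores) / len(scores),
    }

# Hypothetical test set and two stand-in "models" to benchmark against each other.
CASES = [
    {"prompt": "Capital of France?", "reference": "Paris"},
    {"prompt": "Largest planet?", "reference": "Jupiter"},
]

def model_a(prompt: str) -> str:
    return {"Capital of France?": "Paris", "Largest planet?": "Saturn"}[prompt]

def model_b(prompt: str) -> str:
    return {"Capital of France?": "It is Paris", "Largest planet?": "Jupiter"}[prompt]

for name, model in [("model_a", model_a), ("model_b", model_b)]:
    print(name, evaluate(model, CASES))
```

Running the same cases through several models is the simplest form of benchmarking: here `model_b` wins on pass rate even though its first answer is padded with extra words.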

Key evaluation criteria:

- Automated evaluation and scoring systems

- Safety and risk detection capabilities

- Model monitoring and observability

- Integration with ML pipelines

- Scalability and performance tracking

- Ease of experimentation and testing

- Security and compliance features

- Support for multi-model environments

Best for: AI engineers, ML teams, product managers, QA teams, and enterprises deploying AI at scale.

Not ideal for: Teams with minimal AI usage or simple experimentation workflows that don’t require structured evaluation.

Key Trends in AI Safety & Evaluation Tools

- Rapid adoption of automated AI evaluation frameworks

- Integration of hallucination detection and factuality scoring

- Growth of real-time monitoring in production environments

- Emergence of agent-based evaluation systems

- Increased focus on safety benchmarking and certification

- Use of AI-assisted evaluation and scoring models

- Integration with CI/CD pipelines for AI deployments

- Expansion of multi-step and multi-agent testing environments

- Strong emphasis on data quality and drift detection

- Rise of end-to-end AI lifecycle evaluation platforms
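AI-assisted evaluation (often called LLM-as-a-judge) asks a second model to grade an output against a rubric and return a structured score. A minimal sketch, assuming the judge is reachable through some function that takes a prompt string and returns text; `fake_judge` is a hypothetical stand-in for that call, not any vendor's API. The important part is parsing defensively: a reply with no score, or an out-of-range score, yields `None` instead of a guessed number.

```python
import re
from typing import Callable, Optional

JUDGE_TEMPLATE = (
    "You are grading an AI assistant's answer.\n"
    "Question: {question}\n"
    "Answer: {answer}\n"
    "Rate factual accuracy from 1 (wrong) to 5 (fully correct).\n"
    "Reply in the form 'SCORE: <n>'."
)

def parse_score(reply: str, low: int = 1, high: int = 5) -> Optional[int]:
    """Extract 'SCORE: n' from the judge's reply; return None if missing or out of range."""
    match = re.search(r"SCORE:\s*(\d+)", reply)
    if match is None:
        return None
    score = int(match.group(1))
    return score if low <= score <= high else None

def judge(question: str, answer: str, call_judge: Callable[[str], str]) -> Optional[int]:
    """Fill in the rubric prompt, send it to the judge model, and parse its score."""
    prompt = JUDGE_TEMPLATE.format(question=question, answer=answer)
    return parse_score(call_judge(prompt))

# Hypothetical stand-in for a real judge-model API call.
def fake_judge(prompt: str) -> str:
    return "The answer is correct. SCORE: 5" if "Paris" in prompt else "SCORE: 1"

print(judge("Capital of France?", "Paris", fake_judge))  # 5
print(judge("Capital of France?", "Lyon", fake_judge))   # 1
```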

How We Selected These Tools (Methodology)

- Evaluated industry adoption and developer usage trends

- Assessed evaluation depth and safety capabilities

- Reviewed performance monitoring and observability features

- Considered integration with AI/ML ecosystems

- Included both enterprise and developer-first tools

- Analyzed scalability and real-world deployment readiness

- Focused on tools supporting modern generative AI workflows

- Balanced open-source and commercial platforms

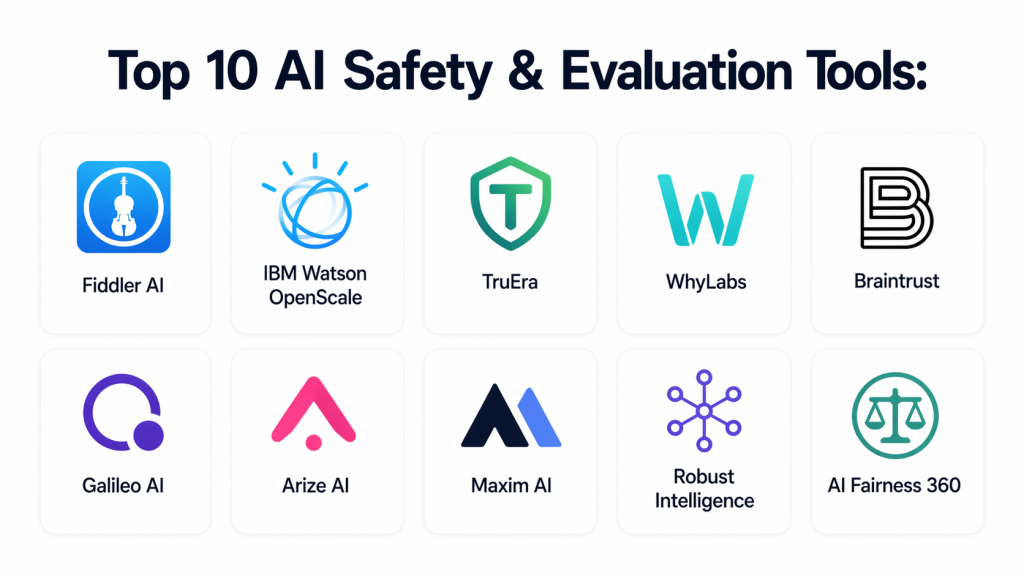

Top 10 AI Safety & Evaluation Tools

#1 — Fiddler AI

Short description: A leading platform for monitoring, explainability, and safety evaluation of AI models in production environments.

Key Features

- Explainable AI dashboards

- Bias detection and fairness monitoring

- Real-time model monitoring

- Drift detection

- Performance analytics

- Alerting system
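Explainability dashboards summarize how much each input feature pushed a prediction up or down. The sketch below uses occlusion: replace one feature at a time with a baseline value and measure how the score changes. It illustrates the idea under assumed names (`risk_score` and its weights are hypothetical) and is not Fiddler AI's attribution method or API. For a linear model, each attribution equals the feature's weight times its distance from the baseline, which makes the output easy to check.

```python
from typing import Callable, Dict

def occlusion_attribution(
    predict: Callable[[Dict[str, float]], float],
    row: Dict[str, float],
    baseline: Dict[str, float],
) -> Dict[str, float]:
    """Attribute a prediction to features by swapping each one for a baseline value.

    A feature's attribution is how much the score changes when that feature
    alone is replaced by its baseline (for example, the training-set mean).
    """
    full_score = predict(row)
    attributions = {}
    for feature in row:
        perturbed = dict(row)
        perturbed[feature] = baseline[feature]
        attributions[feature] = full_score - predict(perturbed)
    return attributions

# Hypothetical linear credit-risk score, used only for illustration.
def risk_score(x: Dict[str, float]) -> float:
    return 0.5 * x["debt_ratio"] + 0.2 * x["late_payments"] - 0.1 * x["years_employed"]

applicant = {"debt_ratio": 0.8, "late_payments": 3.0, "years_employed": 2.0}
means = {"debt_ratio": 0.4, "late_payments": 1.0, "years_employed": 5.0}
print(occlusion_attribution(risk_score, applicant, means))
```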

Pros

- Strong enterprise-grade monitoring

- Advanced explainability features

Cons

- Pricing not transparent

- Requires onboarding effort

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Integrates with ML pipelines and enterprise tools.

- APIs

- Data platforms

- ML frameworks

Support & Community

Enterprise-level support and documentation.

#2 — IBM Watson OpenScale

Short description: A comprehensive platform for monitoring AI models, ensuring fairness, and maintaining regulatory compliance.

Key Features

- Bias detection

- Explainability insights

- Model performance monitoring

- Governance workflows

- Automated alerts
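Automated alerts in monitoring platforms generally compare a rolling metric against a threshold. A minimal sketch, assuming a ground-truth label eventually arrives for each prediction; the class name, window size, and threshold are hypothetical and do not reflect IBM Watson OpenScale's configuration.

```python
from collections import deque

class RollingAccuracyAlert:
    """Signal an alert when accuracy over the last `window` labelled predictions
    falls below `threshold`."""

    def __init__(self, window: int = 100, threshold: float = 0.9):
        self.results = deque(maxlen=window)  # oldest outcome drops off automatically
        self.threshold = threshold

    def record(self, prediction, actual) -> bool:
        """Log one outcome; return True if an alert should fire."""
        self.results.append(prediction == actual)
        if len(self.results) < self.results.maxlen:
            return False  # wait for a full window before judging
        accuracy = sum(self.results) / len(self.results)
        return accuracy < self.threshold

monitor = RollingAccuracyAlert(window=4, threshold=0.75)
stream = [("a", "a"), ("b", "b"), ("a", "b"), ("b", "b"), ("a", "b")]
for pred, actual in stream:
    if monitor.record(pred, actual):
        print("ALERT: rolling accuracy below threshold")
```

Waiting for a full window avoids firing on the first few predictions, when a single error swings accuracy sharply.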

Pros

- Strong compliance features

- Enterprise-ready

Cons

- Complex setup

- Higher cost

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

Supports enterprise-grade security; details not publicly stated

Integrations & Ecosystem

Works with enterprise AI systems and cloud platforms.

- IBM Cloud

- APIs

Support & Community

Strong enterprise support.

#3 — TruEra

Short description: A platform focused on model explainability, evaluation, and improving model quality.

Key Features

- Model explainability

- Bias detection

- Performance evaluation

- Debugging tools

- Governance insights
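Model debugging often starts with segment analysis: computing a metric per data slice to find where a model underperforms relative to its overall average. A hedged sketch in plain Python; the `(segment, prediction, label)` record format, the region segments, and the 0.1 gap threshold are assumptions for illustration, not TruEra's API.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Record = Tuple[str, int, int]  # (segment, prediction, label)

def accuracy_by_segment(records: List[Record]) -> Dict[str, float]:
    """Group records by segment and compute accuracy within each one."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for segment, prediction, label in records:
        total[segment] += 1
        correct[segment] += int(prediction == label)
    return {seg: correct[seg] / total[seg] for seg in total}

def weakest_segments(records: List[Record], min_gap: float = 0.1) -> List[str]:
    """Segments whose accuracy trails overall accuracy by at least `min_gap`."""
    overall = sum(int(p == y) for _, p, y in records) / len(records)
    per_segment = accuracy_by_segment(records)
    return sorted(s for s, acc in per_segment.items() if overall - acc >= min_gap)

# Hypothetical predictions tagged with a customer-region segment.
records = [
    ("north", 1, 1), ("north", 0, 0), ("north", 1, 1), ("north", 0, 0),
    ("south", 1, 0), ("south", 0, 0), ("south", 1, 0), ("south", 1, 1),
]
print(accuracy_by_segment(records))  # {'north': 1.0, 'south': 0.5}
print(weakest_segments(records))     # ['south']
```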

Pros

- Strong model diagnostics

- Developer-friendly

Cons

- Limited automation

- Requires expertise

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Supports ML frameworks and APIs.

Support & Community

Growing enterprise adoption.

#4 — WhyLabs

Short description: A data observability and AI monitoring platform focused on detecting anomalies and ensuring data quality.

Key Features

- Data monitoring

- Drift detection

- Performance tracking

- Alerting tools

- Observability dashboards
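Drift detection compares the distribution of a feature in production against a baseline. One widely used statistic is the Population Stability Index (PSI), computed over shared bins as the sum of (actual share - expected share) × ln(actual share / expected share). A commonly quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift. The sketch below is a generic PSI implementation with hypothetical data and bin cut points; it does not reproduce WhyLabs' own drift metrics.

```python
import bisect
import math
from typing import List, Sequence

def bin_fractions(values: Sequence[float], cuts: Sequence[float]) -> List[float]:
    """Share of values per bin; sorted `cuts` define len(cuts) + 1 bins covering all reals."""
    counts = [0] * (len(cuts) + 1)
    for v in values:
        counts[bisect.bisect_right(cuts, v)] += 1
    return [c / len(values) for c in counts]

def psi(baseline: Sequence[float], current: Sequence[float],
        cuts: Sequence[float], eps: float = 1e-4) -> float:
    """Population Stability Index; `eps` replaces empty-bin shares to avoid log(0)."""
    expected = bin_fractions(baseline, cuts)
    actual = bin_fractions(current, cuts)
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total

# Hypothetical feature values from training data and from recent production traffic.
baseline = [1, 2, 3, 4, 5, 6, 7, 8]
current = [5, 6, 7, 8, 9, 10, 11, 12]
cuts = [3, 6, 9]

score = psi(baseline, current, cuts)
print(f"PSI = {score:.2f}", "drift" if score > 0.25 else "stable")
```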

Pros

- Easy integration

- Strong data insights

Cons

- Limited governance features

- Focused more on monitoring

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Works with data pipelines and ML tools.

- APIs

- Data systems

Support & Community

Active community and support.

#5 — Braintrust

Short description: A modern AI evaluation platform designed for testing, scoring, and improving AI systems in production.

Key Features

- Automated evaluation scoring

- CI/CD integration

- Regression testing

- Dataset generation from production

- Multi-turn evaluation
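Regression testing in CI/CD typically means re-running an evaluation suite on every change and failing the build if scores drop. The sketch below compares per-case scores from a baseline run with the current run; the case IDs, scores, and 0.05 tolerance are hypothetical, and this is a generic pattern rather than Braintrust's SDK.

```python
from typing import Dict, List

def find_regressions(baseline: Dict[str, float], current: Dict[str, float],
                     tolerance: float = 0.05) -> List[str]:
    """IDs of cases whose score fell by more than `tolerance`; missing cases also count."""
    regressions = []
    for case_id, old_score in baseline.items():
        new_score = current.get(case_id)
        if new_score is None or old_score - new_score > tolerance:
            regressions.append(case_id)
    return sorted(regressions)

def ci_gate(baseline: Dict[str, float], current: Dict[str, float],
            tolerance: float = 0.05) -> int:
    """Report regressions and return an exit code: 0 = pass, 1 = fail."""
    regressions = find_regressions(baseline, current, tolerance)
    for case_id in regressions:
        print(f"REGRESSION {case_id}: {baseline[case_id]} -> {current.get(case_id)}")
    return 1 if regressions else 0

# Hypothetical per-case scores from the last release and from this commit.
baseline = {"refund_policy": 0.90, "greeting": 1.00, "order_status": 0.80}
current = {"refund_policy": 0.70, "greeting": 1.00, "order_status": 0.82}
exit_code = ci_gate(baseline, current)  # reports refund_policy and returns 1
```

In a CI job, pass the return value to `sys.exit()` so that a nonzero code fails the pipeline. Treating a missing case as a regression keeps a silently deleted test from hiding a failure.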

Pros

- Strong evaluation capabilities

- Developer-friendly

Cons

- Requires technical setup

- Limited UI for non-technical users

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Integrates with AI development workflows.

Support & Community

Growing developer community.

#6 — Galileo AI

Short description: A platform specializing in evaluating generative AI outputs such as hallucinations and factual correctness.

Key Features

- Hallucination detection

- Evaluation metrics

- Model monitoring

- Dataset management

- Performance analytics
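Hallucination detection for retrieval-based applications often checks whether each claim in an answer is supported by the retrieved context. The sketch below is a deliberately crude lexical version, assuming a sentence counts as supported when most of its content words appear in the context. The stopword list and the 0.6 threshold are arbitrary choices for illustration, and nothing here reflects Galileo's metrics.

```python
import re
from typing import List, Set

STOPWORDS = {"the", "a", "an", "is", "are", "was", "of", "in", "on", "and", "to", "it"}

def content_words(text: str) -> Set[str]:
    """Lowercased alphanumeric tokens with common function words removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}

def unsupported_sentences(answer: str, context: str, min_support: float = 0.6) -> List[str]:
    """Flag answer sentences whose content words are mostly absent from the context."""
    context_words = content_words(context)
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        words = content_words(sentence)
        if not words:
            continue  # nothing checkable in this sentence
        support = len(words & context_words) / len(words)
        if support < min_support:
            flagged.append(sentence)
    return flagged

context = "The Eiffel Tower is in Paris. It was completed in 1889."
answer = "The Eiffel Tower was completed in 1889. It is 500 meters tall and made of gold."
print(unsupported_sentences(answer, context))
# ['It is 500 meters tall and made of gold.']
```

A lexical check like this misses paraphrases and negation, so treat it as a smoke test rather than a factuality metric.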

Pros

- Strong generative AI evaluation

- Advanced scoring systems

Cons

- Limited beginner support

- Enterprise-focused

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Works with LLM APIs and ML tools.

Support & Community

Enterprise support model.

#7 — Arize AI

Short description: A machine learning observability platform with strong evaluation and monitoring capabilities.

Key Features

- Model monitoring

- Drift detection

- Performance tracking

- Data analysis tools

- Visualization dashboards

Pros

- Scalable

- Strong observability

Cons

- Learning curve

- Pricing varies

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Integrates with ML pipelines and data tools.

Support & Community

Active community and documentation.

#8 — Maxim AI

Short description: A platform designed for evaluating AI agents and multi-step workflows.

Key Features

- Agent simulation

- Multi-step evaluation

- Scenario testing

- Performance tracking

- Evaluation frameworks
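Evaluating an agent means checking its trajectory, not just its final answer: did it call the right tools, in the right order, without taking forbidden actions or exceeding a step budget? A minimal sketch under hypothetical names; `Scenario`, the tool names, and the step limit are invented for illustration and are not Maxim AI's API.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class Scenario:
    """A hypothetical multi-step test case for an agent."""
    name: str
    required_steps: List[str]  # tools that must appear, in this order
    forbidden_steps: List[str] = field(default_factory=list)
    max_steps: int = 10

def is_subsequence(required: List[str], trajectory: List[str]) -> bool:
    """True if `required` appears in `trajectory` in order, with gaps allowed."""
    it = iter(trajectory)
    return all(step in it for step in required)  # `in` consumes the iterator

def check_trajectory(scenario: Scenario, trajectory: List[str]) -> List[str]:
    """Return failure reasons; an empty list means the scenario passed."""
    failures = []
    if not is_subsequence(scenario.required_steps, trajectory):
        failures.append("required steps missing or out of order")
    used_forbidden = sorted(set(trajectory) & set(scenario.forbidden_steps))
    if used_forbidden:
        failures.append(f"forbidden steps used: {used_forbidden}")
    if len(trajectory) > scenario.max_steps:
        failures.append(f"took {len(trajectory)} steps (limit {scenario.max_steps})")
    return failures

refund = Scenario(
    name="refund request",
    required_steps=["lookup_order", "check_policy", "issue_refund"],
    forbidden_steps=["delete_account"],
    max_steps=5,
)
print(check_trajectory(refund, ["lookup_order", "check_policy", "issue_refund"]))  # []
print(check_trajectory(refund, ["issue_refund", "lookup_order", "delete_account"]))
```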

Pros

- Strong for agent-based AI

- Advanced testing scenarios

Cons

- Newer platform

- Limited ecosystem

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Supports AI workflows and APIs.

Support & Community

Emerging community.

#9 — Robust Intelligence

Short description: A platform focused on AI security, testing, and validation of AI systems.

Key Features

- AI stress testing

- Risk analysis

- Model validation

- Security testing

- Compliance tools
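Stress testing probes whether a model's behaviour holds up under small, mostly meaning-preserving changes to its input. A minimal sketch: apply a few perturbations (casing, padding, a dropped character) and measure how often the label stays the same. The perturbations and the deliberately brittle keyword classifier are hypothetical; this is a generic technique, not Robust Intelligence's product.

```python
from typing import Callable, List

def perturbations(text: str) -> List[str]:
    """Hypothetical input perturbations: casing, whitespace padding, and a dropped character."""
    variants = [text.upper(), text.lower(), "  " + text + "  "]
    if len(text) > 3:
        mid = len(text) // 2
        variants.append(text[:mid] + text[mid + 1:])  # simulated typo
    return variants

def consistency_rate(classify: Callable[[str], str], inputs: List[str]) -> float:
    """Fraction of perturbed inputs whose label matches the label on the clean input."""
    agree = total = 0
    for text in inputs:
        clean_label = classify(text)
        for variant in perturbations(text):
            total += 1
            agree += classify(variant) == clean_label
    return agree / total

# Hypothetical keyword classifier that is brittle to casing.
def naive_spam_filter(text: str) -> str:
    return "spam" if "FREE" in text else "ham"

print(consistency_rate(naive_spam_filter, ["Get FREE money now", "See you at lunch"]))
```

The filter scores 0.875 here because lower-casing "FREE" flips its decision, which is exactly the kind of brittleness this test is meant to surface.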

Pros

- Strong safety focus

- Enterprise-ready

Cons

- Limited accessibility for small teams

- Pricing not transparent

Platforms / Deployment

Cloud

Security & Compliance

Not publicly stated

Integrations & Ecosystem

Supports enterprise integrations.

Support & Community

Enterprise support model.

#10 — AI Fairness 360

Short description: An open-source toolkit designed to detect and mitigate bias in AI systems.

Key Features

- Bias detection metrics

- Fairness algorithms

- Model evaluation tools

- Visualization tools

- Open-source framework
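Two of the group-fairness metrics AI Fairness 360 reports are statistical parity difference and disparate impact, both built from the rate of favourable outcomes in an unprivileged versus a privileged group. The sketch below computes those definitions in plain Python so the arithmetic is visible; it does not use the `aif360` package, and the predictions and group labels are hypothetical (1 is assumed to be the favourable outcome).

```python
from typing import Sequence

def selection_rate(predictions: Sequence[int], groups: Sequence[str], group: str) -> float:
    """Share of favourable (1) predictions within one group."""
    picked = [p for p, g in zip(predictions, groups) if g == group]
    return sum(picked) / len(picked)

def statistical_parity_difference(predictions, groups, unprivileged, privileged) -> float:
    """P(favourable | unprivileged) - P(favourable | privileged); 0 means parity."""
    return (selection_rate(predictions, groups, unprivileged)
            - selection_rate(predictions, groups, privileged))

def disparate_impact(predictions, groups, unprivileged, privileged) -> float:
    """P(favourable | unprivileged) / P(favourable | privileged); 1 means parity."""
    return (selection_rate(predictions, groups, unprivileged)
            / selection_rate(predictions, groups, privileged))

preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
print(statistical_parity_difference(preds, groups, "B", "A"))  # -0.5
print(disparate_impact(preds, groups, "B", "A"))               # 0.3333333333333333
```

Disparate impact is often compared with 0.8, the "four-fifths rule" from US employment-selection guidelines; a value of about 0.33, as here, would flag a large disparity.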

Pros

- Free and open-source

- Strong fairness focus

Cons

- Requires technical expertise

- Limited UI

Platforms / Deployment

Self-hosted

Security & Compliance

Varies

Integrations & Ecosystem

Supports ML frameworks and Python-based workflows.

Support & Community

Strong research and open-source community.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Fiddler AI | Enterprise monitoring | Web | Cloud | Explainability dashboards | N/A |
| IBM Watson OpenScale | Governance | Multi-platform | Hybrid | Bias detection | N/A |
| TruEra | Model quality | Web | Cloud | Model explainability | N/A |
| WhyLabs | Observability | Web | Cloud | Data monitoring | N/A |
| Braintrust | Evaluation testing | Web | Cloud | Automated scoring | N/A |
| Galileo AI | GenAI evaluation | Web | Cloud | Hallucination detection | N/A |
| Arize AI | Observability | Web | Cloud | Drift detection | N/A |
| Maxim AI | Agent testing | Web | Cloud | Scenario simulation | N/A |
| Robust Intelligence | Security testing | Web | Cloud | Risk analysis | N/A |
| AI Fairness 360 | Bias detection | Multi-platform | Self-hosted | Fairness toolkit | N/A |

Evaluation & Scoring of AI Safety & Evaluation Tools

| Tool Name | Core Features | Ease of Use | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Fiddler AI | 9 | 7 | 8 | 7 | 8 | 8 | 6 | 8.0 |
| IBM Watson OpenScale | 9 | 6 | 8 | 8 | 8 | 8 | 6 | 8.0 |
| TruEra | 8 | 7 | 7 | 6 | 8 | 7 | 6 | 7.3 |
| WhyLabs | 7 | 8 | 7 | 6 | 7 | 7 | 8 | 7.4 |
| Braintrust | 9 | 7 | 8 | 6 | 9 | 7 | 7 | 8.0 |
| Galileo AI | 8 | 7 | 7 | 6 | 8 | 7 | 6 | 7.3 |
| Arize AI | 8 | 7 | 8 | 6 | 8 | 7 | 7 | 7.6 |
| Maxim AI | 8 | 7 | 7 | 6 | 8 | 6 | 7 | 7.2 |
| Robust Intelligence | 9 | 6 | 7 | 8 | 8 | 7 | 6 | 7.9 |
| AI Fairness 360 | 7 | 6 | 6 | 6 | 7 | 7 | 9 | 7.1 |

How to interpret scores:

These scores compare the tools across seven categories. A higher weighted total indicates stronger overall performance across those categories, but the best choice depends on your use case: enterprise users may prioritize security and compliance, while smaller teams may focus on ease of use and cost efficiency.
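The Weighted Total column does not state the weights behind it: an equal-weight average of Fiddler AI's row, for example, is about 7.6 rather than the 8.0 shown. If you want totals that reflect your own priorities, a short script can recompute them. In this sketch the category keys mirror the table columns, the scores are copied from two rows of the scoring table, and the `small_team` weights are hypothetical.

```python
from typing import Dict

CATEGORIES = ["core", "ease", "integrations", "security", "performance", "support", "value"]

def weighted_total(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of the category scores; weights need not sum to 1."""
    total_weight = sum(weights[c] for c in CATEGORIES)
    return sum(scores[c] * weights[c] for c in CATEGORIES) / total_weight

# Category scores from the Fiddler AI and AI Fairness 360 rows of the scoring table.
fiddler = dict(zip(CATEGORIES, [9, 7, 8, 7, 8, 8, 6]))
aif360 = dict(zip(CATEGORIES, [7, 6, 6, 6, 7, 7, 9]))

equal = {c: 1 for c in CATEGORIES}
# Hypothetical weights for a small, cost-sensitive team: value and ease count most.
small_team = {"core": 1, "ease": 3, "integrations": 1, "security": 1,
              "performance": 1, "support": 1, "value": 5}

for name, weights in [("equal", equal), ("small_team", small_team)]:
    print(name, round(weighted_total(fiddler, weights), 2), round(weighted_total(aif360, weights), 2))
```

With equal weights Fiddler AI leads (about 7.57 vs 6.86); with the cost-sensitive weights AI Fairness 360 comes out ahead (about 7.38 vs 7.0), which is why the right choice depends on your use case.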

Which AI Safety & Evaluation Tool Is Right for You?

Solo / Freelancer

AI Fairness 360 and other free, lightweight tools suit experimentation and basic evaluation needs.

SMB

WhyLabs and Braintrust provide a balance of usability and evaluation capabilities.

Mid-Market

Arize AI and TruEra offer strong monitoring and model evaluation features.

Enterprise

Fiddler AI, IBM Watson OpenScale, and Robust Intelligence offer the broadest governance, compliance, and safety features in this list.

Budget vs Premium

Open-source tools such as AI Fairness 360 are free and flexible but require more technical setup, while enterprise platforms deliver advanced capabilities at a higher, often undisclosed, price.

Feature Depth vs Ease of Use

Advanced platforms provide deeper insights but require expertise; simpler tools focus on usability.

Integrations & Scalability

Arize AI and IBM Watson OpenScale excel in large-scale deployments.

Security & Compliance Needs

Highly regulated industries should prioritize enterprise-grade governance tools.

Frequently Asked Questions (FAQs)

1. What are AI Safety & Evaluation Tools?

These tools help measure, test, and improve AI system behavior. They ensure outputs are accurate, safe, and aligned with expected outcomes through structured evaluation and monitoring.

2. Why are these tools important?

They reduce risks such as hallucinations, bias, and incorrect outputs. Without proper evaluation, issues often appear only after deployment, impacting users and business outcomes.

3. Do these tools work with all AI models?

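Not all of them. Most tools support multiple AI models and APIs, but coverage differs: some focus on traditional ML models and data pipelines, while others specialize in LLM and generative AI applications. Check each tool's supported frameworks and model types before committing.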

4. How do they detect AI risks?

They use scoring systems, benchmarks, and monitoring frameworks to detect anomalies, bias, and unsafe behavior. Many also include real-time alerts and dashboards.

5. Are these tools only for enterprises?

No, there are options for startups and individuals as well. However, enterprise tools provide more advanced governance and compliance capabilities.

6. How long does implementation take?

Implementation can range from a few hours for simple tools to several weeks for enterprise systems depending on integrations and complexity.

7. Do they support real-time monitoring?

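Most of the commercial platforms in this list offer real-time monitoring to track AI performance and detect issues as they occur in production. Open-source libraries such as AI Fairness 360 are designed for offline analysis, so you would need to build them into your own monitoring pipeline.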

8. Can these tools improve AI accuracy?

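Indirectly. The tools do not change a model themselves, but by identifying weak areas such as failing test cases, underperforming data segments, or drifting inputs, they enable iterative improvements that raise accuracy and reliability over time.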

9. What are common mistakes when using these tools?

Common mistakes include not defining clear evaluation metrics, ignoring production monitoring, and failing to integrate evaluation into workflows.

10. Are open-source tools reliable?

Open-source tools can be highly reliable if implemented correctly. However, they may require more technical expertise and customization.

Conclusion

AI Safety & Evaluation Tools have become essential as AI systems move into real-world applications. They provide the structure needed to test, validate, and monitor AI systems effectively, which builds reliability and trust. Without them, organizations risk deploying models that behave unpredictably or fail under real-world conditions.

There is no single “best” tool for every scenario. Enterprise users may require platforms like Fiddler AI or IBM Watson OpenScale for comprehensive governance, while mid-sized teams might benefit from Arize AI or Braintrust for balanced evaluation capabilities. Smaller teams and researchers can leverage open-source tools like AI Fairness 360. The key is to align your tool choice with your team’s technical maturity, risk tolerance, and deployment scale, and to favor tools that integrate well with your existing workflows and provide actionable insights.

Start by shortlisting two or three tools that match your needs. Run controlled experiments, validate your evaluation metrics, and monitor real-world performance before making a final decision. This approach lowers the risk of a poor fit and supports safe, long-term AI deployment.