Introduction

Relevance evaluation toolkits help organizations measure, benchmark, and improve the quality of search results, recommendations, rankings, and AI-generated responses. They are commonly used in search engines, retrieval-augmented generation (RAG) systems, semantic search pipelines, recommendation systems, and AI assistants to check whether retrieved results are accurate, useful, contextually relevant, and aligned with user intent.

As enterprises increasingly adopt generative AI, vector search, retrieval-augmented generation, and intelligent recommendation systems, relevance evaluation has become a critical operational requirement. Poor retrieval quality can reduce trust in AI systems, create inaccurate recommendations, weaken user experience, and increase hallucination risks in large language model workflows.

Common use cases include:

- Search quality benchmarking

- Retrieval-augmented generation evaluation

- Recommendation system testing

- Ranking model evaluation

- AI response relevance analysis

- Semantic retrieval optimization

Key evaluation criteria include:

- Retrieval quality metrics support

- LLM and RAG evaluation capabilities

- Human feedback integration

- Benchmarking flexibility

- Experiment tracking

- Scalability and automation

- Visualization and analytics

- API and SDK ecosystem

- Security and governance controls

- Integration with AI pipelines

Best for: AI engineering teams, search relevance engineers, recommendation system developers, enterprise AI platforms, ML operations teams, and organizations building semantic retrieval systems.

Not ideal for: Businesses without AI retrieval systems, simple keyword-only search environments, or organizations that do not need systematic relevance testing and benchmarking.

Key Trends in Relevance Evaluation Toolkits

- Retrieval-augmented generation evaluation is becoming a major driver for toolkit adoption.

- LLM-as-a-judge workflows are increasingly used for automated evaluation.

- Human feedback loops are being combined with AI-assisted scoring systems.

- Semantic retrieval evaluation is replacing purely keyword-based ranking analysis.

- Experiment tracking and observability integration are becoming essential features.

- Synthetic dataset generation is helping teams accelerate benchmarking workflows.

- Multimodal relevance evaluation is expanding beyond text-only search systems.

- Continuous evaluation pipelines are becoming part of AI production operations.

- Explainability and traceability are becoming more important for enterprise governance.

- Hybrid evaluation approaches combining human and automated scoring are growing rapidly.

How We Selected These Tools

The tools in this list were selected using a practical evaluation methodology focused on search relevance, AI evaluation, and retrieval benchmarking. We considered the following criteria:

- Market adoption and developer popularity

- Support for search and retrieval evaluation metrics

- LLM and RAG workflow compatibility

- Automation and experiment tracking capabilities

- Integration flexibility across AI pipelines

- Scalability for production workloads

- Visualization and reporting features

- Open-source and enterprise ecosystem maturity

- Security and governance capabilities

- Community adoption and documentation quality

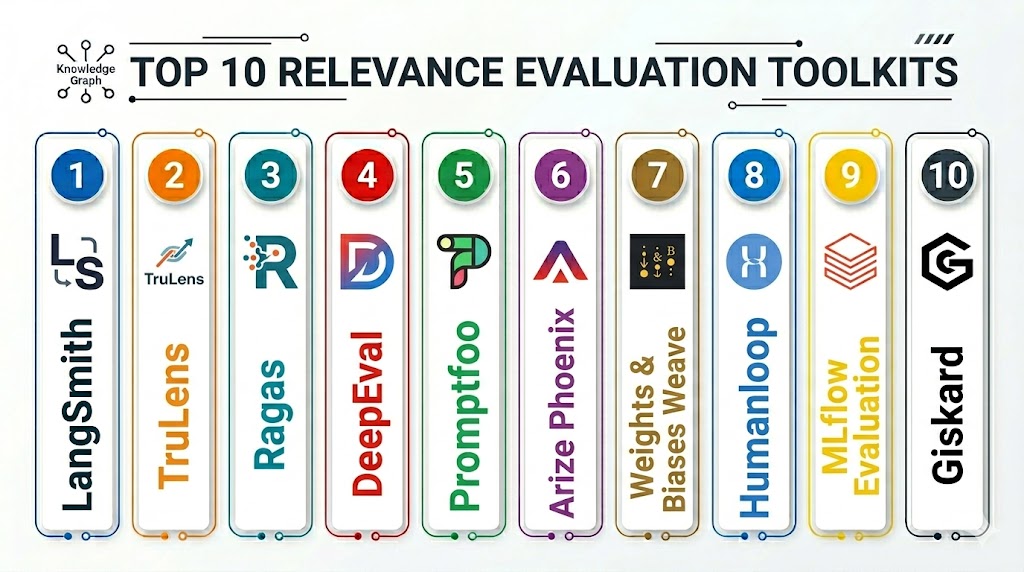

Top 10 Relevance Evaluation Toolkits

1- Ragas

Short description: Ragas is one of the most widely adopted open-source evaluation frameworks for retrieval-augmented generation systems and LLM applications. It helps teams measure answer correctness, faithfulness, context precision, and retrieval quality in AI workflows. Ragas is especially popular among developers building AI assistants, semantic search systems, and enterprise RAG architectures. It focuses heavily on automated evaluation using language model-based scoring.

Key Features

- RAG evaluation metrics

- Faithfulness scoring

- Context relevance analysis

- Answer correctness evaluation

- Synthetic test generation

- LLM-assisted evaluation

- Experiment benchmarking

Pros

- Strong RAG evaluation focus

- Good developer experience

- Open-source flexibility

- Useful automated scoring workflows

Cons

- Requires LLM configuration

- Advanced customization needs expertise

- Enterprise governance features are limited

- Human evaluation workflows may require additional tooling

Platforms / Deployment

Linux / macOS / Windows

Cloud / Self-hosted

Security & Compliance

Security depends on deployment architecture and model integrations.

Formal compliance details are not publicly stated.

Integrations & Ecosystem

Ragas integrates with LLM orchestration systems, evaluation workflows, and AI retrieval frameworks.

- LangChain integration

- LlamaIndex support

- OpenAI compatibility

- Hugging Face support

- Python SDK

- RAG pipeline integrations

Support & Community

Strong open-source AI evaluation community with growing adoption in generative AI projects.
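To make the context precision idea concrete, here is a minimal plain-Python sketch. It assumes each retrieved chunk already carries a relevance flag (in a Ragas workflow an LLM judge would typically supply these) and averages precision@k over the ranks that hold a relevant chunk. The function name `context_precision` and the sample flags are illustrative assumptions, not the Ragas API.

```python
from typing import List


def context_precision(relevance_flags: List[bool]) -> float:
    """Average precision@k over the ranks where a relevant chunk appears.

    relevance_flags[i] says whether the chunk at rank i + 1 was judged relevant.
    Returns 0.0 when no chunk is relevant.
    """
    hits = 0
    running_total = 0.0
    for rank, is_relevant in enumerate(relevance_flags, start=1):
        if is_relevant:
            hits += 1
            running_total += hits / rank  # precision@rank at this relevant position
    return running_total / hits if hits else 0.0


# Hypothetical judgments for two retrievals of three chunks each.
good_order = context_precision([True, True, False])
poor_order = context_precision([False, True, True])
print(f"relevant chunks first: {good_order:.3f}")
print(f"relevant chunks last:  {poor_order:.3f}")
```

The same two relevant chunks score lower when they are ranked below an irrelevant one, which is the behavior a retrieval-ordering metric should reward or penalize.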

2- TruLens

Short description: TruLens is an open-source evaluation and observability framework for LLM applications and retrieval systems. It provides relevance scoring, feedback analysis, tracing, and evaluation workflows for AI pipelines. TruLens is widely used for improving trust, transparency, and performance in enterprise AI systems. It supports both automated and human-centric evaluation strategies.

Key Features

- LLM observability

- Retrieval relevance scoring

- Feedback and tracing workflows

- AI pipeline evaluation

- Hallucination analysis

- Experiment tracking

- Human feedback integration

Pros

- Strong observability features

- Useful tracing capabilities

- Good RAG workflow support

- Flexible evaluation architecture

Cons

- Requires operational setup

- Enterprise workflows may need customization

- Scaling observability can become complex

- Advanced analytics require tuning

Platforms / Deployment

Linux / macOS / Windows

Cloud / Self-hosted

Security & Compliance

Authentication and governance depend on deployment configuration.

Integrations & Ecosystem

TruLens integrates with AI orchestration systems, monitoring stacks, and LLM evaluation workflows.

- LangChain support

- LlamaIndex integration

- OpenAI compatibility

- Hugging Face support

- Python SDK

- AI observability integrations

Support & Community

Growing AI observability community with active open-source contributions.

3- DeepEval

Short description: DeepEval is an open-source evaluation framework focused on LLM systems, retrieval workflows, and AI application testing. It supports benchmarking, answer quality analysis, hallucination detection, and semantic relevance scoring for generative AI applications. It is increasingly used by AI engineering teams building production AI assistants and enterprise retrieval systems.

Key Features

- LLM evaluation workflows

- Retrieval quality scoring

- Hallucination detection

- Benchmark automation

- Semantic similarity testing

- Custom evaluation metrics

- AI testing pipelines

Pros

- Good automated testing support

- Strong AI evaluation capabilities

- Useful semantic relevance analysis

- Flexible metric customization

Cons

- Requires AI pipeline expertise

- Advanced setups can become complex

- Enterprise governance tooling is limited

- Human review workflows may require external systems

Platforms / Deployment

Linux / macOS / Windows

Cloud / Self-hosted

Security & Compliance

Security controls depend on infrastructure configuration.

Integrations & Ecosystem

DeepEval integrates with modern AI orchestration systems, embeddings, and retrieval workflows.

- OpenAI integration

- LangChain support

- Hugging Face compatibility

- Python SDK

- CI/CD pipeline integration

- RAG workflow support

Support & Community

Growing developer adoption with active AI testing ecosystem support.

4- Elasticsearch Ranking Evaluation API

Short description: The Elasticsearch Ranking Evaluation API helps organizations evaluate search ranking quality using configurable relevance judgments and retrieval metrics. It is useful for enterprises managing search engines, semantic retrieval systems, and hybrid ranking environments. The API runs a set of representative queries against an index and scores the returned results against manually rated documents, so evaluation happens directly within Elasticsearch-based search architectures.

Key Features

- Search relevance benchmarking

- Ranking evaluation APIs

- Precision, recall, MRR, DCG, and ERR metrics

- Hybrid search evaluation

- Query performance analysis

- Search analytics integration

- Distributed evaluation workflows

Pros

- Strong Elasticsearch ecosystem integration

- Good traditional search evaluation support

- Enterprise scalability

- Useful ranking analysis capabilities

Cons

- Best suited for Elasticsearch users

- Limited LLM-native workflows

- Semantic evaluation requires customization

- Advanced AI retrieval testing needs extensions

Platforms / Deployment

Linux / Windows / macOS

Cloud / Self-hosted / Hybrid

Security & Compliance

RBAC, encryption support, authentication integration, audit logging.

Integrations & Ecosystem

Elasticsearch integrates with analytics systems, observability stacks, AI retrieval workflows, and enterprise infrastructure.

- Kibana integration

- Search analytics workflows

- API integrations

- Hybrid search support

- Cloud platform connectivity

- Observability integrations

Support & Community

Large enterprise search ecosystem with strong operational documentation.
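As a rough illustration of what a ranking evaluation request looks like, the sketch below builds a request body for the `_rank_eval` endpoint in Python and prints it as JSON. The body layout (`requests`, `ratings`, `metric`) follows the Elasticsearch documentation as we understand it; the index name `products`, the `title` field, the document IDs, and the helper `build_rank_eval_body` are hypothetical. Nothing is sent to a cluster here.

```python
import json
from typing import Dict, List


def build_rank_eval_body(
    index: str, judged_queries: Dict[str, Dict[str, int]], k: int = 10
) -> dict:
    """Build a _rank_eval request body that scores precision at k.

    judged_queries maps a query string to {document_id: rating}.
    """
    requests: List[dict] = []
    for number, (query_text, ratings) in enumerate(judged_queries.items(), start=1):
        requests.append(
            {
                "id": f"query_{number}",
                # Hypothetical query: a simple match on an assumed "title" field.
                "request": {"query": {"match": {"title": query_text}}},
                "ratings": [
                    {"_index": index, "_id": doc_id, "rating": rating}
                    for doc_id, rating in ratings.items()
                ],
            }
        )
    return {
        "requests": requests,
        # Documents rated 1 or higher count as relevant.
        "metric": {"precision": {"k": k, "relevant_rating_threshold": 1}},
    }


body = build_rank_eval_body(
    "products", {"wireless headphones": {"doc-1": 1, "doc-7": 0}}, k=5
)
print(json.dumps(body, indent=2))
```

The resulting JSON would be sent as the body of a request to `/<index>/_rank_eval`. Swapping the `metric` block is how a different measure would be selected.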

5- Haystack Evaluation Framework

Short description: Haystack provides retrieval and search evaluation tooling for semantic search, question answering, and retrieval-augmented generation systems. It supports benchmarking, ranking analysis, and pipeline evaluation for AI retrieval workflows. Haystack is widely used for enterprise search modernization and LLM retrieval applications.

Key Features

- Search pipeline evaluation

- Semantic retrieval benchmarking

- Ranking metrics support

- Question-answer evaluation

- Hybrid search workflows

- AI retrieval integration

- Pipeline observability

Pros

- Strong semantic retrieval support

- Good enterprise AI integration

- Flexible pipeline architecture

- Open-source ecosystem

Cons

- Requires AI pipeline expertise

- Large deployments require tuning

- Enterprise governance may need extensions

- Advanced metrics customization can be complex

Platforms / Deployment

Linux / macOS / Windows

Cloud / Self-hosted

Security & Compliance

Authentication and governance vary by deployment setup.

Integrations & Ecosystem

Haystack integrates with vector databases, AI pipelines, and semantic search systems.

- OpenAI integration

- Weaviate support

- Pinecone integration

- Elasticsearch support

- LangChain workflows

- Hugging Face compatibility

Support & Community

Strong AI search community with growing enterprise adoption.

6- Evidently AI

Short description: Evidently AI is a machine learning observability and evaluation platform that supports model monitoring, data drift analysis, and relevance evaluation workflows for AI systems. It helps organizations monitor retrieval quality, ranking behavior, and model performance in production AI environments.

Key Features

- Model monitoring

- Ranking quality analysis

- Drift detection

- Experiment visualization

- Automated reporting

- Retrieval evaluation workflows

- Performance dashboards

Pros

- Strong observability features

- Useful visualization capabilities

- Good monitoring support

- Flexible deployment options

Cons

- Not solely focused on relevance evaluation

- Advanced retrieval workflows need customization

- Large-scale deployments require planning

- AI infrastructure expertise required

Platforms / Deployment

Linux / macOS / Windows

Cloud / Self-hosted

Security & Compliance

Authentication support and governance capabilities vary by deployment architecture.

Integrations & Ecosystem

Evidently AI integrates with ML monitoring stacks, AI pipelines, and analytics workflows.

- MLflow integration

- Python SDK

- CI/CD workflows

- Dashboard integrations

- Monitoring stack support

- AI pipeline compatibility

Support & Community

Strong machine learning operations community with growing enterprise observability adoption.

7- OpenSearch Ranking Evaluation

Short description: OpenSearch Ranking Evaluation provides relevance benchmarking and ranking quality analysis within OpenSearch-based search environments. It supports search optimization, retrieval benchmarking, and query quality analysis for semantic and hybrid search systems.

Key Features

- Search ranking evaluation

- Query benchmarking

- Relevance scoring

- Hybrid retrieval analysis

- Distributed search support

- Metrics reporting

- Search analytics integration

Pros

- Open-source flexibility

- Good hybrid search support

- Enterprise scalability

- Strong search ecosystem integration

Cons

- Best for OpenSearch environments

- Limited LLM-native workflows

- Requires operational expertise

- Semantic retrieval customization required

Platforms / Deployment

Linux / macOS / Windows

Cloud / Self-hosted / Hybrid

Security & Compliance

RBAC, authentication controls, encryption support.

Integrations & Ecosystem

OpenSearch integrates with observability systems, analytics stacks, and enterprise search infrastructure.

- Dashboard integrations

- Search analytics workflows

- API support

- Hybrid search systems

- Cloud platform connectivity

- Monitoring integrations

Support & Community

Strong open-source search community with growing enterprise support ecosystem.

8- Arize Phoenix

Short description: Arize Phoenix is an open-source AI observability and evaluation platform focused on retrieval systems, embeddings, ranking analysis, and LLM workflows. It helps organizations monitor retrieval quality, detect hallucinations, and improve semantic search performance.

Key Features

- AI observability

- Embedding analysis

- Retrieval evaluation

- Hallucination detection

- LLM tracing workflows

- Ranking quality analysis

- Interactive dashboards

Pros

- Strong retrieval observability

- Good embedding visualization

- Useful AI debugging tools

- Modern AI monitoring workflows

Cons

- Requires operational integration

- Enterprise workflows may need customization

- Scaling monitoring can become complex

- Advanced governance features are evolving

Platforms / Deployment

Linux / macOS / Windows

Cloud / Self-hosted

Security & Compliance

Authentication controls and governance vary by deployment setup.

Integrations & Ecosystem

Arize Phoenix integrates with AI pipelines, embeddings systems, observability workflows, and retrieval frameworks.

- OpenAI integration

- LangChain support

- LlamaIndex compatibility

- Python SDK

- Embedding workflows

- AI observability systems

Support & Community

Rapidly growing AI observability ecosystem with strong developer adoption.

9- PyTerrier

Short description: PyTerrier is an open-source Python-based information retrieval experimentation framework used for relevance evaluation, ranking analysis, and retrieval benchmarking. It is heavily adopted in academic IR research and search experimentation workflows.

Key Features

- Retrieval experimentation

- Ranking evaluation metrics

- Search benchmarking

- Python-based workflows

- Information retrieval pipelines

- Query optimization analysis

- Evaluation automation

Pros

- Strong academic IR capabilities

- Flexible experimentation workflows

- Open-source accessibility

- Good benchmarking support

Cons

- Less enterprise-focused

- Requires IR expertise

- Limited governance features

- Production deployment needs additional tooling

Platforms / Deployment

Linux / macOS / Windows

Self-hosted

Security & Compliance

Security depends on local deployment configuration.

Integrations & Ecosystem

PyTerrier integrates with IR systems, Python workflows, and ranking experimentation environments.

- Python ecosystem integration

- Search engine compatibility

- Ranking metrics support

- Benchmark datasets

- IR experimentation workflows

- API integration

Support & Community

Strong academic information retrieval community and research adoption.

10- MLflow Evaluation

Short description: MLflow Evaluation provides experiment tracking, model evaluation, and benchmarking workflows for machine learning and AI systems. It supports retrieval evaluation indirectly through experiment management and model comparison capabilities. It is useful for organizations standardizing AI experimentation and evaluation pipelines.

Key Features

- Experiment tracking

- Model evaluation workflows

- Benchmark comparisons

- Metric visualization

- Pipeline observability

- AI lifecycle management

- Automated experiment logging

Pros

- Strong ML operations integration

- Good experiment tracking

- Broad ecosystem adoption

- Useful workflow standardization

Cons

- Not dedicated solely to relevance evaluation

- Retrieval workflows require customization

- Advanced ranking analysis may need extensions

- Semantic evaluation support varies

Platforms / Deployment

Linux / macOS / Windows

Cloud / Self-hosted / Hybrid

Security & Compliance

Authentication controls, RBAC support, experiment governance capabilities.

Integrations & Ecosystem

MLflow integrates with machine learning workflows, orchestration systems, and enterprise AI infrastructure.

- Databricks integration

- Python SDK

- CI/CD workflows

- AI pipeline integrations

- Experiment tracking systems

- Monitoring workflows

Support & Community

Large machine learning operations ecosystem with strong enterprise and open-source adoption.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Ragas | RAG evaluation workflows | Linux, Windows, macOS | Cloud, Self-hosted | Faithfulness scoring | N/A |
| TruLens | AI observability and evaluation | Linux, Windows, macOS | Cloud, Self-hosted | LLM tracing workflows | N/A |
| DeepEval | Automated AI testing | Linux, Windows, macOS | Cloud, Self-hosted | Hallucination evaluation | N/A |
| Elasticsearch Ranking Evaluation API | Enterprise search ranking | Linux, Windows, macOS | Cloud, Self-hosted, Hybrid | Native ranking evaluation | N/A |
| Haystack Evaluation Framework | Semantic retrieval pipelines | Linux, Windows, macOS | Cloud, Self-hosted | Search pipeline benchmarking | N/A |
| Evidently AI | AI monitoring and evaluation | Linux, Windows, macOS | Cloud, Self-hosted | Drift and monitoring analysis | N/A |
| OpenSearch Ranking Evaluation | Open-source search evaluation | Linux, Windows, macOS | Cloud, Self-hosted, Hybrid | Open-source ranking analysis | N/A |
| Arize Phoenix | AI retrieval observability | Linux, Windows, macOS | Cloud, Self-hosted | Embedding visualization | N/A |
| PyTerrier | Academic IR benchmarking | Linux, Windows, macOS | Self-hosted | Retrieval experimentation | N/A |
| MLflow Evaluation | Experiment management | Linux, Windows, macOS | Cloud, Self-hosted, Hybrid | AI experiment tracking | N/A |

Evaluation & Scoring of Relevance Evaluation Toolkits

| Tool Name | Core 25% | Ease 15% | Integrations 15% | Security 10% | Performance 10% | Support 10% | Value 15% | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Ragas | 9.2 | 8.5 | 8.8 | 7.8 | 8.8 | 8.5 | 9.2 | 8.8 |
| TruLens | 9.0 | 8.0 | 8.8 | 8.0 | 8.8 | 8.5 | 8.8 | 8.6 |
| DeepEval | 8.8 | 8.2 | 8.5 | 7.8 | 8.5 | 8.2 | 8.8 | 8.5 |
| Elasticsearch Ranking Evaluation API | 8.7 | 7.5 | 9.0 | 9.0 | 9.0 | 9.0 | 8.0 | 8.6 |
| Haystack Evaluation Framework | 8.8 | 8.0 | 8.8 | 7.8 | 8.5 | 8.5 | 8.5 | 8.5 |
| Evidently AI | 8.2 | 8.2 | 8.5 | 8.5 | 8.5 | 8.5 | 8.2 | 8.3 |
| OpenSearch Ranking Evaluation | 8.5 | 7.5 | 8.8 | 8.8 | 8.8 | 8.5 | 8.2 | 8.4 |
| Arize Phoenix | 8.8 | 8.0 | 8.8 | 8.0 | 8.8 | 8.5 | 8.5 | 8.5 |
| PyTerrier | 8.2 | 7.5 | 8.0 | 7.0 | 8.2 | 8.0 | 9.0 | 8.0 |
| MLflow Evaluation | 8.0 | 8.2 | 9.0 | 8.5 | 8.5 | 8.8 | 8.5 | 8.4 |

These scores are comparative and should be interpreted based on the type of relevance evaluation workflows your organization manages. Some platforms specialize in retrieval-augmented generation evaluation, while others focus more on search ranking analysis, AI observability, or experimentation workflows. Open-source frameworks provide strong flexibility but may require operational expertise and custom governance. Organizations should validate evaluation quality using real retrieval datasets, production-like AI workflows, and representative search scenarios before selecting a long-term evaluation toolkit.
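For readers who want to reweight the table for their own priorities, the following sketch shows how a weighted total can be computed. It assumes the total is a plain weighted sum using the percentages in the column headers; the dictionary keys and the function name `weighted_total` are illustrative, and the sample row uses the TruLens scores from the table.

```python
# Column weights taken from the table header (they sum to 1.0).
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores: dict, weights: dict = WEIGHTS) -> float:
    """Return the weighted sum of per-criterion scores."""
    return sum(weight * scores[name] for name, weight in weights.items())


# TruLens row from the scoring table.
trulens = {
    "core": 9.0,
    "ease": 8.0,
    "integrations": 8.8,
    "security": 8.0,
    "performance": 8.8,
    "support": 8.5,
    "value": 8.8,
}
trulens_total = weighted_total(trulens)
print(f"TruLens weighted total: {trulens_total:.2f}")
```

Passing a different `weights` dictionary, for example one that raises the security weight, is a quick way to see how the ranking shifts for a governance-heavy organization.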

Which Relevance Evaluation Toolkit Is Right for You?

Solo / Freelancer

Independent AI developers and experimental retrieval projects often benefit from lightweight open-source frameworks such as Ragas, DeepEval, or PyTerrier. These tools provide strong experimentation capabilities without requiring heavy enterprise infrastructure. Simplicity and flexibility are usually more important than governance at this stage. Local evaluation workflows can also reduce operational complexity.

SMB

SMBs should prioritize usability, automation, and manageable operational overhead. Ragas, TruLens, Haystack Evaluation Framework, and Arize Phoenix are strong options depending on whether the organization focuses on RAG systems, semantic search, or observability. SMBs should focus on tools that integrate easily with their existing AI stack. Automated evaluation workflows can significantly reduce manual testing effort.

Mid-Market

Mid-market organizations typically require stronger monitoring, scalability, and experiment tracking capabilities. TruLens, Elasticsearch Ranking Evaluation API, Arize Phoenix, and Haystack Evaluation Framework are strong candidates for production AI retrieval systems. Teams should prioritize observability and reproducibility alongside raw retrieval metrics. Governance and workflow standardization become increasingly important at this stage.

Enterprise

Large enterprises should prioritize scalability, governance, observability, hybrid deployment flexibility, and integration depth. Elasticsearch Ranking Evaluation API, OpenSearch Ranking Evaluation, MLflow Evaluation, TruLens, and Arize Phoenix are strong options depending on infrastructure strategy. Enterprises should also evaluate long-term operational costs, security controls, and monitoring workflows. Continuous evaluation pipelines are critical for production AI governance.

Budget vs Premium

Open-source relevance evaluation frameworks provide strong flexibility and lower upfront costs, while enterprise monitoring platforms deliver better governance, scalability, and operational tooling. Buyers should compare infrastructure overhead, staffing needs, and workflow complexity alongside licensing costs. Managed observability services may reduce operational burden for growing AI systems.

Feature Depth vs Ease of Use

Highly customizable frameworks often provide deeper evaluation capabilities but may require more engineering effort. Easier platforms can improve adoption but may not support every advanced benchmarking requirement. Teams should balance developer productivity against evaluation sophistication. Pilot testing helps identify practical operational trade-offs early.

Integrations & Scalability

Relevance evaluation platforms should integrate with vector databases, retrieval systems, AI orchestration frameworks, observability stacks, and CI/CD workflows. Scalability testing should include evaluation latency, experiment volume, concurrent pipelines, and monitoring overhead. The volume of continuous evaluation runs often grows significantly as retrieval systems expand. Distributed evaluation architectures may become important at enterprise scale.
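One common way to wire evaluation into a CI/CD workflow is a regression gate that compares a candidate run against a stored baseline. The sketch below is a minimal, tool-agnostic version; the metric names, the numbers, the 0.01 tolerance, and the function `find_regressions` are all assumptions for illustration, and it treats every metric as higher-is-better.

```python
from typing import Dict, List


def find_regressions(
    baseline: Dict[str, float], candidate: Dict[str, float], tolerance: float = 0.01
) -> List[str]:
    """List metrics where the candidate is missing or worse than baseline - tolerance.

    Assumes higher values are better for every metric.
    """
    failures: List[str] = []
    for metric, base_value in baseline.items():
        new_value = candidate.get(metric)
        if new_value is None:
            failures.append(f"{metric}: missing from candidate run")
        elif new_value < base_value - tolerance:
            failures.append(f"{metric}: {base_value:.3f} -> {new_value:.3f}")
    return failures


# Hypothetical results from a baseline run and a candidate retriever change.
baseline = {"ndcg@10": 0.712, "mrr": 0.640}
candidate = {"ndcg@10": 0.718, "mrr": 0.601}

failures = find_regressions(baseline, candidate)
for line in failures:
    print("REGRESSION", line)
```

In a pipeline, a non-empty `failures` list would typically translate into a non-zero exit code so that the change is blocked until someone reviews the drop.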

Security & Compliance Needs

Organizations handling sensitive enterprise data should prioritize RBAC, authentication controls, audit logging, governance workflows, and secure experiment management. AI evaluation pipelines can expose sensitive retrieval data if governance is weak. Security reviews should include embeddings, prompts, datasets, and evaluation outputs. Compliance requirements should be validated before production deployment.

Frequently Asked Questions

1. What is a relevance evaluation toolkit?

A relevance evaluation toolkit helps organizations measure the quality of search results, retrieval systems, rankings, recommendations, or AI-generated responses. These platforms use metrics, benchmarking workflows, and evaluation pipelines to determine whether retrieved content matches user intent. They are widely used in semantic search and generative AI environments. Relevance evaluation improves trust and performance in AI systems.

2. Why is relevance evaluation important for AI systems?

Relevance evaluation helps verify that AI systems retrieve useful, accurate, and contextually correct information. Poor retrieval quality can increase hallucinations, reduce recommendation quality, and damage user trust. Evaluation frameworks help organizations benchmark performance and improve retrieval workflows systematically. Continuous evaluation is becoming critical for enterprise AI governance.

3. What are common relevance evaluation metrics?

Common metrics include precision, recall, Mean Reciprocal Rank (MRR), Normalized Discounted Cumulative Gain (NDCG), semantic similarity, faithfulness, context precision, and answer correctness. Modern RAG systems also evaluate hallucination risk and retrieval grounding. Different use cases require different metric combinations. Organizations should align metrics with business objectives.
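The classic ranking metrics are simple enough to compute by hand, which helps when sanity-checking a toolkit's output. The sketch below implements precision@k, recall@k, reciprocal rank, and NDCG@k in plain Python using the standard log2 discount; the document IDs and graded relevance values are made up for illustration.

```python
import math
from typing import Dict, Sequence, Set


def precision_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Share of the top-k results that are relevant."""
    return sum(doc in relevant for doc in ranked[:k]) / k


def recall_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Share of all relevant documents that appear in the top k."""
    if not relevant:
        return 0.0
    return sum(doc in relevant for doc in ranked[:k]) / len(relevant)


def reciprocal_rank(ranked: Sequence[str], relevant: Set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none is found."""
    for rank, doc in enumerate(ranked, start=1):
        if doc in relevant:
            return 1.0 / rank
    return 0.0


def ndcg_at_k(ranked: Sequence[str], gains: Dict[str, float], k: int) -> float:
    """DCG of the ranking divided by the DCG of an ideal ordering."""
    dcg = sum(
        gains.get(doc, 0.0) / math.log2(rank + 1)
        for rank, doc in enumerate(ranked[:k], start=1)
    )
    ideal_gains = sorted(gains.values(), reverse=True)[:k]
    idcg = sum(g / math.log2(rank + 1) for rank, g in enumerate(ideal_gains, start=1))
    return dcg / idcg if idcg > 0 else 0.0


# Hypothetical ranking and graded judgments for a single query.
ranked = ["d3", "d1", "d7", "d2"]
gains = {"d1": 3.0, "d2": 2.0, "d5": 1.0}
relevant = set(gains)

p3 = precision_at_k(ranked, relevant, 3)
r3 = recall_at_k(ranked, relevant, 3)
rr = reciprocal_rank(ranked, relevant)
ndcg4 = ndcg_at_k(ranked, gains, 4)
print(f"P@3={p3:.3f} R@3={r3:.3f} RR={rr:.3f} NDCG@4={ndcg4:.3f}")
```

Averaging `reciprocal_rank` over a set of queries gives MRR. The LLM-oriented metrics in the list (faithfulness, answer correctness) usually need a judge model and are not covered by this sketch.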

4. What is retrieval-augmented generation evaluation?

Retrieval-augmented generation evaluation measures how effectively a retrieval system provides relevant context to an LLM and how accurately the generated response uses that information. It often includes faithfulness, relevance, and grounding analysis. RAG evaluation is increasingly important for enterprise AI assistants and semantic search applications. Automated and human review workflows are commonly combined.

5. Can relevance evaluation be fully automated?

Partially. Automated evaluation frameworks can score retrieval quality, semantic similarity, and hallucination risk using metrics and LLM-based judging systems. However, human feedback is still important for nuanced evaluation and business alignment. Many organizations use hybrid evaluation workflows combining automation with human review. Fully automated systems may miss context-specific quality issues.
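A practical check before trusting an automated judge is to measure how often it agrees with human reviewers on a shared sample. The sketch below computes Cohen's kappa, a standard chance-corrected agreement statistic, in plain Python; the label lists are invented, and using kappa here is our suggestion rather than something the tools above prescribe.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(labels_a: Sequence[Hashable], labels_b: Sequence[Hashable]) -> float:
    """Chance-corrected agreement between two raters over the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected if both raters labeled at random with their own label rates.
    expected = sum(
        counts_a[label] * counts_b[label] for label in set(counts_a) | set(counts_b)
    ) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical relevance labels (1 = relevant, 0 = not relevant) for eight answers.
human_labels = [1, 1, 0, 1, 0, 0, 1, 0]
judge_labels = [1, 1, 0, 0, 0, 1, 1, 0]

kappa = cohens_kappa(human_labels, judge_labels)
print(f"human vs. LLM judge kappa: {kappa:.2f}")
```

A low kappa on the sample is a signal to keep more human review in the loop or to revise the judge prompt before automating further.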

6. Are open-source relevance evaluation frameworks production-ready?

Yes. Frameworks such as Ragas, TruLens, DeepEval, and Haystack are increasingly used in production AI environments. However, enterprises may need additional governance, observability, and monitoring capabilities depending on operational complexity. Open-source flexibility often comes with increased operational responsibility. Infrastructure planning remains important for large deployments.

7. How do organizations choose the right evaluation toolkit?

Organizations should evaluate integration compatibility, metric coverage, scalability, governance capabilities, observability features, and workflow automation. Some tools are optimized for RAG evaluation, while others focus on traditional search ranking or ML observability. Pilot testing with real datasets and production-like workloads is essential. Long-term monitoring requirements should also influence selection.

8. What role does observability play in relevance evaluation?

Observability helps teams trace retrieval workflows, identify failures, monitor hallucinations, analyze embeddings, and understand ranking behavior. It improves debugging and supports continuous optimization. Modern AI systems increasingly require observability alongside evaluation metrics. Monitoring pipelines are especially important in production generative AI deployments.

9. What security features should enterprises prioritize?

Enterprises should prioritize RBAC, authentication controls, audit logging, experiment governance, encryption, and secure dataset management. AI evaluation systems may expose sensitive prompts, embeddings, or retrieval results if governance is weak. Security reviews should include evaluation datasets and monitoring workflows. Compliance validation should occur before production rollout.

10. Can relevance evaluation toolkits support multimodal AI systems?

Yes. Modern relevance evaluation systems increasingly support multimodal retrieval involving text, images, audio, and video embeddings. Evaluation methods continue evolving as multimodal AI systems become more common. Organizations should validate whether a toolkit supports the modalities used in their applications. Multimodal evaluation is more complex than text-only evaluation.

Conclusion

Relevance evaluation toolkits are becoming essential infrastructure for organizations building search systems, retrieval-augmented generation pipelines, semantic retrieval platforms, and AI-powered recommendation engines. The right platform depends on whether the organization prioritizes automated RAG evaluation, search ranking analysis, AI observability, experimentation workflows, or enterprise governance. Ragas, TruLens, and DeepEval are strong choices for modern generative AI and RAG evaluation workflows, while Elasticsearch Ranking Evaluation API and OpenSearch Ranking Evaluation are practical for enterprise search infrastructures. Arize Phoenix and Evidently AI provide strong observability capabilities, while PyTerrier remains valuable for information retrieval experimentation and benchmarking research. MLflow Evaluation supports broader AI lifecycle management and experiment governance. Organizations should shortlist several platforms, validate metrics using real retrieval datasets, test scalability and monitoring workflows, and confirm governance requirements before standardizing a long-term relevance evaluation strategy.