Introduction

Data Annotation Platforms help organizations label, classify, tag, segment, and organize datasets used for machine learning, artificial intelligence, computer vision, natural language processing, and generative AI applications. These platforms enable teams to create high-quality training data for AI models by combining automation, workflow management, human review systems, and quality control processes.

As AI adoption accelerates across industries, data annotation has become one of the most important stages in the machine learning lifecycle. AI systems are only as effective as the datasets used to train them, making accurate annotation critical for model quality, bias reduction, compliance, and production reliability. Modern annotation platforms now support multimodal AI workflows involving text, images, video, audio, documents, and 3D data.

Common real-world use cases include:

- Computer vision dataset labeling

- NLP and LLM training data preparation

- Autonomous vehicle perception training

- Medical imaging annotation

- Generative AI and Retrieval-Augmented Generation workflows

Key evaluation criteria for buyers include:

- Annotation accuracy and quality controls

- Automation and AI-assisted labeling

- Multi-format data support

- Workflow and review management

- Scalability and collaboration features

- Security and compliance capabilities

- Integration with ML pipelines

- Dataset versioning and governance

- Real-time collaboration support

- Cost and workforce management flexibility

Best for: AI teams, machine learning engineers, data scientists, autonomous systems teams, healthcare AI companies, enterprise AI programs, computer vision teams, and organizations building custom AI models.

Not ideal for: Organizations with minimal AI needs or static datasets that require little labeling, and teams that rely on fully pre-trained models without custom data workflows.

Key Trends in Data Annotation Platforms

- AI-assisted auto-labeling is reducing manual annotation workloads.

- Human-in-the-loop workflows are becoming standard for enterprise AI quality control.

- Multimodal annotation support is growing rapidly with generative AI adoption.

- Synthetic data generation is being integrated into annotation pipelines.

- Annotation governance and auditability are becoming more important in regulated industries.

- Foundation model fine-tuning is driving demand for large-scale text annotation.

- Real-time collaboration and distributed workforce management are improving scalability.

- Vector search and semantic labeling are becoming more common in AI pipelines.

- Data lineage and dataset versioning are now critical enterprise requirements.

- Privacy-aware annotation workflows are expanding in healthcare and finance sectors.

How We Selected These Tools

The platforms in this list were selected based on annotation capabilities, AI workflow support, scalability, automation features, ecosystem maturity, and enterprise adoption.

Evaluation factors included:

- Industry adoption and credibility

- Annotation quality and workflow depth

- Automation and AI-assisted labeling support

- Multi-format dataset compatibility

- Collaboration and workforce management

- Security and governance controls

- Integration with ML and MLOps systems

- Scalability and enterprise readiness

- Developer APIs and extensibility

- Support quality and documentation maturity

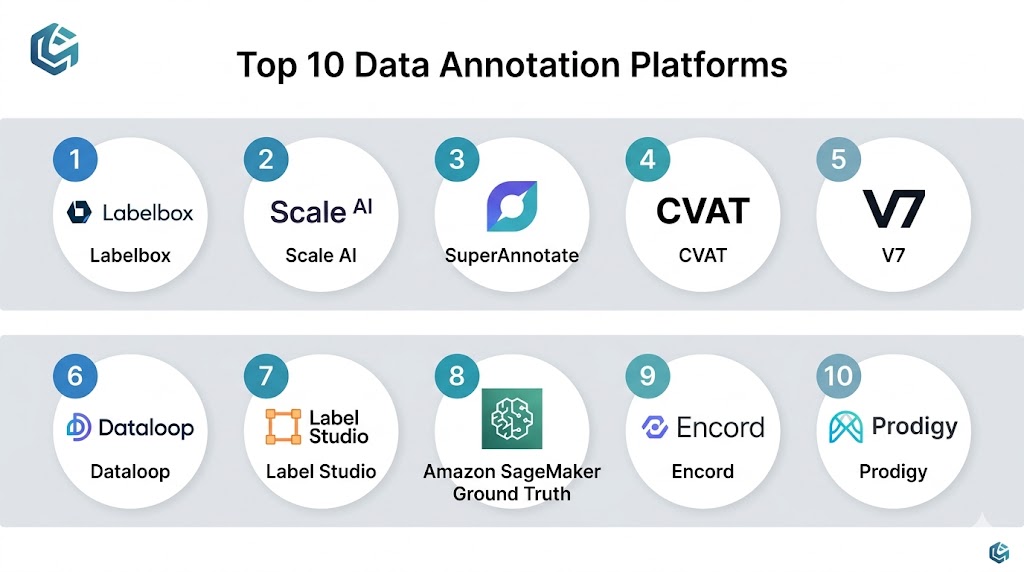

Top 10 Data Annotation Platforms

1- Labelbox

Short Description:

Labelbox is a widely used enterprise data annotation platform designed for AI training data management across image, video, text, audio, and multimodal workflows. It combines annotation tooling, workflow automation, quality management, and AI-assisted labeling capabilities. Labelbox is especially popular among enterprise AI and computer vision teams.

Key Features

- Multi-format annotation support

- AI-assisted labeling workflows

- Human review and QA pipelines

- Dataset versioning

- Collaboration management

- Model-assisted annotation

- Enterprise workflow orchestration

Pros

- Strong enterprise AI workflow support

- Good multimodal annotation capabilities

- Excellent collaboration features

- Strong automation functionality

Cons

- Enterprise pricing may be expensive

- Advanced workflows require configuration

- Smaller teams may find it complex

- Custom integrations may require engineering effort

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

SSO, RBAC, encryption, audit logging, and enterprise security controls.

Integrations & Ecosystem

Labelbox integrates with MLOps systems, cloud platforms, AI frameworks, and storage environments.

- AWS

- Azure

- Google Cloud

- Python SDKs

- ML frameworks

- Data lakes

Support & Community

Strong enterprise support, onboarding services, and an active AI development ecosystem.

2- Scale AI

Short Description:

Scale AI is an enterprise AI data platform focused on annotation, data labeling operations, model evaluation, and AI workflow management. It is heavily used in autonomous systems, generative AI, defense, and enterprise AI environments requiring large-scale annotation operations.

Key Features

- Enterprise annotation workflows

- Human-in-the-loop review systems

- AI-assisted labeling

- Multimodal dataset support

- Workforce management

- Model evaluation workflows

- Synthetic data support

Pros

- Strong enterprise-scale operations

- Good automation capabilities

- Broad AI industry adoption

- Suitable for large datasets

Cons

- Premium enterprise pricing

- May be too large-scale for smaller teams

- Some workflows require managed services

- Customization may depend on enterprise agreements

Platforms / Deployment

Cloud

Security & Compliance

SSO, RBAC, encryption, audit logging, and enterprise-grade governance controls.

Integrations & Ecosystem

Scale AI integrates with cloud storage, MLOps systems, AI pipelines, and enterprise infrastructure.

- AWS

- Google Cloud

- Azure

- APIs

- ML pipelines

- AI development platforms

Support & Community

Strong enterprise support and large-scale AI services ecosystem.

3- CVAT

Short Description:

CVAT is an open-source computer vision annotation platform designed for image and video labeling workflows. It is widely used for object detection, segmentation, tracking, and machine learning training data preparation in AI research and production environments.

Key Features

- Image and video annotation

- Object tracking workflows

- Segmentation tools

- Open-source extensibility

- Collaborative annotation support

- AI-assisted labeling

- Multiple export formats

Pros

- Strong computer vision support

- Open-source flexibility

- Good annotation tooling

- Active developer community

Cons

- Requires self-hosting expertise

- Enterprise governance features are limited

- UI customization may require development

- Support depends on deployment model

Platforms / Deployment

Self-hosted / Hybrid

Security & Compliance

Authentication integration and deployment-dependent security controls.

Integrations & Ecosystem

CVAT integrates with machine learning workflows, storage systems, and AI tooling environments.

- Python

- OpenCV

- TensorFlow

- PyTorch

- Cloud storage

- APIs

Support & Community

Strong open-source community and growing enterprise adoption.
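To make the "multiple export formats" point concrete, the sketch below builds a COCO-style object detection dictionary, one of the formats commonly used to move bounding-box labels into training code. It is a minimal illustration, not CVAT's exporter: the function name `to_coco`, the input tuple layout, and the file name are assumptions, and real exports usually carry extra fields such as segmentation masks and license info.

```python
import json


def to_coco(images, boxes, category_names):
    """Build a COCO-style detection dictionary (illustrative helper, not a CVAT API).

    images: list of dicts with "file_name", "width", "height"
    boxes: list of (file_name, label, x, y, width, height) tuples in pixels
    category_names: ordered list of label names
    """
    # COCO category ids conventionally start at 1.
    categories = [{"id": i, "name": name} for i, name in enumerate(category_names, start=1)]
    category_ids = {c["name"]: c["id"] for c in categories}

    coco_images = [
        {"id": i, "file_name": img["file_name"], "width": img["width"], "height": img["height"]}
        for i, img in enumerate(images, start=1)
    ]
    image_ids = {img["file_name"]: img["id"] for img in coco_images}

    annotations = []
    for i, (file_name, label, x, y, w, h) in enumerate(boxes, start=1):
        annotations.append({
            "id": i,
            "image_id": image_ids[file_name],
            "category_id": category_ids[label],
            "bbox": [x, y, w, h],  # COCO boxes are [x_min, y_min, width, height]
            "area": w * h,
            "iscrowd": 0,
        })
    return {"images": coco_images, "annotations": annotations, "categories": categories}


# Hypothetical single-frame example.
example = to_coco(
    images=[{"file_name": "frame_0001.jpg", "width": 1280, "height": 720}],
    boxes=[("frame_0001.jpg", "car", 100, 200, 50, 40)],
    category_names=["car", "pedestrian"],
)
print(json.dumps(example, indent=2))
```

When comparing tools, it is worth checking that an exported file round-trips through the training code without manual fixes.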

4- Supervisely

Short Description:

Supervisely is a collaborative annotation and MLOps platform designed for computer vision and AI training workflows. It combines annotation, automation, model training, visualization, and team collaboration capabilities within a unified environment.

Key Features

- Image and video annotation

- AI-assisted labeling

- Dataset management

- Workflow collaboration

- Visualization tools

- Model integration support

- Automation pipelines

Pros

- Strong visual annotation experience

- Good collaboration features

- Flexible deployment options

- Useful AI automation support

Cons

- Smaller ecosystem than major vendors

- Enterprise scaling may require planning

- Advanced customization may need expertise

- Some features require premium plans

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

RBAC, encryption, authentication support, and enterprise security options.

Integrations & Ecosystem

Supervisely integrates with AI frameworks, cloud platforms, and machine learning workflows.

- PyTorch

- TensorFlow

- AWS

- Azure

- APIs

- Computer vision pipelines

Support & Community

Growing AI developer community with enterprise onboarding support.

5- Dataloop

Short Description:

Dataloop is an AI data management and annotation platform focused on enterprise AI pipelines, workflow automation, and human-in-the-loop annotation operations. It supports multimodal data processing and large-scale AI training workflows.

Key Features

- Multimodal annotation workflows

- AI-assisted automation

- Workflow orchestration

- Data pipeline management

- Human review systems

- Model feedback loops

- Dataset versioning

Pros

- Strong workflow automation

- Good enterprise AI pipeline support

- Useful human review capabilities

- Broad data format compatibility

Cons

- Enterprise pricing complexity

- Smaller ecosystem compared to larger vendors

- Advanced setup may require planning

- Learning curve for large workflows

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

SSO, RBAC, encryption, audit logging, and enterprise governance support.

Integrations & Ecosystem

Dataloop integrates with AI systems, storage platforms, and machine learning workflows.

- AWS

- Azure

- APIs

- MLOps systems

- Python frameworks

- Data lakes

Support & Community

Strong enterprise onboarding and growing AI workflow ecosystem.

6- V7 Darwin

Short Description:

V7 Darwin is an AI training data platform focused heavily on medical imaging, computer vision, and high-accuracy annotation workflows. It combines automation, AI-assisted labeling, and quality review systems for enterprise AI teams.

Key Features

- Image and video annotation

- AI-assisted auto-labeling

- Medical imaging workflows

- Quality assurance systems

- Dataset management

- Workflow collaboration

- Model feedback support

Pros

- Excellent medical imaging support

- Strong annotation accuracy features

- Good AI automation workflows

- User-friendly interface

Cons

- Specialized focus may not fit all industries

- Enterprise pricing may be high

- Smaller ecosystem

- Some advanced workflows require training

Platforms / Deployment

Cloud

Security & Compliance

Encryption, RBAC, SSO, and enterprise governance controls.

Integrations & Ecosystem

V7 Darwin integrates with AI frameworks, cloud platforms, and healthcare imaging systems.

- DICOM systems

- AWS

- APIs

- AI frameworks

- Cloud storage

- ML pipelines

Support & Community

Strong support for healthcare and enterprise AI use cases.

7- Prodigy

Short Description:

Prodigy is a lightweight annotation tool designed for NLP, active learning, and machine learning workflows. It is widely used by data scientists and NLP engineers for custom text labeling and iterative model improvement.

Key Features

- NLP annotation workflows

- Active learning support

- Text classification tools

- Entity recognition labeling

- Python integration

- Model-assisted annotation

- Lightweight deployment

Pros

- Excellent NLP workflow support

- Strong developer experience

- Lightweight architecture

- Good active learning integration

Cons

- Limited multimodal support

- Smaller enterprise feature set

- Requires technical users

- Collaboration workflows are less advanced

Platforms / Deployment

Self-hosted / Hybrid

Security & Compliance

Varies / Not publicly stated

Integrations & Ecosystem

Prodigy integrates with NLP frameworks, Python tooling, and machine learning systems.

- spaCy

- Python

- NLP pipelines

- APIs

- ML frameworks

- Data science environments

Support & Community

Strong NLP-focused developer community and technical documentation.
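Active learning, mentioned above, generally means asking annotators to label the examples the current model is least sure about. The sketch below shows plain uncertainty sampling for a binary text classifier. It is a generic illustration rather than Prodigy's own code; the function name, the `(text, probability)` input shape, and the sample texts are assumptions.

```python
def select_for_annotation(scored_examples, batch_size):
    """Pick the examples whose predicted probability is closest to 0.5.

    scored_examples: list of (text, probability_of_positive_class) pairs,
    where the probability comes from whatever model is being improved.
    """
    # A probability near 0.5 means the model is least certain about the label.
    ranked = sorted(scored_examples, key=lambda example: abs(example[1] - 0.5))
    return [text for text, _ in ranked[:batch_size]]


# Hypothetical model scores for four unlabeled texts.
scored = [
    ("Refund arrived quickly", 0.90),
    ("It works, I guess", 0.52),
    ("Never buying again", 0.10),
    ("Delivery was fine but late", 0.45),
]
batch = select_for_annotation(scored, batch_size=2)
print(batch)
```

Labeling the uncertain examples first tends to improve a model with fewer annotations than labeling in random order, which is why the technique suits small technical teams.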

8- Amazon SageMaker Ground Truth

Short Description:

Amazon SageMaker Ground Truth is a managed annotation service designed for AI training data workflows in AWS environments. It combines human labeling, automation, and machine learning-assisted annotation capabilities.

Key Features

- Managed labeling workflows

- Human review support

- AI-assisted labeling

- Computer vision annotation

- Text annotation support

- Workforce management

- AWS-native integration

Pros

- Strong AWS ecosystem integration

- Managed infrastructure

- Scalable labeling workflows

- Good automation support

Cons

- Best suited for AWS-centric environments

- Vendor lock-in concerns

- Advanced workflows may require AWS expertise

- Costs vary by workload scale

Platforms / Deployment

Cloud

Security & Compliance

IAM integration, encryption, audit logging, RBAC, and AWS cloud security controls.

Integrations & Ecosystem

Ground Truth integrates with AWS AI, storage, analytics, and machine learning services.

- SageMaker

- S3

- Lambda

- AWS AI services

- APIs

- ML workflows

Support & Community

Strong AWS enterprise support and cloud documentation ecosystem.
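Ground Truth labeling jobs read their inputs from a manifest file in JSON Lines format, where each line points at one object to label. The sketch below writes such a manifest for images stored in S3. The bucket name and object keys are placeholders, and `build_manifest` is an illustrative helper rather than part of any AWS SDK.

```python
import json


def build_manifest(s3_uris):
    """Return manifest text with one JSON object per line.

    Each line uses the "source-ref" key to reference an object in S3.
    """
    lines = [json.dumps({"source-ref": uri}) for uri in s3_uris]
    return "\n".join(lines) + "\n"


# Placeholder bucket and keys; replace with real S3 locations.
manifest = build_manifest([
    "s3://example-bucket/images/0001.jpg",
    "s3://example-bucket/images/0002.jpg",
])
print(manifest, end="")
```

The resulting text would be uploaded to S3 and referenced when the labeling job is created.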

9- Label Studio

Short Description:

Label Studio is an open-source data labeling platform designed for text, image, audio, video, and multimodal AI workflows. It supports customizable annotation interfaces and flexible deployment options for AI teams.

Key Features

- Multi-format annotation support

- Custom labeling interfaces

- Open-source extensibility

- Human review workflows

- API integrations

- Data export flexibility

- AI-assisted labeling support

Pros

- Highly flexible annotation workflows

- Open-source customization

- Broad multimodal support

- Good developer usability

Cons

- Enterprise governance may require customization

- Self-hosting adds operational effort

- Scaling requires planning

- Advanced workflows may need engineering support

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Authentication integration, encryption support, and deployment-dependent security controls.

Integrations & Ecosystem

Label Studio integrates with cloud storage, AI frameworks, APIs, and machine learning pipelines.

- Hugging Face

- AWS

- APIs

- Python

- ML frameworks

- Cloud storage

Support & Community

Active open-source community and growing enterprise ecosystem.

10- Kili Technology

Short Description:

Kili Technology is a collaborative data annotation platform designed for enterprise AI workflows involving text, images, audio, and document labeling. It focuses on quality control, workflow automation, and annotation team management.

Key Features

- Multimodal annotation workflows

- Human review systems

- AI-assisted labeling

- Quality management tools

- Workflow collaboration

- Dataset governance

- Team productivity tracking

Pros

- Strong workflow collaboration

- Good annotation quality controls

- Broad data format support

- Useful enterprise governance features

Cons

- Smaller ecosystem than major vendors

- Enterprise scaling may require planning

- Some advanced integrations require customization

- Premium features may increase costs

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

SSO, RBAC, encryption, audit logging, and enterprise governance support.

Integrations & Ecosystem

Kili Technology integrates with AI pipelines, cloud storage, APIs, and enterprise machine learning workflows.

- AWS

- APIs

- Python

- ML frameworks

- Data lakes

- AI development systems

Support & Community

Strong onboarding resources and growing enterprise AI customer base.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Labelbox | Enterprise multimodal annotation | Web / Cloud | Cloud / Hybrid | AI-assisted labeling | N/A |
| Scale AI | Large-scale enterprise AI workflows | Web / Cloud | Cloud | Enterprise annotation operations | N/A |
| CVAT | Open-source computer vision labeling | Linux / Web | Self-hosted / Hybrid | Video annotation workflows | N/A |
| Supervisely | Collaborative vision workflows | Web / Cloud | Cloud / Self-hosted / Hybrid | Visual AI collaboration | N/A |
| Dataloop | AI workflow automation | Web / Cloud | Cloud / Hybrid | Human-in-the-loop pipelines | N/A |
| V7 Darwin | Medical imaging annotation | Web / Cloud | Cloud | Medical AI workflows | N/A |
| Prodigy | NLP annotation | Linux / Python | Self-hosted / Hybrid | Active learning support | N/A |
| SageMaker Ground Truth | AWS AI training pipelines | Cloud | Cloud | Managed labeling workflows | N/A |
| Label Studio | Open-source multimodal annotation | Web / Cloud | Cloud / Self-hosted / Hybrid | Flexible custom interfaces | N/A |
| Kili Technology | Enterprise collaborative annotation | Web / Cloud | Cloud / Hybrid | Quality management workflows | N/A |

Evaluation & Scoring of Data Annotation Platforms

| Tool Name | Core | Ease | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Labelbox | 9 | 8 | 9 | 9 | 8 | 9 | 7 | 8.5 |
| Scale AI | 9 | 7 | 8 | 9 | 9 | 9 | 6 | 8.2 |
| CVAT | 8 | 7 | 7 | 6 | 8 | 7 | 9 | 7.6 |
| Supervisely | 8 | 8 | 8 | 7 | 8 | 8 | 8 | 8.0 |
| Dataloop | 8 | 7 | 8 | 8 | 8 | 8 | 7 | 7.8 |
| V7 Darwin | 8 | 8 | 7 | 8 | 8 | 8 | 7 | 7.8 |
| Prodigy | 7 | 8 | 7 | 6 | 7 | 7 | 9 | 7.3 |
| SageMaker Ground Truth | 8 | 8 | 9 | 9 | 8 | 9 | 7 | 8.2 |
| Label Studio | 8 | 8 | 8 | 6 | 8 | 7 | 9 | 7.9 |
| Kili Technology | 8 | 8 | 7 | 8 | 8 | 8 | 7 | 7.8 |

These scores are comparative and should be interpreted as a practical buying guide rather than a universal ranking system. A higher score generally reflects stronger balance across annotation quality, usability, integrations, security, scalability, support, and operational value. The best platform depends heavily on dataset type, AI maturity, workforce scale, compliance needs, and workflow complexity.
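Because the right platform depends on each team's priorities, it can help to recompute a weighted total with weights of your own. The sketch below does that for one row of category scores. The weights are purely an assumption for illustration, since this guide does not publish the weighting behind its totals, so results will not necessarily match the table.

```python
# Hypothetical weights chosen for illustration only; adjust to your priorities.
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores, weights=WEIGHTS):
    """Return the weighted average of category scores, rounded to 2 decimals."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return round(sum(scores[name] * weight for name, weight in weights.items()), 2)


# Category scores copied from the Labelbox row of the table.
labelbox = {
    "core": 9, "ease": 8, "integrations": 9, "security": 9,
    "performance": 8, "support": 9, "value": 7,
}
print(weighted_total(labelbox))
```

A security-sensitive team might, for example, raise the security weight and lower the value weight before comparing shortlisted tools.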

Which Data Annotation Platform Is Right for You?

Solo / Freelancer

Individual AI developers and small teams often benefit from lightweight and flexible platforms such as Prodigy, Label Studio, and CVAT. These tools provide strong customization and lower entry costs while supporting NLP and computer vision workflows. However, they may require more operational effort and technical setup compared to fully managed enterprise platforms.

SMB

SMBs should prioritize ease of onboarding, automation support, and scalable collaboration workflows. Labelbox, Supervisely, and V7 Darwin are strong options because they balance usability, AI-assisted annotation, and workflow management without requiring massive enterprise infrastructure. Smaller teams should focus on annotation quality and operational simplicity.

Mid-Market

Mid-market organizations usually need governance, collaboration, workflow automation, and integration with ML pipelines. Labelbox, Dataloop, and SageMaker Ground Truth are strong choices for organizations scaling AI training operations across multiple teams and datasets. These platforms also support human review and dataset management workflows across teams.

Enterprise

Large enterprises should prioritize governance, security, workforce management, automation, and multimodal annotation support. Scale AI, Labelbox, SageMaker Ground Truth, and Dataloop are among the strongest enterprise-ready platforms for managing large-scale AI training operations. Regulated industries should also evaluate auditability and privacy controls carefully.

Budget vs Premium

Open-source tools such as CVAT and Label Studio reduce licensing costs but often require more operational management and customization. Enterprise platforms provide automation, governance, workforce scaling, and managed infrastructure but may significantly increase ownership costs at scale.

Feature Depth vs Ease of Use

Scale AI and Labelbox provide deep enterprise workflow capabilities, while Supervisely and V7 Darwin emphasize usability and collaborative annotation experiences. Prodigy is highly efficient for technical NLP users but less suitable for large non-technical annotation teams.

Integrations & Scalability

Organizations building AI pipelines should prioritize platforms with strong integration ecosystems and support for cloud storage, MLOps systems, vector databases, and machine learning frameworks. Platforms with scalable review workflows are especially important for enterprise AI quality control.

Security & Compliance Needs

Healthcare, finance, defense, and regulated industries should prioritize encryption, RBAC, audit logging, SSO, privacy-aware workflows, and governance support. Teams handling sensitive datasets should also review deployment flexibility and data residency requirements carefully.

Frequently Asked Questions

1. What is a Data Annotation Platform?

A Data Annotation Platform is a tool used to label, classify, segment, tag, or organize datasets for machine learning and AI model training. These platforms help create structured training data for computer vision, NLP, speech recognition, recommendation systems, and generative AI applications. High-quality annotations are critical for building accurate AI models.

2. Why is data annotation important for AI?

Machine learning models depend heavily on labeled training data to learn patterns and make predictions. Poor-quality annotations can reduce model accuracy, introduce bias, and create unreliable AI systems. Annotation platforms help organizations improve data consistency, workflow quality, and scalability.

3. Which industries use data annotation platforms the most?

Industries such as healthcare, autonomous vehicles, finance, retail, cybersecurity, manufacturing, defense, and customer support heavily use annotation platforms. AI adoption in these sectors often requires large amounts of labeled text, image, audio, or video data.

4. What types of data can annotation platforms handle?

Modern annotation platforms support images, video, text, audio, documents, 3D point clouds, medical imaging, and multimodal datasets. Some platforms specialize in computer vision, while others focus more heavily on NLP or enterprise AI workflows.

5. What is AI-assisted annotation?

AI-assisted annotation uses machine learning models to pre-label or suggest annotations automatically. Human reviewers then validate or correct the outputs, significantly reducing manual effort and improving productivity. This approach is increasingly common in enterprise AI pipelines.
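The pre-label and review loop described above can be sketched as a simple routing step. In this variant, high-confidence model suggestions go to a lighter spot-check queue and the rest go to full human review. The `PreLabel` class, the queue names, and the 0.95 threshold are assumptions for illustration, not any vendor's API.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model score in [0, 1]


def route_prelabels(prelabels, spot_check_threshold=0.95):
    """Split model suggestions into a spot-check queue and a full-review queue."""
    spot_check, full_review = [], []
    for prelabel in prelabels:
        if prelabel.confidence >= spot_check_threshold:
            spot_check.append(prelabel)
        else:
            full_review.append(prelabel)
    # Show reviewers the least certain suggestions first.
    full_review.sort(key=lambda p: p.confidence)
    return spot_check, full_review


# Hypothetical model output for three images.
suggestions = [
    PreLabel("img-1", "cat", 0.99),
    PreLabel("img-2", "dog", 0.61),
    PreLabel("img-3", "cat", 0.40),
]
spot_check, full_review = route_prelabels(suggestions)
print([p.item_id for p in spot_check], [p.item_id for p in full_review])
```

The threshold trades reviewer time against the risk of accepting wrong labels, so it is best tuned on a sample that humans have already verified.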

6. Are open-source annotation tools enterprise-ready?

Yes, tools such as CVAT and Label Studio are widely used in production environments. However, enterprises should evaluate operational complexity, security controls, governance capabilities, and support models before standardizing on open-source platforms.

7. What security features should buyers prioritize?

Organizations should evaluate RBAC, SSO, encryption, audit logging, dataset governance, privacy workflows, and secure collaboration features. Regulated industries should also assess deployment flexibility, compliance readiness, and access control management.

8. Can annotation platforms support generative AI workflows?

Yes, annotation platforms increasingly support generative AI and Retrieval-Augmented Generation systems. They are used for text classification, prompt-response labeling, ranking tasks, semantic annotation, and fine-tuning datasets for foundation models.

9. What are the biggest implementation challenges?

Common challenges include annotation quality consistency, workforce management, scaling review workflows, maintaining dataset versioning, reducing bias, and integrating annotation systems into ML pipelines. Poor governance and weak quality assurance can significantly reduce model reliability.
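One common way to put a number on annotation quality consistency is inter-annotator agreement. The sketch below computes Cohen's kappa for two annotators who labeled the same items. The function name and sample labels are illustrative, and kappa is only one of several agreement measures a team might choose.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set of items")
    n = len(labels_a)

    # Observed agreement: share of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Expected agreement by chance, from each annotator's label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    all_labels = counts_a.keys() | counts_b.keys()
    expected = sum(counts_a[label] * counts_b[label] for label in all_labels) / (n * n)

    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical labels from two annotators on four images.
annotator_1 = ["cat", "cat", "dog", "dog"]
annotator_2 = ["cat", "dog", "dog", "dog"]
kappa = cohens_kappa(annotator_1, annotator_2)
print(round(kappa, 3))
```

A kappa of 1 means perfect agreement and 0 means agreement no better than chance. Low values often point to unclear labeling guidelines rather than careless annotators.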

10. How should organizations evaluate annotation platforms?

Teams should run pilot projects using real datasets and realistic annotation workflows. Buyers should validate usability, annotation quality controls, automation support, scalability, integrations, governance features, and operational costs before selecting a platform.

Conclusion

Data Annotation Platforms have become foundational infrastructure for modern AI, machine learning, computer vision, NLP, and generative AI systems. As organizations increasingly rely on AI-powered applications, the quality, governance, and scalability of annotation workflows directly impact model performance and operational trust. Labelbox and Scale AI remain among the strongest enterprise platforms for large-scale multimodal AI training operations, while CVAT and Label Studio continue to provide flexible open-source alternatives for technical teams. Platforms such as Supervisely, Dataloop, and V7 Darwin focus heavily on collaborative workflows, automation, and specialized AI domains such as medical imaging. The right annotation platform ultimately depends on dataset complexity, AI maturity, workforce size, governance requirements, security expectations, and budget priorities. Organizations should shortlist multiple platforms, run pilot annotation workflows, evaluate automation quality and review systems, validate integrations with ML pipelines, and choose the solution that best fits long-term AI development goals.