Introduction

Model Explainability Tools help organizations understand, interpret, monitor, and explain how machine learning and AI models make decisions. These tools provide visibility into model behavior, feature importance, prediction drivers, bias risks, performance drift, and decision logic. In simple terms, they help teams answer an important question: why did the model produce this output?

As AI systems become more common in finance, healthcare, insurance, hiring, cybersecurity, customer support, and enterprise automation, explainability is increasingly treated as a requirement rather than a nice-to-have. Businesses need transparent models to support trust, governance, compliance, debugging, and responsible AI practices. Model explainability tools are especially important when AI decisions affect customers, employees, risk scores, credit approvals, fraud alerts, medical insights, or operational workflows.

Common real-world use cases include:

- Explaining individual AI predictions

- Detecting biased or unfair model behavior

- Monitoring model drift and performance changes

- Supporting responsible AI governance

- Debugging black-box machine learning models

Key evaluation criteria for buyers include:

- Local and global explanation capabilities

- Bias and fairness analysis

- Model monitoring and drift detection

- Support for different model types

- Integration with MLOps workflows

- Visualization and reporting quality

- Security and governance controls

- Scalability for enterprise AI systems

- Ease of use for technical and business users

- Support for regulatory and audit workflows

Best for: Data scientists, machine learning engineers, AI governance teams, risk teams, compliance leaders, enterprise AI teams, product teams, and regulated organizations using AI in high-impact decision-making.

Not ideal for: Teams using only simple rule-based automation, small experiments with no production models, or organizations that do not require AI monitoring, governance, or decision transparency.

Key Trends in Model Explainability Tools

- Explainability is becoming a core requirement in enterprise AI governance.

- Generative AI systems are increasing demand for transparency and evaluation workflows.

- Bias, fairness, and responsible AI checks are becoming standard in model review processes.

- Model monitoring platforms are combining explainability with drift, performance, and data quality insights.

- Local explanations are becoming important for customer-facing AI decisions.

- Global model behavior analysis is helping teams understand feature influence at scale.

- Explainability dashboards are becoming more business-friendly and less technical.

- MLOps platforms are embedding explainability directly into deployment workflows.

- Regulated industries are prioritizing audit trails, model documentation, and approval workflows.

- Open-source explainability libraries remain important for research, experimentation, and custom AI systems.

How We Selected These Tools

The tools in this list were selected based on explainability depth, enterprise adoption, usability, integration maturity, governance support, and relevance for modern AI workflows.

Evaluation factors included:

- Model explanation capabilities

- Support for local and global interpretability

- Bias and fairness analysis features

- Model monitoring and drift detection

- Integration with ML and MLOps ecosystems

- Visualization and reporting quality

- Security and governance controls

- Open-source or enterprise flexibility

- Scalability for production AI systems

- Support quality and community maturity

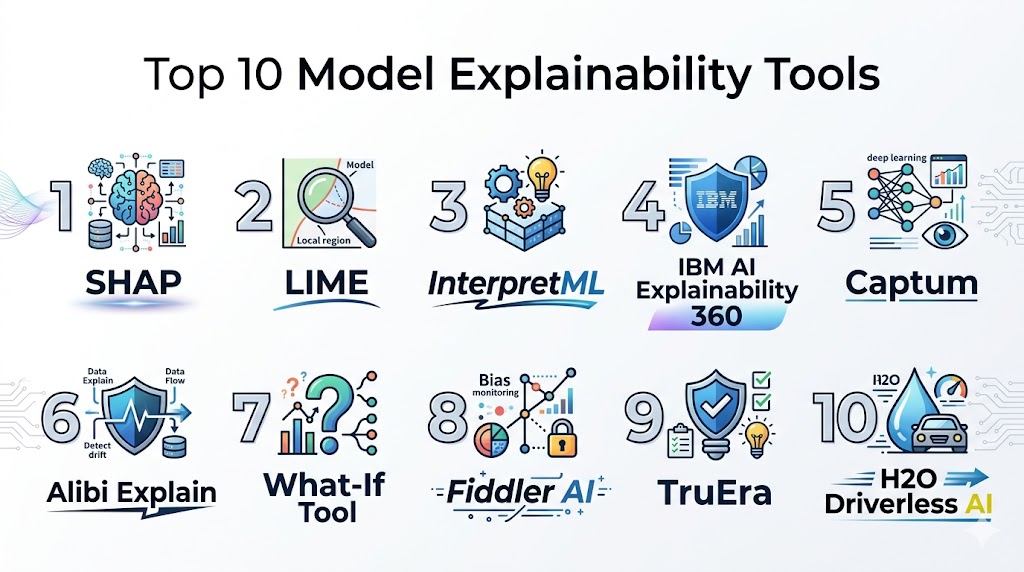

Top 10 Model Explainability Tools

1- SHAP

Short Description:

SHAP (SHapley Additive exPlanations) is one of the most widely used open-source model explainability tools for understanding feature contribution in machine learning predictions. It attributes each prediction to the input features using Shapley values from cooperative game theory, which lets data scientists explain both individual predictions and overall model behavior. SHAP is especially popular in research, data science, and enterprise AI validation workflows.
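To make the idea of feature attribution concrete, the sketch below computes exact Shapley values for a tiny scoring function in plain Python. It does not use the SHAP library. The function `toy_credit_model`, the feature order (income, debt, age), and the baseline values are assumptions made up for illustration. Exact enumeration is only feasible for a handful of features, which is one reason SHAP relies on faster approximations and model-specific algorithms.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one prediction.

    Features outside a coalition are replaced by their baseline value.
    """
    n = len(instance)
    values = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):  # coalition sizes 0 .. n-1
            for coalition in combinations(others, size):
                weight = factorial(size) * factorial(n - size - 1) / factorial(n)
                with_i = [
                    instance[j] if (j in coalition or j == i) else baseline[j]
                    for j in range(n)
                ]
                without_i = [
                    instance[j] if j in coalition else baseline[j]
                    for j in range(n)
                ]
                phi += weight * (predict(with_i) - predict(without_i))
        values.append(phi)
    return values


def toy_credit_model(x):
    # Hypothetical model: two linear terms plus one interaction term.
    income, debt, age = x
    return 0.5 * income - 0.8 * debt + 0.1 * income * age


instance = [4.0, 2.0, 3.0]   # hypothetical applicant
baseline = [1.0, 1.0, 1.0]   # hypothetical reference point
phi = shapley_values(toy_credit_model, instance, baseline)

for name, value in zip(["income", "debt", "age"], phi):
    print(f"{name}: {value:+.3f}")
```

The attributions sum to the difference between the prediction for the instance and the prediction for the baseline, which is the property that makes Shapley-based explanations additive.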

Key Features

- Local prediction explanations

- Global feature importance analysis

- Support for multiple model types

- Visualization plots for interpretability

- Feature attribution scoring

- Works with tabular, text, and image models

- Strong Python ecosystem support

Pros

- Highly trusted in data science workflows

- Strong open-source adoption

- Flexible across many model types

- Excellent visualization support

Cons

- Can be computationally expensive

- Requires technical expertise

- Not a full governance platform

- Performance may vary on large datasets

Platforms / Deployment

Self-hosted / Hybrid

Security & Compliance

Varies / Not publicly stated

Integrations & Ecosystem

SHAP integrates well with Python-based machine learning workflows, notebooks, and model development environments. It is commonly used during experimentation, validation, and model review.

- Python

- scikit-learn

- XGBoost

- TensorFlow

- PyTorch

- Jupyter notebooks

Support & Community

Very strong open-source community, extensive examples, research adoption, and broad data science usage.

2- LIME

Short Description:

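LIME (Local Interpretable Model-agnostic Explanations) is an open-source explainability tool designed to explain individual predictions from machine learning models. It works by perturbing the input around a specific prediction and fitting a simple, interpretable surrogate model (typically a sparse linear model) to the complex model's outputs on those perturbed samples. LIME is useful for teams that need lightweight, model-agnostic explanations during model development and validation.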
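The local-approximation idea can be sketched in plain Python: sample points near the instance, weight them by proximity, and fit a weighted linear model whose coefficients act as the explanation. This is a simplified illustration, not the `lime` package's implementation. The function names, the Gaussian sampling, the kernel form, and the default parameters are all assumptions chosen for brevity.

```python
import math
import random


def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def local_surrogate(predict, instance, n_samples=500, scale=0.5,
                    kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear model around `instance`.

    Returns (intercept, coefficients); the coefficients are the local
    feature effects of the black-box `predict` function.
    """
    rng = random.Random(seed)
    d = len(instance)
    # Accumulate the weighted normal equations (X^T W X) beta = X^T W y.
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        sample = [v + rng.gauss(0.0, scale) for v in instance]
        dist2 = sum((s - v) ** 2 for s, v in zip(sample, instance))
        weight = math.exp(-dist2 / kernel_width ** 2)  # closer points count more
        row = [1.0] + sample
        y = predict(sample)
        for i in range(d + 1):
            xtwy[i] += weight * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += weight * row[i] * row[j]
    beta = solve_linear_system(xtwx, xtwy)
    return beta[0], beta[1:]


def black_box(x):
    # Hypothetical nonlinear model standing in for a trained classifier.
    return x[0] ** 2 + 3.0 * x[1]


intercept, coefficients = local_surrogate(black_box, [2.0, 1.0])
print("local feature effects:", [round(c, 2) for c in coefficients])
```

Because the surrogate is refit from random samples, changing the seed, sample count, or kernel width can change the coefficients, which is the instability noted in the cons below.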

Key Features

- Local model explanations

- Model-agnostic interpretability

- Tabular, text, and image support

- Feature contribution analysis

- Lightweight implementation

- Useful for black-box models

- Python-based workflow support

Pros

- Easy to understand conceptually

- Works with many model types

- Useful for quick experimentation

- Strong educational and research value

Cons

- Local approximations may be unstable

- Less comprehensive than full monitoring tools

- Requires technical setup

- Not designed for enterprise governance alone

Platforms / Deployment

Self-hosted / Hybrid

Security & Compliance

Varies / Not publicly stated

Integrations & Ecosystem

LIME integrates with Python machine learning workflows and can be used alongside notebooks, custom pipelines, and model validation processes.

- Python

- scikit-learn

- TensorFlow

- PyTorch

- Jupyter notebooks

- Custom ML pipelines

Support & Community

Strong academic and open-source community with wide adoption in explainability learning and experimentation.

3- IBM Watson OpenScale

Short Description:

IBM Watson OpenScale is an enterprise AI governance and model monitoring platform designed to help organizations track model performance, explain predictions, detect bias, and manage AI risk. It is well suited for enterprises that need explainability, fairness, and governance across production AI systems.

Key Features

- Model explainability workflows

- Bias and fairness monitoring

- Drift detection

- Model performance tracking

- AI governance support

- Enterprise dashboards

- Multi-model monitoring

Pros

- Strong enterprise governance capabilities

- Good bias and fairness analysis

- Suitable for regulated industries

- Supports production AI monitoring

Cons

- Enterprise setup can be complex

- Best fit for larger organizations

- May require IBM ecosystem familiarity

- Pricing and implementation may be heavy for small teams

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

SSO, RBAC, encryption, audit logging, and enterprise governance support.

Integrations & Ecosystem

IBM Watson OpenScale integrates with machine learning platforms, enterprise AI systems, and governance workflows.

- IBM Cloud Pak for Data

- Python models

- Machine learning platforms

- Enterprise dashboards

- Data governance systems

- Cloud AI workflows

Support & Community

Strong enterprise support, professional services, documentation, and governance-focused implementation resources.

4- Fiddler AI

Short Description:

Fiddler AI is an enterprise model monitoring and explainability platform focused on responsible AI, model transparency, drift detection, and performance observability. It helps teams understand model behavior in production and provides explanation workflows for business-critical AI decisions.

Key Features

- Model explainability dashboards

- Performance monitoring

- Bias and fairness analysis

- Data drift detection

- Prediction-level explanations

- Responsible AI governance

- Enterprise reporting workflows

Pros

- Strong production AI observability

- Good explainability and monitoring combination

- Business-friendly dashboards

- Useful for responsible AI programs

Cons

- Enterprise-focused pricing

- May be excessive for small experiments

- Requires integration planning

- Advanced workflows may need onboarding support

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

SSO, RBAC, encryption, audit logging, and enterprise security controls.

Integrations & Ecosystem

Fiddler AI integrates with model deployment systems, data platforms, and enterprise AI workflows.

- Python ML workflows

- Cloud platforms

- MLOps pipelines

- Data warehouses

- APIs

- Enterprise AI systems

Support & Community

Strong enterprise support, responsible AI resources, and onboarding assistance.

5- Arize AI

Short Description:

Arize AI is an ML observability platform that includes explainability, drift detection, performance monitoring, tracing, and evaluation features for production AI systems. It is widely used by teams managing machine learning and generative AI applications at scale.

Key Features

- Model performance monitoring

- Feature drift detection

- Explainability workflows

- Model tracing and debugging

- LLM evaluation support

- Data quality monitoring

- Production AI observability

Pros

- Strong ML observability capabilities

- Good production debugging workflows

- Useful for both ML and LLM systems

- Strong visualization and monitoring

Cons

- May require mature MLOps workflows

- Enterprise pricing can increase at scale

- Explainability is part of broader observability

- Setup requires pipeline integration

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

SSO, RBAC, encryption, audit logging, and enterprise governance support.

Integrations & Ecosystem

Arize AI integrates with MLOps systems, data platforms, AI frameworks, and production deployment environments.

- Python

- MLflow

- Kubernetes

- Cloud platforms

- LLM frameworks

- Data warehouses

Support & Community

Strong enterprise support, technical documentation, and growing AI observability community.

6- WhyLabs

Short Description:

WhyLabs is an AI observability and monitoring platform focused on detecting model drift, data quality issues, performance degradation, and production model risks. It helps teams understand why models change over time and supports explainability workflows through monitoring and diagnostics.

Key Features

- Model monitoring

- Data drift detection

- Data quality profiling

- Performance diagnostics

- AI observability dashboards

- Alerting workflows

- Production model health tracking

Pros

- Strong data and model monitoring

- Useful for production AI reliability

- Good alerting and diagnostics

- Supports scalable AI operations

Cons

- Less focused on standalone explanation methods

- Requires production data integration

- May be too advanced for early-stage teams

- Governance depth depends on setup

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

RBAC, encryption, authentication integration, and enterprise governance support.

Integrations & Ecosystem

WhyLabs integrates with AI pipelines, cloud platforms, model deployment systems, and monitoring workflows.

- Python

- Kubernetes

- ML pipelines

- Cloud infrastructure

- APIs

- Data platforms

Support & Community

Strong technical documentation, enterprise support, and active AI observability ecosystem.

7- Aporia

Short Description:

Aporia is an AI control and observability platform designed for monitoring, explaining, and governing machine learning and AI systems. It supports model monitoring, explainability, anomaly detection, and operational controls for production AI environments.

Key Features

- Model monitoring dashboards

- Explainability workflows

- Data drift detection

- Anomaly detection

- AI governance controls

- Performance tracking

- Production alerting

Pros

- Strong production model monitoring

- Good governance-focused features

- Useful for AI risk management

- Supports business and technical users

Cons

- Enterprise-focused platform

- Requires integration with production systems

- May be more than small teams need

- Advanced setup may require support

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

SSO, RBAC, encryption, audit logging, and enterprise-grade access controls.

Integrations & Ecosystem

Aporia integrates with machine learning workflows, data systems, cloud environments, and production AI infrastructure.

- Python

- APIs

- Cloud platforms

- MLOps systems

- Data warehouses

- Model deployment tools

Support & Community

Enterprise support, onboarding assistance, and AI monitoring documentation.

8- TruEra

Short Description:

TruEra is an AI quality, explainability, and model intelligence platform designed to help teams evaluate, debug, monitor, and improve machine learning models. It focuses on model quality, fairness, drift, and explainability across development and production workflows.

Key Features

- Model explainability

- Bias and fairness analysis

- Model quality diagnostics

- Drift monitoring

- Root cause analysis

- AI governance support

- Model debugging workflows

Pros

- Strong model quality focus

- Useful for regulated AI teams

- Good fairness and explainability features

- Supports development and production workflows

Cons

- Enterprise-oriented pricing

- Requires mature ML processes

- Advanced workflows may need training

- Less suitable for lightweight experimentation

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

SSO, RBAC, encryption, audit logging, and enterprise governance controls.

Integrations & Ecosystem

TruEra integrates with machine learning platforms, data science workflows, and enterprise AI systems.

- Python

- ML pipelines

- Cloud platforms

- Data science notebooks

- APIs

- Enterprise AI workflows

Support & Community

Strong enterprise support, AI quality expertise, and model risk management resources.

9- Microsoft Responsible AI Toolbox

Short Description:

Microsoft Responsible AI Toolbox is an open-source toolkit designed to help data scientists evaluate fairness, interpret models, analyze errors, and improve responsible AI workflows. It combines multiple explainability and diagnostic tools into a practical development environment.

Key Features

- Model interpretability

- Error analysis

- Fairness assessment

- Counterfactual explanations

- Responsible AI dashboards

- Python support

- Integration with ML workflows

Pros

- Strong responsible AI feature set

- Open-source flexibility

- Useful for model debugging

- Good visualization capabilities

Cons

- Requires technical users

- Not a full enterprise governance platform

- Deployment support depends on team setup

- Best suited for development and evaluation workflows

Platforms / Deployment

Self-hosted / Hybrid

Security & Compliance

Varies / Not publicly stated

Integrations & Ecosystem

Microsoft Responsible AI Toolbox works with Python ML workflows, notebooks, and responsible AI development processes.

- Python

- scikit-learn

- Azure ML

- Jupyter notebooks

- Model evaluation workflows

- Data science pipelines

Support & Community

Strong open-source community, Microsoft ecosystem support, and responsible AI documentation.

10- Evidently AI

Short Description:

Evidently AI is an open-source and commercial AI monitoring platform that helps teams evaluate model quality, data drift, performance, and explainability signals. It is popular among data scientists and MLOps teams that need practical reporting and monitoring for production models.

Key Features

- Model monitoring reports

- Data drift detection

- Performance tracking

- Data quality checks

- Explainability diagnostics

- Batch and production monitoring

- Open-source workflow support

Pros

- Strong open-source value

- Easy reporting workflows

- Good for MLOps teams

- Useful for drift and model quality checks

Cons

- Enterprise governance may require paid options

- Less focused on deep explanation methods alone

- Requires technical setup

- Advanced production monitoring needs planning

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

Authentication, encryption, RBAC, and governance options vary by deployment and plan.

Integrations & Ecosystem

Evidently AI integrates with machine learning workflows, notebooks, pipelines, and monitoring systems.

- Python

- MLflow

- Airflow

- Prefect

- Jupyter notebooks

- Data platforms

Support & Community

Active open-source community, documentation, and commercial support options.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| SHAP | Feature attribution analysis | Python / Linux / Cloud | Self-hosted / Hybrid | Local and global explanations | N/A |
| LIME | Lightweight local explanations | Python / Linux | Self-hosted / Hybrid | Model-agnostic explanations | N/A |
| IBM Watson OpenScale | Enterprise AI governance | Web / Cloud | Cloud / Hybrid | Bias and explainability monitoring | N/A |
| Fiddler AI | Responsible AI observability | Web / Cloud | Cloud / Hybrid | Production explainability dashboards | N/A |
| Arize AI | ML observability | Web / Cloud | Cloud / Hybrid | Drift and model debugging | N/A |
| WhyLabs | AI reliability monitoring | Web / Cloud | Cloud / Self-hosted / Hybrid | Data and model health monitoring | N/A |
| Aporia | AI control and monitoring | Web / Cloud | Cloud / Hybrid | AI governance controls | N/A |
| TruEra | Model quality and fairness | Web / Cloud | Cloud / Hybrid | Model diagnostics and fairness | N/A |
| Microsoft Responsible AI Toolbox | Responsible AI development | Python / Cloud | Self-hosted / Hybrid | Fairness and error analysis | N/A |
| Evidently AI | Open-source model monitoring | Python / Web / Cloud | Cloud / Self-hosted / Hybrid | Drift and model quality reports | N/A |

Evaluation & Scoring of Model Explainability Tools

| Tool Name | Core | Ease | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| SHAP | 9 | 7 | 8 | 5 | 7 | 8 | 10 | 7.9 |
| LIME | 7 | 8 | 7 | 5 | 7 | 7 | 10 | 7.2 |
| IBM Watson OpenScale | 9 | 7 | 8 | 9 | 8 | 9 | 6 | 8.0 |
| Fiddler AI | 9 | 8 | 8 | 9 | 8 | 9 | 7 | 8.3 |
| Arize AI | 8 | 8 | 9 | 9 | 9 | 9 | 7 | 8.4 |
| WhyLabs | 8 | 8 | 8 | 8 | 9 | 8 | 8 | 8.2 |
| Aporia | 8 | 8 | 8 | 9 | 8 | 8 | 7 | 8.0 |
| TruEra | 9 | 7 | 8 | 9 | 8 | 8 | 6 | 7.9 |
| Microsoft Responsible AI Toolbox | 8 | 7 | 8 | 5 | 7 | 8 | 10 | 7.7 |
| Evidently AI | 8 | 8 | 8 | 7 | 8 | 8 | 9 | 8.0 |

These scores are comparative and should be used as a practical evaluation guide rather than an absolute ranking. A higher score usually indicates stronger balance across explainability depth, usability, integrations, security, monitoring, support, and value. The right choice depends on whether your team needs research-level interpretability, production monitoring, AI governance, fairness analysis, or enterprise reporting.
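Teams that want to re-weight the criteria for their own priorities could compute a weighted total along the following lines. The weights used for the table above are not stated in this article, so `HYPOTHETICAL_WEIGHTS` and the example scores are assumptions for illustration only and will not necessarily reproduce the published totals.

```python
# Hypothetical weights; they must sum to 1.0. Adjust to your own priorities.
HYPOTHETICAL_WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores, weights):
    """Return the weighted sum of per-criterion scores, rounded to 2 places."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return round(sum(scores[name] * weights[name] for name in weights), 2)


# Scores for a made-up tool on the same 1-10 scale as the table.
example_scores = {
    "core": 8,
    "ease": 6,
    "integrations": 7,
    "security": 9,
    "performance": 8,
    "support": 7,
    "value": 5,
}

total = weighted_total(example_scores, HYPOTHETICAL_WEIGHTS)
print(total)
```

A regulated enterprise might raise the security weight and lower the value weight, which can change the ordering of otherwise close tools.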

Which Model Explainability Tool Is Right for You?

Solo / Freelancer

Solo developers and independent data scientists usually benefit most from SHAP, LIME, Microsoft Responsible AI Toolbox, and Evidently AI. These tools provide strong explainability and diagnostic capabilities without requiring heavy enterprise infrastructure. They are especially useful for experiments, proofs of concept, and model validation workflows.

SMB

SMBs should focus on ease of use, practical monitoring, and low operational complexity. Evidently AI, WhyLabs, and Arize AI are strong options for teams that need model quality checks, drift detection, and explainability without building everything from scratch. SHAP can also be used alongside these tools for deeper technical analysis.

Mid-Market

Mid-market organizations usually need a mix of explainability, monitoring, collaboration, and model governance. Arize AI, Fiddler AI, WhyLabs, Aporia, and Evidently AI are practical choices for teams managing multiple production models. These platforms help bridge the gap between data science teams and business stakeholders.

Enterprise

Large enterprises should prioritize governance, auditability, security, fairness, bias monitoring, and production-scale AI oversight. IBM Watson OpenScale, Fiddler AI, TruEra, Arize AI, and Aporia are strong enterprise-ready options. Regulated organizations should evaluate reporting, access controls, audit trails, and integration with AI governance processes.

Budget vs Premium

Open-source tools such as SHAP, LIME, Microsoft Responsible AI Toolbox, and Evidently AI provide excellent value for technical teams. Premium platforms provide stronger governance, dashboards, support, security, and production monitoring, but they increase total ownership cost. Budget-sensitive teams can start open-source and move to enterprise tools when governance needs mature.

Feature Depth vs Ease of Use

SHAP and LIME provide deep technical explanations but require data science expertise. Fiddler AI, Arize AI, WhyLabs, and Aporia provide more accessible dashboards and production workflows. Microsoft Responsible AI Toolbox is strong for development-stage analysis, while IBM Watson OpenScale is more suitable for enterprise governance.

Integrations & Scalability

Teams running production models should prioritize tools that integrate with MLOps pipelines, cloud platforms, data warehouses, APIs, experiment tracking systems, and deployment environments. Arize AI, WhyLabs, Fiddler AI, Aporia, and Evidently AI are especially relevant for scalable monitoring and observability workflows.

Security & Compliance Needs

Regulated industries should evaluate RBAC, SSO, encryption, audit logs, access controls, reporting workflows, bias monitoring, and model documentation capabilities. Explainability tools should support both technical debugging and business-level accountability for AI decisions.

Frequently Asked Questions

1. What are Model Explainability Tools?

Model Explainability Tools help teams understand how AI and machine learning models make decisions. They show which features influenced predictions, how models behave across datasets, and where risks such as bias, drift, or instability may appear. These tools are important for trust, debugging, governance, and compliance.

2. Why is model explainability important?

Explainability helps organizations understand whether AI models are making decisions fairly, accurately, and reliably. It supports debugging, model improvement, compliance reviews, and stakeholder trust. Without explainability, teams may struggle to detect hidden risks in black-box models.

3. What is the difference between local and global explainability?

Local explainability explains one specific prediction, such as why a customer was flagged as high risk. Global explainability explains overall model behavior across many predictions, such as which features generally influence outcomes the most. Both are important for responsible AI workflows.
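One common way to connect the two views is to aggregate local attributions into a global ranking, for example by averaging their absolute values per feature. The sketch below assumes the per-prediction attributions have already been computed by some method; the feature names and numbers are made up for illustration.

```python
def global_importance(local_attributions, feature_names):
    """Rank features by mean absolute local attribution."""
    n_rows = len(local_attributions)
    totals = [0.0] * len(feature_names)
    for row in local_attributions:
        for i, value in enumerate(row):
            totals[i] += abs(value)
    means = {name: total / n_rows for name, total in zip(feature_names, totals)}
    # Sort from most to least influential.
    return dict(sorted(means.items(), key=lambda item: item[1], reverse=True))


feature_names = ["income", "debt", "age"]

# Each row is the local explanation for one prediction (hypothetical values).
local_attributions = [
    [0.30, -0.50, 0.05],
    [-0.10, -0.40, 0.10],
    [0.20, 0.60, -0.05],
]

ranking = global_importance(local_attributions, feature_names)
for name, score in ranking.items():
    print(f"{name}: {score:.3f}")
```

Taking absolute values matters here: a feature that pushes some predictions up and others down would otherwise cancel out and look unimportant globally.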

4. Which tools are best for technical data science teams?

SHAP, LIME, Microsoft Responsible AI Toolbox, and Evidently AI are strong choices for technical data science teams. They provide flexible explainability, diagnostics, and reporting capabilities. These tools are especially useful during experimentation, validation, and model debugging.

5. Which tools are best for enterprise AI governance?

IBM Watson OpenScale, Fiddler AI, TruEra, Arize AI, and Aporia are strong options for enterprise governance workflows. They provide dashboards, monitoring, bias detection, audit support, and production AI visibility. Enterprises should also evaluate security and compliance capabilities.

6. Can explainability tools detect bias?

Many explainability and responsible AI tools can help detect bias by analyzing model behavior across sensitive or business-critical groups. However, bias detection also depends on dataset quality, proper fairness metrics, and clear governance policies. Tools support the process, but teams must define fairness expectations carefully.
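As a minimal sketch of what such a check can look like, the code below compares the rate of positive predictions across two groups using the demographic parity difference and the disparate impact ratio. The group labels and predictions are made up, and these two metrics are only examples; which fairness metric is appropriate depends on the use case and governance policy.

```python
def selection_rates(predictions, groups):
    """Share of positive (1) predictions per group."""
    counts, positives = {}, {}
    for pred, group in zip(predictions, groups):
        counts[group] = counts.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if pred == 1 else 0)
    return {group: positives[group] / counts[group] for group in counts}


def demographic_parity_difference(rates):
    """Gap between the highest and lowest selection rate (0 means parity)."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group receives positive predictions
    return min(rates.values()) / highest


# Hypothetical model outputs: 1 = approved, 0 = declined.
predictions = [1, 1, 1, 0, 1, 0, 0, 0, 1, 0]
groups = ["A"] * 5 + ["B"] * 5

rates = selection_rates(predictions, groups)
gap = demographic_parity_difference(rates)
ratio = disparate_impact_ratio(rates)
print(rates, round(gap, 2), round(ratio, 2))
```

A large gap or a low ratio is a signal to investigate, not proof of unfairness on its own; the underlying data and the decision context still need review.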

7. Do model explainability tools work with deep learning models?

Yes, many tools support deep learning models, although explanation quality and performance may vary depending on model architecture and data type. SHAP includes explainers aimed at neural networks, LIME is model-agnostic and needs only a prediction function, and enterprise platforms can support different model categories. Deep learning explanations still often require careful interpretation.

8. Are explainability tools required for regulated industries?

Many regulated industries strongly benefit from explainability because AI decisions may need to be audited, justified, or reviewed. Finance, healthcare, insurance, hiring, and public sector use cases often require strong transparency and governance. Requirements vary by organization and jurisdiction.

9. What are common mistakes when implementing explainability?

Common mistakes include treating explainability as an afterthought, relying on one metric, ignoring bias analysis, failing to monitor drift, and producing explanations that business users cannot understand. Teams should build explainability into the model lifecycle from development through production.
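Drift monitoring, one of the items above, can start with a simple distribution comparison. The sketch below computes the Population Stability Index (PSI) between a reference sample and a production sample of one feature. The bin count, the smoothing constant `eps`, and the example data are assumptions; monitoring platforms typically offer several drift metrics beyond this one.

```python
import math


def population_stability_index(expected, actual, n_bins=10, eps=1e-6):
    """PSI between a reference sample and a new sample of one feature.

    Bins are equal-width over the range of the reference sample; values
    outside that range fall into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values at training time and in production.
reference = [i / 100 for i in range(100)]
shifted = [v + 0.3 for v in reference]

psi_same = population_stability_index(reference, reference)
psi_shifted = population_stability_index(reference, shifted)
print(round(psi_same, 3), round(psi_shifted, 3))
```

A commonly cited rule of thumb treats PSI below 0.1 as stable, 0.1 to 0.25 as a moderate shift, and above 0.25 as a significant shift, but thresholds should be tuned per feature and use case.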

10. How should organizations choose a Model Explainability Tool?

Organizations should first define whether they need technical explanations, production monitoring, governance reporting, bias analysis, or all of these capabilities. Then they should test tools with real models and datasets. The final decision should consider usability, integrations, security, scalability, support, and long-term AI governance needs.

Conclusion

Model Explainability Tools are now essential for organizations that want trustworthy, transparent, and governable AI systems. As machine learning and generative AI move deeper into business decision-making, teams need clear visibility into how models behave, why predictions happen, and where risks such as bias, drift, or performance degradation may appear. SHAP and LIME remain valuable open-source options for technical explainability, while enterprise platforms such as Fiddler AI, IBM Watson OpenScale, TruEra, Arize AI, WhyLabs, and Aporia provide broader monitoring and governance capabilities. Microsoft Responsible AI Toolbox and Evidently AI offer practical options for development-stage evaluation and model quality reporting.

The best tool depends on model complexity, production maturity, governance requirements, compliance needs, team expertise, and budget. Organizations should shortlist two or three options, test them with real models, validate explanation quality, review security controls, and choose the platform that best supports long-term responsible AI operations.