Introduction

Human-in-the-Loop Labeling Tools help organizations create high-quality datasets for artificial intelligence and machine learning projects by combining human expertise with automation. These platforms allow teams to annotate images, videos, audio, text, documents, and sensor data while ensuring quality through human review and validation workflows.

As AI adoption continues to grow across industries, businesses are realizing that accurate data labeling directly impacts model performance, reliability, and AI safety. Modern annotation platforms now include automation, active learning, workflow orchestration, collaboration tools, and integration capabilities that help teams scale large AI initiatives efficiently.

These tools are commonly used for:

- Computer vision projects

- NLP and chatbot training

- Medical imaging analysis

- Autonomous vehicle systems

- Generative AI and RLHF workflows

Buyers should evaluate these platforms based on:

- Annotation accuracy

- Automation capabilities

- Workflow management

- Quality assurance controls

- Collaboration features

- Scalability

- Security and governance

- Integration ecosystem

- Deployment flexibility

- Cost efficiency

Best for: AI engineers, data scientists, machine learning teams, healthcare AI providers, autonomous systems developers, and enterprises building custom AI solutions.

Not ideal for: Organizations with minimal AI usage, teams relying entirely on pre-trained AI APIs, or businesses without ongoing annotation requirements.

Key Trends in Human-in-the-Loop Labeling Tools

- AI-assisted labeling is reducing repetitive manual annotation work.

- RLHF workflows are becoming essential for generative AI training.

- Video and multimodal annotation demand continues to rise.

- Open-source labeling platforms are gaining enterprise attention.

- Workflow orchestration is becoming a core feature requirement.

- Security and governance controls are increasingly important.

- API-first architectures are simplifying MLOps integration.

- Synthetic data support is improving AI training efficiency.

- Real-time collaboration workflows are replacing isolated annotation environments.

- Healthcare and robotics industries are driving advanced annotation innovation.

How We Selected These Tools

The tools in this list were selected using a balanced evaluation framework focused on practical enterprise AI requirements and real-world usability.

Selection factors included:

- Market adoption and visibility

- Annotation feature completeness

- AI-assisted automation capabilities

- Workflow management quality

- Scalability for large datasets

- Integration flexibility

- Security and governance readiness

- Deployment flexibility

- Community adoption and support quality

- Suitability across multiple industries

The final list includes a mix of enterprise-grade, open-source, and developer-focused annotation platforms suitable for different organizational needs and technical maturity levels.

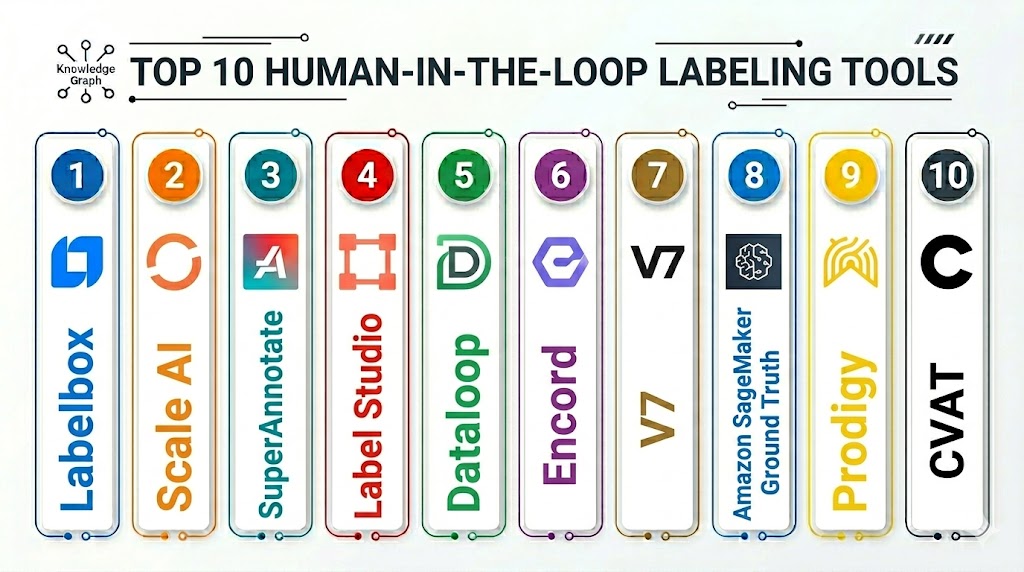

Top 10 Human-in-the-Loop Labeling Tools

1- Labelbox

Short Description:

Labelbox is a leading enterprise AI data labeling platform designed for organizations managing large-scale machine learning workflows. It supports image, video, text, and geospatial annotation while combining automation with human quality review. The platform is widely used by enterprise AI teams requiring scalable collaboration and governance capabilities.

Key Features

- AI-assisted annotation

- Multimodal labeling support

- Active learning workflows

- Consensus quality scoring

- Workflow orchestration

- Dataset management

- Enterprise collaboration tools
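Consensus quality scoring generally means giving the same item to several annotators and measuring how far they agree. The sketch below illustrates the idea under simple assumptions: majority voting over classification labels and a hypothetical 0.75 agreement threshold. It is not Labelbox's scoring method, which may use task-specific metrics, and `consensus` and `AGREEMENT_THRESHOLD` are illustrative names.

```python
from collections import Counter

# Hypothetical threshold: items with lower agreement are sent to a human reviewer.
AGREEMENT_THRESHOLD = 0.75


def consensus(labels: list[str]) -> tuple[str, float]:
    """Return the majority label and the share of annotators who chose it."""
    if not labels:
        raise ValueError("need at least one label")
    label, count = Counter(labels).most_common(1)[0]
    return label, count / len(labels)


# Hypothetical labels from four annotators per image.
votes = {
    "img_001": ["car", "car", "car", "truck"],
    "img_002": ["car", "truck", "bus", "truck"],
}

for item_id, labels in votes.items():
    label, score = consensus(labels)
    status = "accept" if score >= AGREEMENT_THRESHOLD else "send to review"
    print(f"{item_id}: {label} ({score:.2f}) -> {status}")
```

With these inputs, `img_001` reaches 0.75 agreement and is accepted, while `img_002` (0.50) goes back for review. Bounding-box or segmentation tasks would typically replace exact label matching with an overlap measure such as IoU.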

Pros

- Strong enterprise scalability

- Mature automation capabilities

- Advanced QA workflows

- Broad annotation support

Cons

- Premium pricing structure

- Learning curve for advanced workflows

- Complex enterprise setup

- Better suited for larger teams

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- SSO/SAML

- RBAC

- Audit logs

- Encryption

- SOC 2

- GDPR support

Integrations & Ecosystem

Labelbox integrates with cloud providers, MLOps systems, and AI pipelines through APIs and SDKs.

- AWS

- Azure

- Google Cloud

- Snowflake

- Python SDK

- REST APIs

Support & Community

Strong onboarding programs, enterprise support tiers, and detailed documentation for AI teams.

2- Scale AI

Short Description:

Scale AI provides enterprise-grade annotation infrastructure focused on autonomous systems, defense, generative AI, and large-scale machine learning operations. The platform combines human reviewers with automation to improve dataset quality and accelerate AI development cycles.

Key Features

- RLHF workflows

- LLM fine-tuning support

- Autonomous vehicle annotation

- Synthetic data capabilities

- Human QA pipelines

- API-first architecture

- Large-scale workforce management

Pros

- Excellent enterprise scalability

- Advanced AI automation

- Strong RLHF capabilities

- Efficient high-volume processing

Cons

- Expensive for smaller teams

- Enterprise-oriented onboarding

- Complex pricing structure

- Less suitable for lightweight projects

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- SSO/SAML

- RBAC

- Encryption

- Audit logs

- Some certifications not publicly stated

Integrations & Ecosystem

Scale AI supports enterprise AI infrastructure and modern ML workflows.

- AWS

- Azure

- Google Cloud

- APIs

- Data lake integrations

- MLOps pipelines

Support & Community

Enterprise-focused support model with implementation guidance and onboarding assistance.

3- SuperAnnotate

Short Description:

SuperAnnotate is a collaborative annotation platform built for enterprise computer vision and AI projects. It supports image, video, text, and document annotation workflows while emphasizing automation, collaboration, and quality management.

Key Features

- Smart annotation tools

- Video labeling support

- Team collaboration workflows

- QA management

- Dataset analytics

- Model-assisted labeling

- Workflow automation

Pros

- Excellent collaboration features

- User-friendly interface

- Strong video annotation support

- Flexible workflow design

Cons

- Enterprise pricing model

- Advanced features require training

- Limited open-source flexibility

- Complex deployment for large projects

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- SSO

- RBAC

- Encryption

- Audit logs

- GDPR support

Integrations & Ecosystem

SuperAnnotate integrates with cloud storage systems and AI development environments.

- AWS

- Azure

- Google Cloud

- APIs

- Python SDK

- ML workflow integrations

Support & Community

Growing enterprise adoption with strong onboarding and customer support services.

4- CVAT

Short Description:

CVAT (Computer Vision Annotation Tool) is a popular open-source annotation platform widely used for computer vision projects. Originally developed at Intel for image and video labeling, it offers the flexibility and customization that technical teams and developers need.

Key Features

- Open-source architecture

- Video object tracking

- Segmentation labeling

- Collaboration workflows

- API support

- Flexible customization

- Self-hosted deployment
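Video object tracking in annotation tools commonly builds on keyframes: an annotator labels an object on a few frames, the tool fills in the frames between them, and the annotator corrects any drift. A minimal sketch of linear keyframe interpolation for bounding boxes, assuming (x1, y1, x2, y2) pixel coordinates; it shows the general technique rather than CVAT's implementation, and `Box` and `interpolate` are hypothetical names.

```python
import bisect
from dataclasses import dataclass


@dataclass
class Box:
    """Axis-aligned bounding box in pixel coordinates."""
    x1: float
    y1: float
    x2: float
    y2: float


def interpolate(keyframes: dict[int, Box], frame: int) -> Box:
    """Linearly interpolate a box at `frame` from human-labeled keyframes."""
    if not keyframes:
        raise ValueError("need at least one keyframe")
    keys = sorted(keyframes)
    # Outside the labeled range, hold the nearest keyframe's box.
    if frame <= keys[0]:
        return keyframes[keys[0]]
    if frame >= keys[-1]:
        return keyframes[keys[-1]]
    # Find the bracketing keyframes: keys[i - 1] <= frame < keys[i].
    i = bisect.bisect_right(keys, frame)
    start, end = keys[i - 1], keys[i]
    t = (frame - start) / (end - start)
    a, b = keyframes[start], keyframes[end]
    return Box(
        a.x1 + t * (b.x1 - a.x1),
        a.y1 + t * (b.y1 - a.y1),
        a.x2 + t * (b.x2 - a.x2),
        a.y2 + t * (b.y2 - a.y2),
    )


# Hypothetical track: an object labeled on frames 0 and 10, moving right.
track = {0: Box(10, 20, 50, 60), 10: Box(30, 20, 70, 60)}
print(interpolate(track, 5))
```

Here the box at frame 5 lands halfway between the two keyframes, at (20, 20, 60, 60). Linear interpolation is cheap but drifts when objects move non-linearly, which is why human correction of intermediate frames remains part of the workflow.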

Pros

- Free open-source core

- Highly customizable

- Strong developer adoption

- Excellent for technical teams

Cons

- Requires infrastructure management

- UI less polished than enterprise tools

- Limited built-in automation

- Enterprise support varies

Platforms / Deployment

- Web

- Self-hosted

- Cloud

Security & Compliance

- RBAC

- Self-managed security controls

- Certifications not publicly stated

Integrations & Ecosystem

CVAT works well in developer-centric AI environments and custom pipelines.

- Docker

- Kubernetes

- REST APIs

- Python tools

- Custom ML integrations

Support & Community

Large open-source community with active development and strong GitHub engagement.

5- V7 Darwin

Short Description:

V7 Darwin is a data labeling platform focused on healthcare AI, computer vision, and enterprise annotation workflows. It combines AI-assisted automation with human validation to improve annotation speed and consistency.

Key Features

- Medical imaging support

- AI-assisted annotation

- Workflow automation

- Dataset versioning

- Video labeling

- Team collaboration

- QA workflows

Pros

- Excellent healthcare AI focus

- Strong automation capabilities

- Good user experience

- Advanced QA workflows

Cons

- Enterprise-focused pricing

- Specialized toward vision workflows

- Smaller ecosystem than larger competitors

- Limited open-source flexibility

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- SSO

- RBAC

- Audit logs

- Encryption

- GDPR support

Integrations & Ecosystem

V7 Darwin supports integrations for healthcare imaging and AI workflows.

- APIs

- Cloud storage integrations

- DICOM workflows

- ML pipelines

- Python SDK

Support & Community

Strong onboarding support with growing enterprise healthcare adoption.

6- Dataloop

Short Description:

Dataloop provides an end-to-end AI data operations platform that combines annotation, automation, orchestration, and workflow management. It is designed for organizations managing large AI training pipelines and complex data operations.

Key Features

- AI-assisted annotation

- Workflow automation

- Video labeling

- Data orchestration

- Human QA workflows

- Pipeline management

- MLOps connectivity

Pros

- Strong automation capabilities

- Good MLOps integrations

- Broad modality support

- Flexible deployment options

Cons

- Enterprise complexity

- Smaller ecosystem than major competitors

- Learning curve for beginners

- Advanced setup requirements

Platforms / Deployment

- Web

- Cloud

- Hybrid

Security & Compliance

- SSO

- Encryption

- RBAC

- Audit logging

- Some certifications not publicly stated

Integrations & Ecosystem

Dataloop focuses heavily on AI workflow orchestration and automation.

- AWS

- Azure

- Google Cloud

- APIs

- CI/CD integrations

- MLOps platforms

Support & Community

Professional onboarding and enterprise-focused support services are available.

7- Label Studio

Short Description:

Label Studio is an open-source annotation platform supporting image, text, audio, and multimodal datasets. It is widely used by developers, researchers, and AI teams requiring flexible deployment and customization capabilities.

Key Features

- Open-source platform

- Multimodal annotation

- Active learning support

- Custom labeling interfaces

- API integrations

- ML-assisted annotation

- Flexible deployment

Pros

- Highly customizable

- Strong developer flexibility

- Broad annotation support

- Cost-effective for technical teams

Cons

- Requires infrastructure management

- Enterprise support varies

- Advanced workflows need configuration

- UI customization can become complex

Platforms / Deployment

- Web

- Self-hosted

- Cloud

Security & Compliance

- RBAC

- Self-managed security controls

- Certifications not publicly stated

Integrations & Ecosystem

Label Studio integrates well into AI and data engineering workflows.

- REST APIs

- Docker

- Kubernetes

- Python SDK

- ML backends

- Cloud storage integrations

Support & Community

Large open-source community with active developer contributions and documentation.

8- Encord

Short Description:

Encord is a multimodal annotation platform designed for video, computer vision, and enterprise AI workflows. The platform combines data curation, quality management, and AI-assisted labeling into a collaborative environment.

Key Features

- Video annotation

- Active learning workflows

- Data curation tools

- QA management

- AI-assisted labeling

- Dataset versioning

- Multimodal support

Pros

- Excellent video annotation workflows

- Strong enterprise tooling

- Modern interface design

- Good data management capabilities

Cons

- Enterprise-oriented pricing

- Smaller ecosystem than open-source platforms

- Advanced setup requires onboarding

- Less suitable for lightweight projects

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- SSO

- RBAC

- Encryption

- Audit logs

- GDPR support

Integrations & Ecosystem

Encord integrates with modern AI infrastructure and cloud platforms.

- AWS

- Azure

- Google Cloud

- APIs

- Python SDK

- ML workflow integrations

Support & Community

Enterprise-focused support with increasing adoption across AI-driven industries.

9- Roboflow Annotate

Short Description:

Roboflow Annotate is a developer-friendly computer vision annotation platform designed for startups, researchers, and AI engineering teams. It simplifies image labeling, dataset management, and model optimization workflows.

Key Features

- Image annotation

- Dataset versioning

- Auto-labeling

- Collaboration workflows

- Model training integrations

- Data augmentation

- Computer vision optimization

Pros

- Easy to use

- Fast onboarding

- Excellent developer experience

- Good for startups and SMBs

Cons

- Primarily focused on vision workflows

- Limited enterprise governance

- Fewer advanced workflow controls

- Smaller compliance feature set

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- MFA

- RBAC

- Encryption

- Certifications not publicly stated

Integrations & Ecosystem

Roboflow integrates closely with modern computer vision frameworks.

- YOLO

- TensorFlow

- PyTorch

- APIs

- Python SDK

- Cloud storage platforms
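Exporting to a framework such as YOLO usually means converting pixel-coordinate boxes into the YOLO text label format: one line per object with the class index followed by the box center, width, and height, each normalized by the image size. A minimal sketch of that conversion, assuming boxes arrive as (x_min, y_min, x_max, y_max) in pixels; it is independent of Roboflow's own export code, and `to_yolo_line` is a hypothetical helper.

```python
def to_yolo_line(class_id: int, box: tuple[float, float, float, float],
                 img_w: int, img_h: int) -> str:
    """Convert a pixel box (x_min, y_min, x_max, y_max) to a YOLO label line.

    YOLO label files hold one object per line:
    class_id x_center y_center width height, normalized to the 0-1 range.
    """
    x_min, y_min, x_max, y_max = box
    if not (0 <= x_min < x_max <= img_w and 0 <= y_min < y_max <= img_h):
        raise ValueError(f"box {box} is outside a {img_w}x{img_h} image")
    x_c = (x_min + x_max) / 2 / img_w
    y_c = (y_min + y_max) / 2 / img_h
    w = (x_max - x_min) / img_w
    h = (y_max - y_min) / img_h
    return f"{class_id} {x_c:.6f} {y_c:.6f} {w:.6f} {h:.6f}"


# Hypothetical 640x480 image with one object of class 0.
print(to_yolo_line(0, (100, 50, 300, 250), img_w=640, img_h=480))
# 0 0.312500 0.312500 0.312500 0.416667
```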

Support & Community

Strong tutorials, educational content, and active developer community support.

10- Prodigy

Short Description:

Prodigy is a developer-focused annotation tool built primarily for NLP and active learning workflows. It emphasizes fast iteration cycles, model-assisted labeling, and lightweight deployment for AI researchers and smaller technical teams.
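Active learning, in its simplest form, means asking humans to label the examples the current model is least sure about instead of labeling data in arbitrary order. A minimal sketch of entropy-based uncertainty sampling, assuming the model returns a probability per class; this is not Prodigy's API, and `entropy` and `select_for_annotation` are hypothetical names.

```python
import math


def entropy(probs: list[float]) -> float:
    """Shannon entropy of a predicted class distribution; higher means less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_annotation(predictions: dict[str, list[float]], k: int) -> list[str]:
    """Return the IDs of the k examples the model is least certain about."""
    ranked = sorted(predictions, key=lambda ex: entropy(predictions[ex]), reverse=True)
    return ranked[:k]


# Hypothetical class probabilities for three unlabeled texts.
predictions = {
    "text_1": [0.98, 0.01, 0.01],  # confident
    "text_2": [0.40, 0.35, 0.25],  # nearly uniform, very uncertain
    "text_3": [0.70, 0.20, 0.10],
}
print(select_for_annotation(predictions, k=2))  # ['text_2', 'text_3']
```

Sending `text_2` and `text_3` to annotators first targets the examples most likely to change the model. Entropy is only one uncertainty measure; the margin between the top two class probabilities is a common alternative.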

Key Features

- NLP annotation

- Active learning workflows

- Python-first architecture

- Scriptable interfaces

- Model-assisted annotation

- Lightweight deployment

- Local workflow support

Pros

- Excellent for NLP projects

- Fast annotation iteration

- Strong developer flexibility

- Efficient active learning support

Cons

- Limited enterprise governance

- Limited collaboration features

- Narrow modality support

- Less suitable for large enterprises

Platforms / Deployment

- Web

- Self-hosted

Security & Compliance

- Self-managed security

- Certifications not publicly stated

Integrations & Ecosystem

Prodigy integrates well with NLP frameworks and developer ecosystems.

- spaCy

- Python

- REST APIs

- NLP frameworks

- Custom ML pipelines

Support & Community

Strong documentation and active developer-focused adoption.

Comparison Table

| Tool Name | Best For | Platform Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Labelbox | Enterprise AI teams | Web | Cloud | Multimodal enterprise workflows | N/A |
| Scale AI | Large AI operations | Web | Cloud | RLHF and automation | N/A |
| SuperAnnotate | Collaborative annotation | Web | Cloud | Advanced QA workflows | N/A |
| CVAT | Open-source vision projects | Web | Self-hosted / Cloud | Flexible customization | N/A |
| V7 Darwin | Healthcare AI | Web | Cloud | Medical imaging workflows | N/A |
| Dataloop | AI workflow orchestration | Web | Cloud / Hybrid | End-to-end pipelines | N/A |
| Label Studio | Flexible multimodal workflows | Web | Self-hosted / Cloud | Custom annotation interfaces | N/A |
| Encord | Video and multimodal AI | Web | Cloud | Advanced video workflows | N/A |
| Roboflow Annotate | Startup AI teams | Web | Cloud | Developer-friendly workflows | N/A |
| Prodigy | NLP annotation | Web | Self-hosted | Active learning support | N/A |

Evaluation & Scoring of Human-in-the-Loop Labeling Tools

| Tool Name | Core 25% | Ease 15% | Integrations 15% | Security 10% | Performance 10% | Support 10% | Value 15% | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Labelbox | 9.5 | 8.5 | 9.0 | 9.0 | 9.0 | 9.0 | 7.5 | 8.8 |
| Scale AI | 9.5 | 8.0 | 9.0 | 8.5 | 9.5 | 9.0 | 7.0 | 8.7 |
| SuperAnnotate | 9.0 | 8.5 | 8.5 | 8.5 | 8.5 | 8.5 | 7.5 | 8.5 |
| CVAT | 8.0 | 7.0 | 8.0 | 7.0 | 8.0 | 7.5 | 9.5 | 7.9 |
| V7 Darwin | 8.5 | 8.5 | 8.0 | 8.5 | 8.5 | 8.0 | 7.5 | 8.2 |
| Dataloop | 8.5 | 7.5 | 8.5 | 8.0 | 8.5 | 8.0 | 7.5 | 8.1 |
| Label Studio | 8.5 | 7.5 | 8.5 | 7.0 | 8.0 | 8.0 | 9.0 | 8.2 |
| Encord | 8.5 | 8.5 | 8.0 | 8.5 | 8.5 | 8.0 | 7.5 | 8.2 |
| Roboflow Annotate | 8.0 | 9.0 | 8.0 | 7.5 | 8.0 | 8.0 | 8.5 | 8.2 |
| Prodigy | 7.5 | 8.0 | 7.5 | 6.5 | 8.0 | 7.5 | 9.0 | 7.8 |

These scores are comparative and designed to help buyers evaluate strengths across different categories. Enterprise platforms generally perform better in governance, automation, and scalability, while open-source platforms often provide better value and customization flexibility. Organizations should prioritize platforms that align with their technical expertise, compliance requirements, workflow complexity, and operational goals.
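Each weighted total is the sum of every category score multiplied by its column weight. A minimal sketch of that calculation, using the weights from the table header and exact decimal arithmetic with half-up rounding to one decimal place (the rounding rule is an assumption); the category keys and `weighted_total` are illustrative names.

```python
from decimal import Decimal, ROUND_HALF_UP

# Column weights from the scoring table header; they sum to 1.00 (100%).
WEIGHTS = {
    "core": Decimal("0.25"),
    "ease": Decimal("0.15"),
    "integrations": Decimal("0.15"),
    "security": Decimal("0.10"),
    "performance": Decimal("0.10"),
    "support": Decimal("0.10"),
    "value": Decimal("0.15"),
}
assert sum(WEIGHTS.values()) == Decimal("1.00")


def weighted_total(scores: dict[str, str]) -> Decimal:
    """Sum each category score times its weight, rounded half-up to one decimal."""
    total = sum(WEIGHTS[name] * Decimal(scores[name]) for name in WEIGHTS)
    return total.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)


# Category scores for Labelbox, copied from the table as strings to keep them exact.
labelbox = {
    "core": "9.5", "ease": "8.5", "integrations": "9.0", "security": "9.0",
    "performance": "9.0", "support": "9.0", "value": "7.5",
}
print(weighted_total(labelbox))  # 8.8
```

Storing scores as strings and using `Decimal` avoids binary floating-point surprises when a total falls exactly on a rounding boundary, such as 7.75.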

Which Human-in-the-Loop Labeling Tool Is Right for You?

Solo / Freelancer

Independent developers and researchers usually benefit most from lightweight and flexible platforms. Prodigy and Label Studio are excellent choices for NLP workflows and experimental AI projects. Roboflow Annotate works especially well for smaller computer vision projects.

SMB

Small and mid-sized businesses often require a balance between usability, automation, and affordability. Roboflow Annotate, SuperAnnotate, and Label Studio provide strong collaboration and annotation workflows without excessive operational complexity.

Mid-Market

Mid-market organizations typically require stronger governance, workflow management, and scalability. Encord, Dataloop, and V7 Darwin offer balanced enterprise features while remaining flexible enough for growing AI teams.

Enterprise

Large organizations managing sensitive datasets and large-scale AI initiatives should prioritize platforms like Labelbox, Scale AI, and SuperAnnotate. These tools provide stronger governance, automation, collaboration, and scalability capabilities.

Budget vs Premium

Open-source tools such as CVAT and Label Studio deliver strong value for technically capable teams. Premium enterprise tools justify higher costs through automation, governance, support, and large-scale workflow orchestration.

Feature Depth vs Ease of Use

Highly customizable platforms usually require more technical expertise. CVAT and Label Studio prioritize flexibility, while Roboflow and V7 Darwin focus more on usability and fast onboarding experiences.

Integrations & Scalability

Organizations planning large AI deployments should prioritize API-first platforms with strong MLOps integrations. Labelbox, Scale AI, and Dataloop perform especially well in enterprise-scale environments.

Security & Compliance Needs

Healthcare, finance, government, and regulated industries should prioritize platforms with strong RBAC, audit logging, encryption, and SSO support. Self-hosted deployment may also be important for organizations handling sensitive datasets.

Frequently Asked Questions

1. What are Human-in-the-Loop Labeling Tools?

Human-in-the-loop labeling tools combine automation with human expertise to create accurate training datasets for AI models. Humans validate and improve machine-generated annotations to ensure better quality and reliability. These platforms are widely used in computer vision, NLP, robotics, and generative AI projects.

2. Why are these tools important for AI projects?

AI systems rely heavily on clean and accurate data. Poor-quality annotations can negatively impact model accuracy and reliability. Human-in-the-loop workflows improve data quality while helping organizations scale annotation operations more efficiently.

3. Which industries use these platforms the most?

Healthcare, autonomous vehicles, manufacturing, finance, logistics, security, robotics, and retail are among the largest adopters. Any organization building custom machine learning systems may require annotation workflows.

4. Are open-source annotation tools suitable for enterprises?

Yes, many enterprises use open-source platforms like CVAT and Label Studio successfully. However, organizations often need internal technical expertise for infrastructure management, customization, maintenance, and security configuration.

5. What is RLHF in AI labeling workflows?

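RLHF stands for Reinforcement Learning from Human Feedback. Humans compare or rank model outputs, and those preferences are typically used to train a reward model that then guides reinforcement-learning fine-tuning to improve response quality and behavior. It is commonly used for training generative AI and large language models.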
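The rankings collected in RLHF workflows are commonly split into pairwise comparisons before reward-model training. A minimal sketch of that step and of the Bradley-Terry style pairwise loss often used for reward models, assuming a best-to-worst ranking and scalar reward scores; the ranking and reward values are invented for illustration, and the function names are hypothetical.

```python
import math
from itertools import combinations


def ranking_to_pairs(ranked: list[str]) -> list[tuple[str, str]]:
    """Turn a best-to-worst ranking into (preferred, rejected) pairs."""
    return list(combinations(ranked, 2))


def pairwise_loss(r_preferred: float, r_rejected: float) -> float:
    """Bradley-Terry style loss -log(sigmoid(r_preferred - r_rejected)).

    Written as a numerically stable softplus so large reward gaps do not overflow.
    """
    d = r_preferred - r_rejected
    return max(-d, 0.0) + math.log1p(math.exp(-abs(d)))


# A hypothetical labeler ranked three responses to one prompt, best first.
ranking = ["response_b", "response_a", "response_c"]
# Hypothetical scalar scores from a reward model being trained.
rewards = {"response_a": 0.2, "response_b": 1.5, "response_c": -0.7}

pairs = ranking_to_pairs(ranking)
loss = sum(pairwise_loss(rewards[p], rewards[r]) for p, r in pairs) / len(pairs)
print(pairs)
print(f"mean pairwise loss: {loss:.3f}")
```

A ranking of n responses yields n(n-1)/2 pairs, so a three-way ranking produces three training comparisons. The loss shrinks as the reward model scores preferred responses further above rejected ones.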

6. How long does implementation usually take?

Smaller deployments can be operational within days, while enterprise deployments may require several weeks. Factors affecting implementation include integrations, workflow complexity, onboarding, and dataset migration requirements.

7. What are common mistakes when selecting a labeling platform?

Organizations often focus only on pricing while ignoring scalability, integrations, workflow automation, and governance capabilities. Poor planning around quality assurance and deployment complexity can also create long-term operational issues.

8. Can these platforms integrate with MLOps systems?

Most modern annotation platforms provide APIs, SDKs, and automation tools that support integration with MLOps workflows, CI/CD systems, cloud storage, and machine learning pipelines.

9. How do pricing models typically work?

Pricing models vary widely between vendors. Some charge per user seat, while others charge based on annotation volume, storage, or managed workforce services. Enterprise contracts often use custom pricing models.
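When comparing seat-based and volume-based quotes, a quick break-even calculation shows which model is cheaper at a given monthly volume: divide the total seat cost by the per-item price. A minimal sketch with hypothetical prices (not real vendor rates); it compares platform fees only and ignores annotator labor, storage, and managed-workforce charges, which can dominate total cost. The function names are illustrative.

```python
def seat_cost(seats: int, price_per_seat: float) -> float:
    """Monthly cost under per-seat pricing."""
    return seats * price_per_seat


def volume_cost(items: int, price_per_item: float) -> float:
    """Monthly cost under per-item (annotation volume) pricing."""
    return items * price_per_item


def break_even_items(seats: int, price_per_seat: float, price_per_item: float) -> float:
    """Monthly item volume at which both pricing models cost the same."""
    return seat_cost(seats, price_per_seat) / price_per_item


# Hypothetical quotes, not real vendor prices.
SEATS, PRICE_PER_SEAT, PRICE_PER_ITEM = 5, 100.0, 0.25

print(break_even_items(SEATS, PRICE_PER_SEAT, PRICE_PER_ITEM))  # 2000.0
for items in (1_000, 5_000):
    per_item_cheaper = volume_cost(items, PRICE_PER_ITEM) < seat_cost(SEATS, PRICE_PER_SEAT)
    cheaper = "per-item" if per_item_cheaper else "per-seat"
    print(f"{items} items/month: {cheaper} pricing is cheaper")
```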

10. Should organizations prioritize automation or human review?

The most effective approach combines both automation and human oversight. Automation improves speed and efficiency, while human reviewers maintain quality, consistency, and edge-case validation.
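A common way to combine the two is confidence-based routing: the model pre-labels every item, high-confidence predictions are accepted, and the rest go to a human review queue. A minimal sketch, assuming the model reports a probability for each prediction and using a hypothetical 0.9 threshold; `PreLabel` and `route` are illustrative names. In practice teams usually also audit a random sample of auto-accepted items, since a confident model can still be wrong.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model probability for its predicted label


def route(prelabels: list[PreLabel],
          threshold: float = 0.9) -> tuple[list[PreLabel], list[PreLabel]]:
    """Split model pre-labels into auto-accepted items and a human review queue."""
    accepted: list[PreLabel] = []
    review: list[PreLabel] = []
    for p in prelabels:
        if p.confidence >= threshold:
            accepted.append(p)
        else:
            review.append(p)
    return accepted, review


# Hypothetical pre-labels produced by a model before human review.
batch = [
    PreLabel("doc_1", "invoice", 0.97),
    PreLabel("doc_2", "receipt", 0.62),
    PreLabel("doc_3", "invoice", 0.91),
]
accepted, review = route(batch)
print("auto-accepted:", [p.item_id for p in accepted])
print("human review:", [p.item_id for p in review])
```

Raising the threshold sends more items to reviewers and trades speed for quality; lowering it does the opposite.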

Conclusion

Human-in-the-Loop Labeling Tools have become essential for organizations building reliable AI systems because model performance depends heavily on accurate and scalable training data. Modern annotation platforms now combine automation, workflow orchestration, collaboration, and governance features that help teams manage increasingly complex AI projects across computer vision, NLP, healthcare AI, robotics, and generative AI workflows.

Enterprise buyers should evaluate these platforms based not only on annotation capabilities but also on scalability, integration flexibility, security controls, deployment options, and operational efficiency. Open-source platforms such as CVAT and Label Studio remain excellent choices for technically skilled teams seeking customization and cost efficiency, while enterprise solutions like Labelbox, Scale AI, and SuperAnnotate provide stronger governance, automation, and large-scale collaboration capabilities.

The best platform ultimately depends on organizational size, technical expertise, compliance requirements, and workflow complexity. Before making a final decision, teams should shortlist two or three tools, run pilot projects, validate integration requirements, and carefully assess annotation quality workflows under real production conditions.