Introduction

Responsible AI tooling platforms help organizations build, deploy, monitor, and govern artificial intelligence systems in a safe, ethical, transparent, and compliant manner. These tools focus on reducing bias, improving explainability, supporting regulatory alignment, monitoring model behavior, and maintaining accountability throughout the AI lifecycle.

As AI adoption accelerates across enterprises, governments, healthcare systems, and financial institutions, and as generative AI applications spread, organizations are under increasing pressure to demonstrate that their AI systems are fair, secure, auditable, and trustworthy. Responsible AI tooling has evolved from a niche governance layer into a core operational requirement for many AI programs.

Common real-world use cases include:

- AI bias detection and mitigation

- LLM safety and hallucination monitoring

- AI governance and audit tracking

- Model explainability and transparency

- Regulatory compliance management

Key evaluation criteria for buyers include:

- Bias detection capabilities

- Explainability and transparency tools

- AI governance workflows

- LLM safety monitoring

- Compliance and audit support

- Integration with ML pipelines

- Scalability and automation

- Security and access controls

- Reporting and observability

- Ease of deployment and usability

Best for: Enterprise AI teams, financial institutions, healthcare organizations, government agencies, MLOps teams, AI governance leaders, compliance teams, and companies deploying high-risk AI systems.

Not ideal for: Organizations with minimal AI usage, teams relying only on simple prebuilt AI APIs, or businesses without governance or compliance requirements.

Key Trends in Responsible AI Tooling

- AI governance platforms are increasingly expected, and in some cases required, in regulated industries.

- LLM safety monitoring is rapidly expanding due to generative AI adoption.

- Bias detection and explainability features are becoming integrated into MLOps pipelines.

- AI observability is evolving into a continuous monitoring discipline.

- Policy-based AI governance workflows are replacing manual review processes.

- Enterprises are prioritizing audit trails and AI accountability reporting.

- Open-source responsible AI frameworks are gaining traction among developers.

- AI red teaming and adversarial testing are becoming operational requirements.

- Privacy-preserving AI workflows are increasing in importance.

- Integration between AI governance and cybersecurity tooling is growing rapidly.

How We Selected These Tools

The tools in this list were evaluated using a practical, enterprise-focused framework built around real-world responsible AI requirements.

Selection criteria included:

- Market adoption and industry visibility

- Bias detection and explainability depth

- Governance and compliance capabilities

- AI observability and monitoring strength

- Integration with MLOps ecosystems

- Scalability for enterprise AI programs

- Security and access control maturity

- Workflow automation capabilities

- Community adoption and ecosystem maturity

- Support for generative AI governance

The final list includes enterprise-grade platforms, developer-focused frameworks, and open-source tools suitable for different AI governance maturity levels.

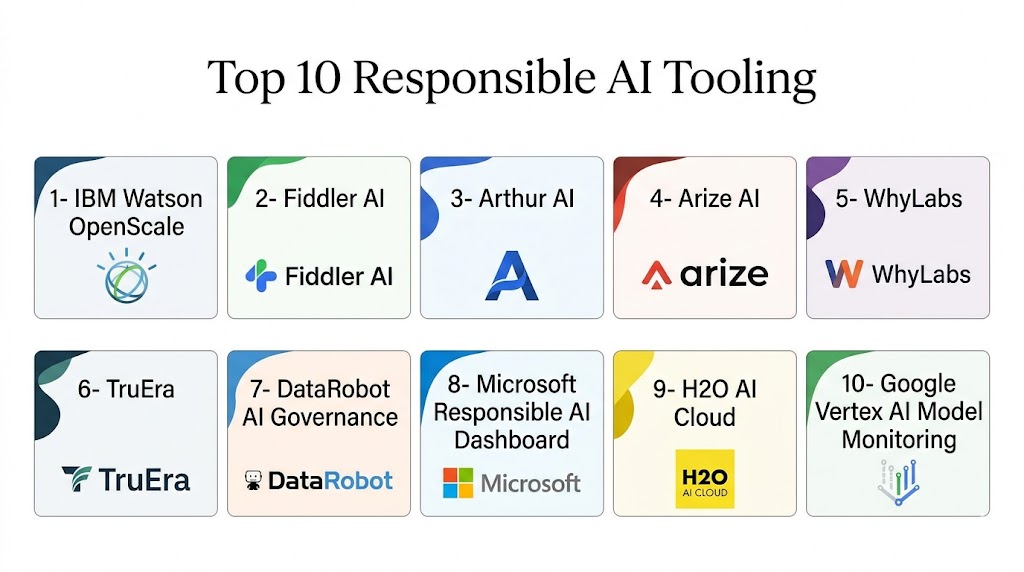

Top 10 Responsible AI Tooling Platforms

1- IBM watsonx.governance

Short Description:

IBM watsonx.governance is an enterprise AI governance platform designed to help organizations monitor, explain, and govern machine learning and generative AI systems. It supports lifecycle governance, risk management, compliance workflows, and AI accountability reporting for regulated industries and enterprise-scale AI deployments.

Key Features

- AI governance workflows

- Bias detection and mitigation

- Explainability dashboards

- LLM governance controls

- Risk and compliance tracking

- Audit trail management

- AI lifecycle monitoring

Pros

- Strong enterprise governance capabilities

- Mature compliance workflows

- Good explainability tooling

- Broad AI lifecycle coverage

Cons

- Enterprise-oriented complexity

- Premium pricing structure

- Requires governance planning

- Better suited for larger organizations

Platforms / Deployment

- Web

- Cloud

- Hybrid

Security & Compliance

- SSO/SAML

- RBAC

- Audit logs

- Encryption

- GDPR support

- Enterprise governance controls

Integrations & Ecosystem

IBM watsonx.governance integrates with enterprise AI ecosystems and IBM infrastructure while supporting external ML workflows.

- IBM watsonx

- APIs

- ML lifecycle integrations

- Enterprise governance systems

- Cloud platforms

Support & Community

Strong enterprise support, onboarding programs, and a global consulting ecosystem.

2- Microsoft Responsible AI Toolbox

Short Description:

Microsoft Responsible AI Toolbox is a suite of open-source and enterprise tools focused on AI fairness, interpretability, error analysis, and responsible machine learning operations. It is commonly used alongside Azure AI and enterprise ML workflows.

Key Features

- Fairness assessment

- Explainability tools

- Error analysis dashboards

- Model interpretability

- Responsible AI scorecards

- Data analysis workflows

- Integration with ML pipelines

Pros

- Strong Azure ecosystem integration

- Good explainability tooling

- Developer-friendly workflows

- Strong enterprise backing

Cons

- Best experience within the Microsoft ecosystem

- Advanced workflows require ML expertise

- Some tooling is fragmented across products

- Enterprise governance setup may be complex

Platforms / Deployment

- Web

- Cloud

- Self-hosted

Security & Compliance

- Azure security controls

- RBAC

- Encryption

- Audit capabilities

- Enterprise compliance support

Integrations & Ecosystem

The toolbox integrates strongly with Microsoft AI and ML infrastructure.

- Azure ML

- GitHub

- APIs

- Python SDKs

- MLOps workflows

Support & Community

Large enterprise ecosystem with strong documentation and active developer adoption.

3- Fiddler AI

Short Description:

Fiddler AI is an AI observability and responsible AI monitoring platform focused on explainability, fairness analysis, drift detection, and LLM monitoring. It helps enterprises monitor AI behavior continuously in production environments.

Key Features

- AI observability dashboards

- Bias monitoring

- Explainability analysis

- Drift detection

- LLM monitoring

- Root cause analysis

- Real-time alerts

Pros

- Strong observability capabilities

- Excellent monitoring dashboards

- Good enterprise scalability

- Strong LLM monitoring support

Cons

- Enterprise-focused pricing

- Requires mature ML operations

- Advanced setup complexity

- Smaller ecosystem than hyperscalers

Platforms / Deployment

- Web

- Cloud

- Hybrid

Security & Compliance

- SSO/SAML

- RBAC

- Audit logs

- Encryption

- Enterprise governance controls

Integrations & Ecosystem

Fiddler AI integrates with modern MLOps and AI infrastructure.

- Databricks

- Snowflake

- AWS

- Azure

- APIs

- ML monitoring pipelines

Support & Community

Strong enterprise onboarding and AI observability support services.

4- Arthur AI

Short Description:

Arthur AI provides AI monitoring, explainability, and governance tooling designed for enterprise machine learning systems. The platform helps organizations detect model drift, bias, and operational anomalies while maintaining AI transparency.

Key Features

- Model monitoring

- Explainability analysis

- Bias detection

- Drift monitoring

- LLM observability

- Alerting systems

- Governance reporting

Pros

- Strong monitoring capabilities

- Good enterprise integrations

- Effective drift detection

- Supports production AI operations

Cons

- Premium enterprise pricing

- Requires ML operational maturity

- Advanced configuration complexity

- Smaller community ecosystem

Platforms / Deployment

- Web

- Cloud

- Hybrid

Security & Compliance

- SSO/SAML

- RBAC

- Encryption

- Audit logging

- Enterprise governance controls

Integrations & Ecosystem

Arthur AI integrates with modern AI infrastructure and enterprise ML systems.

- AWS

- Azure

- Databricks

- APIs

- ML pipelines

- Observability systems

Support & Community

Enterprise-oriented support model with onboarding assistance and implementation guidance.

5- WhyLabs

Short Description:

WhyLabs is an AI observability and responsible AI platform designed for monitoring machine learning and LLM systems in production. It focuses on drift detection, anomaly monitoring, data quality, and governance visibility.

Key Features

- Data drift detection

- AI observability

- Data quality monitoring

- LLM monitoring

- Alerting workflows

- Governance visibility

- Performance analytics

Pros

- Strong observability tooling

- Good developer experience

- Efficient anomaly detection

- Modern monitoring architecture

Cons

- Requires monitoring expertise

- Enterprise features may increase cost

- Less governance depth than some competitors

- Advanced workflows require setup

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- RBAC

- Encryption

- Audit logging

- Enterprise governance support

Integrations & Ecosystem

WhyLabs integrates with AI pipelines and ML monitoring environments.

- Python SDK

- APIs

- AWS

- Databricks

- ML workflows

- Observability integrations

Support & Community

Growing AI observability ecosystem with strong technical documentation.

6- TruEra

Short Description:

TruEra focuses on AI quality management, explainability, and governance for enterprise machine learning systems. The platform helps organizations improve model trustworthiness, transparency, and operational reliability.

Key Features

- Explainability analysis

- AI quality monitoring

- Drift detection

- Bias analysis

- Governance workflows

- Root cause analysis

- Model performance monitoring

Pros

- Strong explainability tooling

- Enterprise governance support

- Good ML quality analysis

- Effective drift monitoring

Cons

- Enterprise-focused deployment

- Premium pricing

- Complex implementation for smaller teams

- Advanced governance setup required

Platforms / Deployment

- Web

- Cloud

- Hybrid

Security & Compliance

- SSO/SAML

- RBAC

- Encryption

- Audit logging

- Enterprise compliance controls

Integrations & Ecosystem

TruEra integrates with enterprise ML operations and observability workflows.

- Databricks

- Snowflake

- AWS

- Azure

- APIs

- ML lifecycle systems

Support & Community

Enterprise onboarding and strong customer support for regulated AI environments.

7- Fairlearn

Short Description:

Fairlearn is an open-source toolkit designed to assess and mitigate fairness issues in machine learning models. It is widely used by developers and researchers building responsible AI workflows within Python-based ML environments.

Key Features

- Bias assessment tools

- Fairness metrics

- Mitigation algorithms

- Visualization dashboards

- Open-source architecture

- Python integration

- Model evaluation workflows

Pros

- Free and open-source

- Strong fairness analysis capabilities

- Developer-friendly design

- Good research adoption

Cons

- Requires technical expertise

- Limited enterprise governance tooling

- No full enterprise workflow layer

- Requires integration effort

Platforms / Deployment

- Web

- Self-hosted

Security & Compliance

- Self-managed controls

- Certifications: not publicly stated

Integrations & Ecosystem

Fairlearn integrates well with Python-based machine learning workflows.

- Python

- Scikit-learn

- Jupyter

- ML pipelines

- Open-source ML ecosystems

Support & Community

Strong research and developer community with active open-source contributions.

8- Aequitas

Short Description:

Aequitas is an open-source bias and fairness audit toolkit designed for machine learning accountability and transparency analysis. It helps organizations evaluate fairness risks in predictive models and decision systems.

Key Features

- Bias auditing

- Fairness analysis

- Model transparency

- Audit reporting

- Open-source framework

- Risk assessment workflows

- Comparative fairness metrics

Pros

- Strong fairness auditing

- Open-source flexibility

- Good transparency tooling

- Useful for compliance analysis

Cons

- Requires technical implementation

- Limited enterprise workflow tooling

- Smaller ecosystem

- Requires integration with ML pipelines

Platforms / Deployment

- Web

- Self-hosted

Security & Compliance

- Self-managed controls

- Certifications: not publicly stated

Integrations & Ecosystem

Aequitas integrates with Python-based AI and analytics environments.

- Python

- Jupyter

- ML workflows

- Open-source ecosystems

- Data science platforms

Support & Community

Academic and research-driven community with active fairness research adoption.

9- Credo AI

Short Description:

Credo AI is an AI governance and compliance platform focused on responsible AI oversight, policy management, and regulatory alignment. It helps enterprises operationalize AI governance programs across multiple business units.

Key Features

- AI governance workflows

- Compliance management

- Policy enforcement

- AI risk tracking

- Audit documentation

- AI inventory management

- Governance dashboards

Pros

- Strong governance workflows

- Good compliance management

- Enterprise policy support

- Strong audit capabilities

Cons

- Less focused on deep ML monitoring

- Enterprise pricing structure

- Governance-heavy deployment

- Requires organizational adoption planning

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- SSO/SAML

- RBAC

- Audit logs

- Encryption

- Enterprise governance controls

Integrations & Ecosystem

Credo AI integrates with governance, compliance, and enterprise AI systems.

- APIs

- Enterprise governance tools

- ML lifecycle systems

- Compliance workflows

- Cloud platforms

Support & Community

Enterprise onboarding and governance-focused customer support services.

10- LangKit

Short Description:

LangKit is an open-source toolkit focused on LLM observability, hallucination analysis, and responsible generative AI workflows. It helps developers monitor and evaluate language model outputs for quality and safety issues.

Key Features

- LLM monitoring

- Hallucination analysis

- Prompt evaluation

- Toxicity detection

- Open-source architecture

- Generative AI observability

- Workflow analytics

Pros

- Strong LLM-focused tooling

- Open-source flexibility

- Good developer usability

- Lightweight deployment

Cons

- Smaller ecosystem

- Limited enterprise governance tooling

- Requires technical integration

- Focused mainly on generative AI workflows

Platforms / Deployment

- Web

- Self-hosted

Security & Compliance

- Self-managed controls

- Certifications: not publicly stated

Integrations & Ecosystem

LangKit integrates with generative AI and developer workflows.

- Python

- APIs

- LLM frameworks

- Observability tools

- AI pipelines

Support & Community

Growing open-source community with increasing adoption among generative AI developers.

Comparison Table

| Tool Name | Best For | Platforms Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| IBM watsonx.governance | Enterprise AI governance | Web | Cloud / Hybrid | AI lifecycle governance | N/A |
| Microsoft Responsible AI Toolbox | Azure AI governance | Web | Cloud / Self-hosted | Fairness and explainability | N/A |
| Fiddler AI | AI observability | Web | Cloud / Hybrid | Real-time AI monitoring | N/A |
| Arthur AI | Enterprise AI monitoring | Web | Cloud / Hybrid | Drift and explainability analysis | N/A |
| WhyLabs | AI observability | Web | Cloud | Data quality monitoring | N/A |
| TruEra | AI quality management | Web | Cloud / Hybrid | Explainability and governance | N/A |
| Fairlearn | Open-source fairness analysis | Web | Self-hosted | Bias mitigation tooling | N/A |
| Aequitas | Fairness auditing | Web | Self-hosted | AI bias auditing | N/A |
| Credo AI | AI governance compliance | Web | Cloud | Policy and compliance management | N/A |
| LangKit | LLM observability | Web | Self-hosted | Hallucination monitoring | N/A |

Evaluation & Scoring of Responsible AI Tooling Platforms

| Tool Name | Core 25% | Ease 15% | Integrations 15% | Security 10% | Performance 10% | Support 10% | Value 15% | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| IBM watsonx.governance | 9.5 | 8.0 | 9.0 | 9.5 | 9.0 | 9.0 | 7.5 | 8.8 |
| Microsoft Responsible AI Toolbox | 9.0 | 8.5 | 9.0 | 9.0 | 8.5 | 8.5 | 8.5 | 8.8 |
| Fiddler AI | 9.0 | 8.0 | 8.5 | 8.5 | 9.0 | 8.5 | 7.5 | 8.5 |
| Arthur AI | 8.5 | 8.0 | 8.5 | 8.5 | 8.5 | 8.0 | 7.5 | 8.2 |
| WhyLabs | 8.5 | 8.5 | 8.0 | 8.0 | 8.5 | 8.0 | 8.0 | 8.3 |
| TruEra | 8.5 | 7.5 | 8.5 | 8.5 | 8.5 | 8.0 | 7.5 | 8.2 |
| Fairlearn | 7.5 | 7.5 | 7.5 | 6.5 | 8.0 | 7.5 | 9.5 | 7.8 |
| Aequitas | 7.5 | 7.0 | 7.0 | 6.5 | 7.5 | 7.0 | 9.0 | 7.4 |
| Credo AI | 8.5 | 8.0 | 8.0 | 8.5 | 8.0 | 8.0 | 7.5 | 8.1 |
| LangKit | 7.5 | 8.0 | 7.5 | 6.5 | 8.0 | 7.0 | 9.0 | 7.7 |

These scores are comparative and intended to help organizations evaluate strengths across governance, explainability, fairness analysis, observability, and operational AI monitoring. Enterprise governance platforms generally perform better in compliance, automation, and policy management, while open-source tools often provide stronger flexibility and cost efficiency for technical teams.
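The weighted totals can be reproduced from the column weights. The sketch below assumes each total is the sum of a tool's category scores multiplied by the header weights, rounded half up to one decimal place. That rounding rule and the dictionary key names are assumptions made for illustration; the table itself does not state them. The Credo AI and LangKit rows are used as inputs.

```python
from decimal import Decimal, ROUND_HALF_UP

# Column weights copied from the scoring table header (they sum to 100%).
WEIGHTS = {
    "core": Decimal("0.25"),
    "ease": Decimal("0.15"),
    "integrations": Decimal("0.15"),
    "security": Decimal("0.10"),
    "performance": Decimal("0.10"),
    "support": Decimal("0.10"),
    "value": Decimal("0.15"),
}

def weighted_total(scores):
    """Weighted sum of category scores, rounded half up to one decimal (assumed rule)."""
    total = sum(Decimal(scores[name]) * weight for name, weight in WEIGHTS.items())
    return total.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)

# Category scores taken from the Credo AI and LangKit rows of the table.
credo_ai = {"core": "8.5", "ease": "8.0", "integrations": "8.0", "security": "8.5",
            "performance": "8.0", "support": "8.0", "value": "7.5"}
langkit = {"core": "7.5", "ease": "8.0", "integrations": "7.5", "security": "6.5",
           "performance": "8.0", "support": "7.0", "value": "9.0"}

print(weighted_total(credo_ai))  # 8.1
print(weighted_total(langkit))   # 7.7
```

Buyers who weight the categories differently can edit `WEIGHTS` and re-rank the list against their own priorities.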

Which Responsible AI Tooling Platform Is Right for You?

Solo / Freelancer

Independent developers and researchers often benefit most from lightweight and open-source platforms. Fairlearn, Aequitas, and LangKit are strong choices for fairness analysis, LLM monitoring, and experimental responsible AI workflows.

SMB

Small and mid-sized businesses usually require a balance between usability, governance visibility, and operational cost. WhyLabs and Microsoft Responsible AI Toolbox provide strong monitoring and explainability capabilities without excessive enterprise complexity.

Mid-Market

Mid-market organizations typically need scalable governance workflows, monitoring, and integration flexibility. Fiddler AI, Arthur AI, and TruEra provide balanced enterprise capabilities for growing AI operations.

Enterprise

Large enterprises managing regulated AI systems should prioritize platforms like IBM watsonx.governance, Credo AI, and Fiddler AI. These tools provide stronger governance, auditability, compliance workflows, and operational oversight.

Budget vs Premium

Open-source tools such as Fairlearn, Aequitas, and LangKit offer strong flexibility and lower operational cost for technically skilled teams. Premium enterprise platforms justify higher pricing through governance automation, support, scalability, and compliance readiness.

Feature Depth vs Ease of Use

Highly advanced governance and observability platforms may require specialized ML operations expertise. Simpler toolkits are easier to deploy but may lack enterprise governance depth and automation.

Integrations & Scalability

Organizations operating mature AI programs should prioritize API-first platforms with strong MLOps integrations and enterprise workflow connectivity. IBM watsonx.governance, Microsoft Responsible AI Toolbox, and Fiddler AI perform particularly well in large-scale AI environments.

Security & Compliance Needs

Healthcare, government, financial services, and regulated industries should prioritize tools with strong governance controls, audit logging, RBAC, and enterprise compliance capabilities.

Frequently Asked Questions

1. What is Responsible AI Tooling?

Responsible AI tooling refers to platforms and frameworks designed to help organizations build ethical, transparent, fair, and governable AI systems. These tools focus on explainability, fairness, monitoring, compliance, and AI risk management.

2. Why is Responsible AI becoming important?

Organizations deploying AI systems are increasingly expected to demonstrate fairness, accountability, transparency, and regulatory compliance. Responsible AI tooling helps reduce operational, ethical, and regulatory risks.

3. What is AI explainability?

AI explainability refers to techniques that help humans understand how AI systems make decisions. Explainability tools help organizations improve trust, debugging, and compliance visibility.
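One widely used model-agnostic technique is permutation importance: shuffle one input feature, re-score the model, and treat the drop in accuracy as that feature's importance. The sketch below is a minimal illustration under stated assumptions. The `toy_model` function, the feature names, and the synthetic data are all hypothetical, and the tools in this list may use different or more sophisticated methods.

```python
import random

def toy_model(row):
    """Hypothetical classifier: leans on income, slightly on age, ignores noise."""
    return 1 if 0.8 * row["income"] + 0.2 * row["age"] > 0.5 else 0

def accuracy(rows, labels):
    """Share of rows where the model's prediction matches the label."""
    return sum(toy_model(r) == y for r, y in zip(rows, labels)) / len(rows)

def permutation_importance(rows, labels, feature, rng):
    """Accuracy lost when one feature's values are shuffled across rows."""
    baseline = accuracy(rows, labels)
    shuffled = [r[feature] for r in rows]
    rng.shuffle(shuffled)
    permuted = [{**r, feature: v} for r, v in zip(rows, shuffled)]
    return baseline - accuracy(permuted, labels)

rng = random.Random(0)  # fixed seed so the run is repeatable
rows = [{"income": rng.random(), "age": rng.random(), "noise": rng.random()}
        for _ in range(500)]
labels = [toy_model(r) for r in rows]  # synthetic labels: the model is perfect by construction

importances = {f: permutation_importance(rows, labels, f, rng)
               for f in ("income", "age", "noise")}
for feature, drop in sorted(importances.items(), key=lambda kv: -kv[1]):
    print(f"{feature}: accuracy drop {drop:.3f}")
```

Because the labels are generated by the model itself, shuffling the unused `noise` feature costs no accuracy, while shuffling `income` costs the most.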

4. What is AI observability?

AI observability involves continuously monitoring AI systems in production for issues such as drift, hallucinations, bias, instability, and performance degradation.
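Drift is often quantified by comparing the distribution of a feature in production against a training-time baseline. The sketch below uses the Population Stability Index (PSI), one common choice among several. The bin count, the small `eps` floor for empty bins, and the sample data are assumptions for illustration. A commonly cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, though thresholds should be tuned per use case.

```python
import math

def psi(baseline, current, bins=10, eps=1e-6):
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid zero width when the baseline is constant

    def bin_fractions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values into edge bins
        return [max(c / len(values), eps) for c in counts]  # floor avoids log(0)

    expected, actual = bin_fractions(baseline), bin_fractions(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))

baseline = [i / 100 for i in range(100)]   # roughly uniform training-time sample
skewed = [math.sqrt(v) for v in baseline]  # production sample pushed toward high values

print(f"same data:   {psi(baseline, baseline):.3f}")
print(f"skewed data: {psi(baseline, skewed):.3f}")
```

Bin edges are derived from the baseline only, so production values outside the training range land in the edge bins instead of being dropped.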

5. Which industries need Responsible AI platforms the most?

Healthcare, finance, insurance, government, legal services, autonomous systems, and cybersecurity industries often have the strongest responsible AI requirements because of regulatory and operational risks.

6. Are open-source responsible AI tools enough for enterprises?

Open-source tools can work well for technical teams with strong engineering capabilities. However, enterprises often require additional governance, auditability, workflow automation, and compliance management features.

7. What is AI bias detection?

Bias detection tools analyze whether AI systems produce unfair or discriminatory outcomes across demographic groups or operational scenarios. These tools help organizations identify and mitigate fairness risks.
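A basic check compares how often each group receives the favorable outcome. The sketch below computes per-group selection rates and their largest gap, often called the demographic parity difference. It is plain Python so the arithmetic is visible. The group labels and predictions are made-up sample data, and libraries such as Fairlearn provide maintained implementations of this and related metrics.

```python
from collections import defaultdict

def selection_rates(predictions, groups):
    """Fraction of positive (1) predictions within each group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}

def demographic_parity_difference(rates):
    """Gap between the highest and lowest group selection rates (0 means parity)."""
    return max(rates.values()) - min(rates.values())

# Hypothetical loan-approval predictions for two groups of eight applicants each.
groups = ["A"] * 8 + ["B"] * 8
predictions = [1, 1, 1, 1, 1, 1, 0, 0,   # group A: 6 of 8 approved
               1, 1, 0, 0, 0, 0, 0, 0]   # group B: 2 of 8 approved

rates = selection_rates(predictions, groups)
print(rates)                                 # {'A': 0.75, 'B': 0.25}
print(demographic_parity_difference(rates))  # 0.5
```

A gap this large would normally prompt a closer look, but a single metric does not settle whether a system is fair; different fairness definitions can disagree on the same data.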

8. How do these platforms integrate with MLOps workflows?

Most responsible AI platforms provide APIs, SDKs, dashboards, and observability integrations that connect with machine learning pipelines, cloud platforms, and monitoring environments.
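One way such an integration can look is a gate in the deployment pipeline that compares reported metrics against policy thresholds and blocks promotion on any violation. The sketch below illustrates that pattern only. The `Rule` type, the metric names, and the threshold values are hypothetical and do not correspond to any specific vendor's API.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Rule:
    """One policy rule: a named metric must not exceed max_value."""
    metric: str
    max_value: float

# Hypothetical policy; real thresholds would come from a governance team.
POLICY = (
    Rule("demographic_parity_difference", 0.10),
    Rule("psi", 0.25),
)

def evaluate(metrics, policy=POLICY):
    """Return a list of human-readable violations (empty list means pass)."""
    violations = []
    for rule in policy:
        value = metrics.get(rule.metric)
        if value is None:
            # A missing metric is treated as a failure so gaps are not silently ignored.
            violations.append(f"{rule.metric}: missing")
        elif value > rule.max_value:
            violations.append(f"{rule.metric}: {value} > {rule.max_value}")
    return violations

report = {"demographic_parity_difference": 0.05, "psi": 0.4}  # made-up monitoring output
violations = evaluate(report)
print("BLOCK" if violations else "PROMOTE", violations)
```

Keeping the policy as data makes each threshold reviewable and versionable alongside the model code, which also leaves a simple audit record of what was enforced.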

9. Can Responsible AI tools monitor LLM hallucinations?

Yes, many modern platforms now include hallucination detection, toxicity analysis, prompt evaluation, and generative AI monitoring capabilities for large language models.
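Production tools use more sophisticated techniques than the following. As a deliberately crude illustration of checking an answer against its source material, the sketch below flags answer sentences whose words rarely appear in the supplied context. Lexical overlap is only a weak proxy for factual support, and the function names, threshold, and sample texts are assumptions for illustration.

```python
import re

def tokens(text):
    """Lowercased set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def support_score(sentence, context):
    """Share of the sentence's distinct words that also occur in the context."""
    words = tokens(sentence)
    if not words:
        return 1.0  # nothing to check
    return len(words & tokens(context)) / len(words)

def flag_unsupported(answer, context, threshold=0.5):
    """Return answer sentences whose support score falls below the threshold."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    return [s for s in sentences if support_score(s, context) < threshold]

# Made-up source passage and model answer.
context = "The policy was approved in March. It covers all credit scoring models."
answer = "The policy was approved in March. It also bans facial recognition entirely."

print(flag_unsupported(answer, context))
# ['It also bans facial recognition entirely.']
```

A check like this misses paraphrased but correct statements and can pass wrong statements that reuse the source's vocabulary, so it is best read as a starting point for understanding the problem, not a usable detector.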

10. What should buyers prioritize when selecting a Responsible AI platform?

Organizations should evaluate governance capabilities, explainability tooling, monitoring depth, integration flexibility, scalability, security controls, and regulatory alignment based on their AI maturity and industry requirements.

Conclusion

Responsible AI Tooling platforms are becoming essential components of modern AI operations because organizations increasingly need visibility, accountability, and governance across the AI lifecycle. As enterprises expand their use of generative AI, predictive analytics, autonomous systems, and large-scale machine learning, the risks associated with bias, hallucinations, compliance failures, and operational instability continue to grow.

Modern responsible AI platforms now combine observability, explainability, governance, fairness analysis, compliance management, and AI monitoring into centralized operational workflows that help organizations build more trustworthy AI systems. Open-source frameworks such as Fairlearn, Aequitas, and LangKit remain valuable for technically skilled teams seeking flexibility and experimentation, while enterprise platforms like IBM watsonx.governance, Fiddler AI, and Credo AI provide broader governance, automation, and operational oversight capabilities.

The best platform ultimately depends on organizational size, AI maturity, compliance requirements, and operational complexity. Before making a long-term investment, organizations should shortlist multiple tools, run pilot programs, validate integrations and governance workflows, and carefully assess operational monitoring requirements in real production environments.