Introduction

Data pipeline orchestration tools help organizations automate, schedule, monitor, and manage data workflows across analytics and cloud platforms. They coordinate tasks such as ETL jobs, data transformations, machine learning workflows, API integrations, and real-time processing pipelines. Instead of requiring teams to trigger tasks manually and track dependencies by hand, orchestration platforms provide centralized automation, visibility, and reliability for complex data operations.

As businesses adopt cloud-native infrastructure, AI-powered analytics, streaming architectures, and distributed applications, orchestration tools have become a critical layer in modern data engineering. Organizations now need scalable, secure, and observable workflow systems that can integrate across warehouses, lakes, APIs, SaaS applications, and machine learning environments.

Common real-world use cases include:

- Automating ETL and ELT workflows

- Managing machine learning pipelines

- Coordinating cloud-native analytics jobs

- Running event-driven processing systems

- Synchronizing data across platforms

Key evaluation criteria for buyers include:

- Workflow automation flexibility

- Scalability and reliability

- Monitoring and observability

- Integration ecosystem

- Security and governance controls

- Cloud-native compatibility

- Ease of deployment

- AI and automation support

- Developer experience

- Cost efficiency

Best for: Data engineers, analytics teams, DevOps teams, cloud architects, AI/ML operations teams, SaaS companies, and enterprises managing complex data ecosystems.

Not ideal for: Small teams with extremely basic automation requirements or organizations that only need lightweight task scheduling.

Key Trends in Data Pipeline Orchestration Tools

- AI-assisted workflow automation is reducing manual configuration work.

- Event-driven orchestration is increasingly used alongside traditional batch scheduling.

- Unified orchestration platforms are combining ETL, ML workflows, and observability.

- Kubernetes-native orchestration support is growing rapidly.

- Real-time data orchestration is becoming critical for AI and streaming workloads.

- Data observability and lineage tracking are now standard expectations.

- Low-code workflow builders are improving accessibility for business teams.

- Security-first orchestration with RBAC, SSO, and audit logging is increasingly important.

- Multi-cloud workflow portability is becoming a key enterprise requirement.

- Usage-based pricing models are increasingly offered in place of fixed infrastructure licensing.

How We Selected These Tools

The tools in this list were selected using a balanced evaluation process focused on enterprise readiness, developer experience, scalability, and ecosystem maturity.

Evaluation factors included:

- Market adoption and industry visibility

- Workflow orchestration capabilities

- Reliability and scalability

- Security and governance features

- Integration ecosystem depth

- Support for cloud-native environments

- Monitoring and observability features

- AI and machine learning compatibility

- Community and enterprise support quality

- Suitability across SMB and enterprise environments

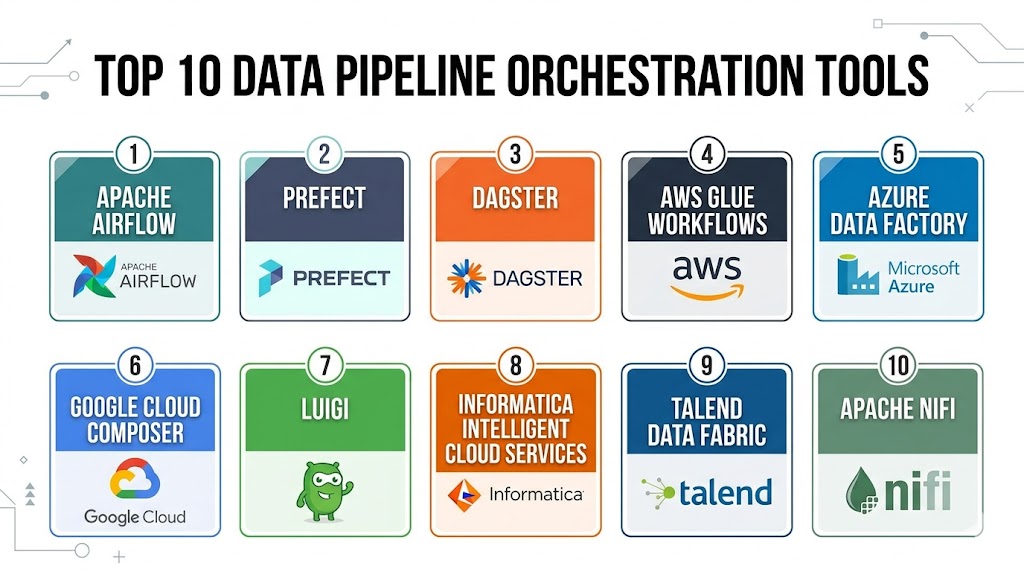

Top 10 Data Pipeline Orchestration Tools

1- Apache Airflow

Short Description:

Apache Airflow is one of the most popular open-source workflow orchestration platforms used for managing complex data pipelines. It allows teams to define workflows using Python-based DAGs and supports extensive scheduling, automation, and monitoring capabilities. Airflow is widely adopted by enterprises and cloud-native engineering teams because of its flexibility and massive integration ecosystem.
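
To make the DAG idea concrete, here is a minimal standard-library sketch of dependency-ordered execution. It does not use Airflow's API; the task names and the `run_pipeline` helper are hypothetical and only illustrate how a scheduler can derive a valid run order from declared dependencies.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
DEPENDENCIES = {
    "extract": set(),
    "clean": {"extract"},
    "enrich": {"extract"},
    "load": {"clean", "enrich"},
}


def run_pipeline(dependencies, actions):
    """Run each task only after all of its upstream tasks have finished."""
    executed = []
    for task in TopologicalSorter(dependencies).static_order():
        actions[task]()
        executed.append(task)
    return executed


# Each action just prints; a real task would call a database, API, or job.
actions = {name: (lambda n=name: print(f"running {n}")) for name in DEPENDENCIES}
order = run_pipeline(DEPENDENCIES, actions)
```

`clean` and `enrich` may run in either order because neither depends on the other, but `extract` always runs first and `load` always runs last.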

Key Features

- Python-based DAG orchestration

- Advanced workflow scheduling

- Dynamic pipeline generation

- Kubernetes integration

- Monitoring and retry management

- Extensive plugin ecosystem

- Workflow dependency handling

Pros

- Highly flexible architecture

- Large open-source community

- Strong integration ecosystem

- Suitable for enterprise-scale workflows

Cons

- Requires operational maintenance

- Steeper learning curve

- UI can feel technical

- Scaling may require infrastructure expertise

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

SSO, RBAC, encryption, and audit logging support. Additional compliance capabilities vary by deployment provider.

Integrations & Ecosystem

Apache Airflow supports integrations with major cloud providers, databases, analytics platforms, and orchestration systems.

- AWS

- Azure

- Google Cloud

- Snowflake

- Databricks

- Kubernetes

Support & Community

Very strong open-source community with broad enterprise adoption and extensive documentation.

2- Prefect

Short Description:

Prefect is a modern orchestration platform focused on developer experience, workflow reliability, and observability. It simplifies orchestration management while supporting cloud-native and event-driven architectures. Prefect is popular among modern data engineering teams looking for easier deployment and monitoring.

Key Features

- Python-native orchestration

- Event-driven workflows

- Real-time monitoring

- Hybrid execution models

- Dynamic task management

- API-first architecture

- Built-in observability tools

Pros

- Easier onboarding experience

- Modern workflow architecture

- Strong observability features

- Flexible deployment options

Cons

- Smaller ecosystem than Airflow

- Advanced features may require paid plans

- Community is still growing

- Custom integrations may require engineering effort

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

RBAC, encryption, and SSO/SAML support. Additional compliance certifications vary by plan.

Integrations & Ecosystem

Prefect integrates with cloud infrastructure, orchestration engines, and modern analytics stacks.

- AWS

- Azure

- Google Cloud

- Kubernetes

- Docker

- Snowflake

Support & Community

Growing community with strong developer-focused documentation and onboarding resources.

3- Dagster

Short Description:

Dagster is a modern data orchestration platform designed around data-aware workflows and software-defined assets. It provides strong lineage visibility, testing capabilities, and observability features for analytics and data engineering teams. Dagster is especially popular in modern cloud-native analytics environments.
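
The asset-centric view can be illustrated with a small standard-library sketch. This is not Dagster's API: the asset names and the `downstream_of` helper are hypothetical, and the example only shows how lineage information lets a tool work out which assets are affected when an upstream asset changes.

```python
from collections import deque

# Hypothetical asset graph: asset -> upstream assets it is derived from.
UPSTREAM = {
    "raw_orders": [],
    "clean_orders": ["raw_orders"],
    "daily_revenue": ["clean_orders"],
    "customer_ltv": ["clean_orders"],
    "exec_dashboard": ["daily_revenue", "customer_ltv"],
}


def downstream_of(asset, upstream=UPSTREAM):
    """Return every asset that directly or transitively depends on `asset`."""
    # Invert the upstream map so we can walk from parents to children.
    children = {name: [] for name in upstream}
    for name, parents in upstream.items():
        for parent in parents:
            children[parent].append(name)

    seen, pending = set(), deque([asset])
    while pending:
        for child in children[pending.popleft()]:
            if child not in seen:
                seen.add(child)
                pending.append(child)
    return seen


# Assets that would need a refresh if clean_orders were rebuilt.
stale = downstream_of("clean_orders")
```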

Key Features

- Asset-based orchestration

- Data lineage tracking

- Workflow testing framework

- Declarative pipeline management

- Observability dashboards

- Scheduling and automation

- Cloud-native architecture

Pros

- Excellent data observability

- Modern UI and developer experience

- Strong support for data quality workflows

- Good cloud-native compatibility

Cons

- Smaller ecosystem compared to Airflow

- Learning curve for asset-based concepts

- Enterprise plans can become expensive

- Less suitable for generic automation

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

RBAC, audit logging, and SSO/SAML support. Additional compliance capabilities vary.

Integrations & Ecosystem

Dagster integrates with modern analytics, cloud infrastructure, and transformation platforms.

- dbt

- Snowflake

- BigQuery

- Databricks

- AWS

- Kubernetes

Support & Community

Active and rapidly growing developer community with strong documentation.

4- Azure Data Factory

Short Description:

Azure Data Factory is Microsoft’s cloud-native data integration and orchestration platform built for enterprise-scale data movement and transformation. It provides visual workflow orchestration and extensive hybrid integration capabilities. The platform is widely used by organizations invested in the Microsoft ecosystem.

Key Features

- Visual workflow builder

- Hybrid data integration

- Managed ETL pipelines

- Enterprise scheduling

- Built-in connectors

- Data transformation workflows

- Native Azure integrations

Pros

- Strong Microsoft ecosystem support

- Extensive connector library

- Enterprise scalability

- Low-code workflow management

Cons

- Best optimized for Azure environments

- Complex pricing structure

- Less flexible for advanced custom workflows

- Debugging can be challenging

Platforms / Deployment

Cloud / Hybrid

Security & Compliance

SSO, RBAC, encryption, audit logging, and enterprise-grade governance controls.

Integrations & Ecosystem

Azure Data Factory integrates deeply with Microsoft services and enterprise systems.

- Azure Synapse

- Power BI

- SQL Server

- Oracle

- SAP

- Snowflake

Support & Community

Strong enterprise support backed by Microsoft documentation and a partner ecosystem.

5- AWS Step Functions

Short Description:

AWS Step Functions is a serverless orchestration platform designed for distributed applications and cloud-native automation workflows. It helps organizations coordinate services, APIs, and event-driven workloads without managing infrastructure. The platform is widely adopted in AWS-centric environments.

Key Features

- Serverless orchestration

- Event-driven workflows

- Visual workflow designer

- Error handling and retries

- State management

- Deep AWS integration

- Scalable execution engine
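
As a rough illustration of the retry and error-handling features listed above, the sketch below builds a small state machine definition in Amazon States Language as a Python dictionary and serializes it to JSON. The Lambda ARN, account number, and state names are hypothetical placeholders, and the retry values are arbitrary examples rather than recommendations.

```python
import json

# Hypothetical Lambda ARN; replace with a real function in your own account.
TRANSFORM_ARN = "arn:aws:lambda:us-east-1:123456789012:function:transform-orders"

state_machine = {
    "Comment": "Illustrative single-task pipeline with retries and a failure state",
    "StartAt": "Transform",
    "States": {
        "Transform": {
            "Type": "Task",
            "Resource": TRANSFORM_ARN,
            # Retry failed attempts with exponential backoff: 5s, 10s, 20s.
            "Retry": [
                {
                    "ErrorEquals": ["States.TaskFailed"],
                    "IntervalSeconds": 5,
                    "MaxAttempts": 3,
                    "BackoffRate": 2.0,
                }
            ],
            # If retries are exhausted, route to an explicit failure state.
            "Catch": [{"ErrorEquals": ["States.ALL"], "Next": "NotifyFailure"}],
            "Next": "Done",
        },
        "NotifyFailure": {
            "Type": "Fail",
            "Error": "TransformFailed",
            "Cause": "Retries exhausted",
        },
        "Done": {"Type": "Succeed"},
    },
}

# JSON text in the form a state machine definition is normally supplied.
definition = json.dumps(state_machine, indent=2)
```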

Pros

- Highly scalable architecture

- Minimal infrastructure management

- Strong AWS ecosystem integration

- Reliable workflow monitoring

Cons

- Vendor lock-in concerns

- Limited multi-cloud portability

- Complex workflows may become difficult to manage

- Best suited for AWS-focused environments

Platforms / Deployment

Cloud

Security & Compliance

IAM-based access control, encryption, logging, and enterprise-grade AWS security controls.

Integrations & Ecosystem

AWS Step Functions integrates natively across AWS infrastructure and serverless services.

- Lambda

- S3

- Glue

- Redshift

- DynamoDB

- EventBridge

Support & Community

Strong enterprise documentation and broad adoption within AWS ecosystems.

6- Google Cloud Composer

Short Description:

Google Cloud Composer is Google Cloud’s managed Apache Airflow service designed for scalable workflow orchestration in cloud-native environments. It simplifies Airflow deployment and infrastructure management while supporting enterprise analytics and AI workloads.

Key Features

- Managed Apache Airflow

- Auto-scaling infrastructure

- Monitoring and logging

- Workflow scheduling

- Google Cloud integrations

- Kubernetes support

- Security management

Pros

- Reduces Airflow maintenance overhead

- Strong Google Cloud integration

- Scalable architecture

- Simplified deployment experience

Cons

- Primarily optimized for Google Cloud

- Higher operational costs at scale

- Requires familiarity with GCP

- Less flexible than self-managed Airflow

Platforms / Deployment

Cloud

Security & Compliance

IAM integration, encryption, RBAC, and enterprise cloud security controls.

Integrations & Ecosystem

Cloud Composer integrates with analytics, AI, and infrastructure services across Google Cloud.

- BigQuery

- Dataflow

- Vertex AI

- Pub/Sub

- Kubernetes Engine

- Cloud Storage

Support & Community

Supported by Google Cloud enterprise services and the Apache Airflow ecosystem.

7- Luigi

Short Description:

Luigi is a lightweight open-source workflow orchestration tool designed for dependency management and batch processing pipelines. It is commonly used by Python-focused engineering teams that require simple orchestration capabilities without heavy infrastructure complexity.
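
The dependency-management idea behind this style of batch tool can be sketched with the standard library alone. The example below is not Luigi's API; the `FileTask` class and `build` function are hypothetical stand-ins showing how a task can be treated as complete once its output exists, so that reruns skip work that is already done.

```python
import tempfile
from pathlib import Path


class FileTask:
    """Hypothetical task that counts as complete once its output file exists."""

    def __init__(self, name, output, requires=()):
        self.name = name
        self.output = Path(output)
        self.requires = tuple(requires)

    def complete(self):
        return self.output.exists()

    def run(self):
        self.output.write_text(f"{self.name} done\n")


def build(task, log):
    """Run upstream tasks first, skipping any task whose output already exists."""
    if task.complete():
        return
    for upstream in task.requires:
        build(upstream, log)
    task.run()
    log.append(task.name)


workdir = Path(tempfile.mkdtemp())
extract = FileTask("extract", workdir / "extract.txt")
load = FileTask("load", workdir / "load.txt", requires=[extract])

first_run, second_run = [], []
build(load, first_run)   # runs extract, then load
build(load, second_run)  # nothing to do: both outputs already exist
```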

Key Features

- Dependency management

- Batch workflow orchestration

- Python-based workflows

- Task scheduling

- Workflow visualization

- Failure handling

- Lightweight deployment

Pros

- Simple architecture

- Easy for Python developers

- Open-source flexibility

- Good for lightweight workflows

Cons

- Limited observability features

- Smaller ecosystem

- Basic UI experience

- Less cloud-native support

Platforms / Deployment

Self-hosted / Hybrid

Security & Compliance

Varies / Not publicly stated

Integrations & Ecosystem

Luigi supports integrations through Python libraries and custom development.

- Hadoop

- Spark

- Databases

- Batch systems

- Python frameworks

Support & Community

Moderate open-source community with stable long-term adoption.

8- Control-M

Short Description:

Control-M is an enterprise workload automation platform designed for mission-critical business process orchestration. It supports hybrid infrastructure automation, SLA management, and enterprise governance requirements across large-scale IT environments.

Key Features

- Enterprise workload automation

- SLA management

- Cross-platform orchestration

- Workflow monitoring

- Managed file transfer support

- Batch processing automation

- Hybrid infrastructure support

Pros

- Enterprise-grade reliability

- Strong governance capabilities

- Broad infrastructure compatibility

- Mature automation features

Cons

- Expensive licensing model

- Complex implementation

- Requires specialized administrators

- Less developer-friendly

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

SSO, RBAC, audit logging, and enterprise governance support.

Integrations & Ecosystem

Control-M integrates with enterprise applications, infrastructure platforms, and legacy systems.

- SAP

- Oracle

- AWS

- Azure

- Databases

- Mainframes

Support & Community

Strong enterprise support with professional services and a global customer base.

9- Kestra

Short Description:

Kestra is a modern orchestration platform focused on declarative workflows, scalability, and event-driven automation. It provides strong observability and developer experience features for cloud-native engineering teams managing modern automation pipelines.
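
A declarative workflow separates what should run from how it runs. The sketch below illustrates that split in plain Python; the workflow schema, task types, and `run_workflow` helper are hypothetical and do not reflect Kestra's actual workflow format.

```python
# Hypothetical declarative workflow: plain data, no control flow.
WORKFLOW = {
    "id": "daily_orders",
    "tasks": [
        {"id": "fetch", "type": "emit", "value": [3, 1, 2]},
        {"id": "sort", "type": "sort", "input": "fetch"},
        {"id": "total", "type": "sum", "input": "sort"},
    ],
}

# Hypothetical task types mapped to the functions that implement them.
HANDLERS = {
    "emit": lambda task, outputs: task["value"],
    "sort": lambda task, outputs: sorted(outputs[task["input"]]),
    "sum": lambda task, outputs: sum(outputs[task["input"]]),
}


def run_workflow(workflow, handlers=HANDLERS):
    """Execute tasks in declared order, storing each output under its task id."""
    outputs = {}
    for task in workflow["tasks"]:
        outputs[task["id"]] = handlers[task["type"]](task, outputs)
    return outputs


results = run_workflow(WORKFLOW)
```

Because the workflow is only data, it can be stored in version control, validated before execution, or generated by another system without changing the engine that runs it.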

Key Features

- Event-driven orchestration

- Declarative workflow definitions

- Real-time monitoring

- Scalable execution engine

- Built-in observability

- Multi-language support

- API-driven automation

Pros

- Modern orchestration architecture

- Flexible workflow automation

- Strong scalability capabilities

- Good developer experience

Cons

- Smaller ecosystem

- Newer, less mature platform

- Limited enterprise references

- Fewer third-party integrations

Platforms / Deployment

Cloud / Self-hosted / Hybrid

Security & Compliance

RBAC, audit logs, and encryption support. Additional compliance details vary.

Integrations & Ecosystem

Kestra integrates with cloud infrastructure, orchestration engines, and streaming systems.

- Kubernetes

- Docker

- AWS

- PostgreSQL

- Kafka

- Google Cloud

Support & Community

Growing community with active development and improving documentation quality.

10- Apache NiFi

Short Description:

Apache NiFi is a flow-based orchestration platform focused on real-time data movement and streaming ingestion. Its drag-and-drop interface and real-time processing capabilities make it popular for data-intensive environments and streaming workflows.

Key Features

- Visual workflow orchestration

- Real-time streaming support

- Drag-and-drop pipeline builder

- Data provenance tracking

- Fine-grained flow control

- Back-pressure management

- Extensive processor library
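
Back-pressure, listed above, means a fast producer is slowed or refused when a downstream buffer fills up. The following standard-library sketch shows the general idea with a bounded queue; it is not NiFi code, and the `offer` helper and the threshold of three items are hypothetical.

```python
import queue


def offer(buffer, item):
    """Try to enqueue; return False when the buffer is full (back-pressure)."""
    try:
        buffer.put_nowait(item)
        return True
    except queue.Full:
        return False


# Hypothetical connection between two processing steps, capped at 3 items.
connection = queue.Queue(maxsize=3)

# The producer outpaces the consumer: the last two offers are refused.
accepted = [offer(connection, n) for n in range(5)]

# Once the consumer drains one item, the producer can enqueue again.
connection.get_nowait()
accepted_after_drain = offer(connection, 99)
```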

Pros

- Excellent streaming support

- User-friendly visual workflows

- Strong data provenance tracking

- Flexible integration ecosystem

Cons

- Resource-intensive deployments

- Scaling can become complex

- UI may feel crowded

- Less developer-centric than code-first tools

Platforms / Deployment

Self-hosted / Hybrid

Security & Compliance

RBAC, encryption, secure data transfer, and audit logging support.

Integrations & Ecosystem

Apache NiFi integrates with streaming systems, databases, APIs, and enterprise platforms.

- Kafka

- Hadoop

- AWS

- Azure

- MQTT

- Databases

Support & Community

Strong Apache open-source community with broad enterprise adoption.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Apache Airflow | Enterprise orchestration | Linux / Cloud | Hybrid | Python DAG flexibility | N/A |
| Prefect | Modern cloud-native orchestration | Web / Cloud | Hybrid | Developer experience | N/A |
| Dagster | Data-aware orchestration | Web / Cloud | Hybrid | Asset-based workflows | N/A |
| Azure Data Factory | Microsoft enterprises | Web / Cloud | Cloud / Hybrid | Visual ETL pipelines | N/A |
| AWS Step Functions | Serverless workflows | Cloud | Cloud | Event-driven orchestration | N/A |
| Google Cloud Composer | Managed Airflow | Cloud | Cloud | Managed orchestration | N/A |
| Luigi | Lightweight workflows | Linux | Self-hosted | Simplicity | N/A |
| Control-M | Enterprise automation | Web / Windows / Linux | Hybrid | SLA management | N/A |
| Kestra | Modern event-driven workflows | Web / Cloud | Hybrid | Declarative workflows | N/A |
| Apache NiFi | Streaming data flows | Web / Linux | Hybrid | Real-time orchestration | N/A |

Evaluation & Scoring of Data Pipeline Orchestration Tools

| Tool Name | Core | Ease | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Apache Airflow | 9 | 7 | 10 | 8 | 9 | 9 | 8 | 8.6 |
| Prefect | 8 | 9 | 8 | 8 | 8 | 8 | 8 | 8.2 |
| Dagster | 9 | 8 | 8 | 8 | 8 | 8 | 7 | 8.1 |
| Azure Data Factory | 8 | 8 | 9 | 9 | 8 | 9 | 7 | 8.2 |
| AWS Step Functions | 8 | 8 | 9 | 9 | 9 | 9 | 7 | 8.4 |
| Google Cloud Composer | 8 | 8 | 8 | 9 | 8 | 8 | 7 | 8.0 |
| Luigi | 6 | 8 | 6 | 5 | 7 | 6 | 9 | 6.9 |
| Control-M | 9 | 6 | 8 | 9 | 9 | 9 | 5 | 7.9 |
| Kestra | 8 | 8 | 7 | 7 | 8 | 7 | 8 | 7.8 |
| Apache NiFi | 8 | 7 | 8 | 8 | 8 | 8 | 8 | 7.9 |

These scores are comparative and designed to help organizations evaluate relative strengths across enterprise requirements. Higher scores generally indicate stronger balance across usability, integrations, scalability, governance, and operational maturity. Teams should prioritize the categories most aligned with their technical and business goals.
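
For teams that want to re-weight the categories to match their own priorities, a weighted total can be computed as in the sketch below. The weights shown are assumptions chosen for illustration; this article does not publish its weighting, so totals from this sketch need not match every row of the table.

```python
# Assumed weights in percent; the article does not publish its weighting.
ASSUMED_WEIGHTS = {
    "Core": 25,
    "Ease": 15,
    "Integrations": 15,
    "Security": 10,
    "Performance": 10,
    "Support": 10,
    "Value": 15,
}


def weighted_total(scores, weights=ASSUMED_WEIGHTS):
    """Weighted average of 0-10 category scores, rounded to one decimal."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    return round(sum(scores[name] * w for name, w in weights.items()) / 100, 1)


# Category scores for Apache Airflow, copied from the table above.
airflow = {
    "Core": 9,
    "Ease": 7,
    "Integrations": 10,
    "Security": 8,
    "Performance": 9,
    "Support": 9,
    "Value": 8,
}
airflow_total = weighted_total(airflow)
```

Adjusting `ASSUMED_WEIGHTS`, for example raising `Security` for a regulated environment, changes the ranking without changing any individual category score.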

Which Data Pipeline Orchestration Tool Is Right for You?

Solo / Freelancer

Luigi and Prefect are strong choices for smaller workflows and lightweight orchestration requirements. They provide easier setup experiences and lower operational overhead compared to enterprise-heavy orchestration systems.

SMB

Prefect, Dagster, and Azure Data Factory work well for SMB environments because they balance scalability, usability, and cloud-native automation capabilities without requiring excessive infrastructure management.

Mid-Market

Apache Airflow, Dagster, and Google Cloud Composer provide strong orchestration capabilities for growing organizations managing analytics, ETL, and AI workflows across multiple teams.

Enterprise

Control-M, Apache Airflow, Azure Data Factory, and AWS Step Functions are strong enterprise options due to governance capabilities, scalability, compliance features, and hybrid infrastructure support.

Budget vs Premium

Open-source platforms such as Airflow, Dagster, Luigi, and Apache NiFi reduce licensing costs but may increase operational workload. Premium enterprise solutions provide stronger support and governance capabilities but increase total ownership costs.

Feature Depth vs Ease of Use

Airflow and Control-M provide extensive orchestration flexibility for complex environments, while Prefect and Azure Data Factory focus more on usability and simplified workflow management.

Integrations & Scalability

Organizations managing hybrid or multi-cloud environments should prioritize orchestration tools with strong integration ecosystems and scalable workflow execution capabilities.

Security & Compliance Needs

Highly regulated industries should prioritize orchestration platforms with strong RBAC, encryption, audit logging, SSO, and governance support.

Frequently Asked Questions

1. What is a data pipeline orchestration tool?

A data pipeline orchestration tool automates workflow scheduling, dependency management, and monitoring for data processing systems. These platforms coordinate ETL jobs, machine learning workflows, analytics pipelines, and automation tasks across distributed environments.

2. How is orchestration different from ETL?

ETL focuses specifically on moving and transforming data, while orchestration manages the execution and coordination of workflows. Orchestration platforms can control ETL, machine learning, APIs, infrastructure automation, and analytics processes together.

3. Are open-source orchestration tools good for enterprises?

Yes, many enterprises successfully use open-source orchestration platforms such as Apache Airflow and Dagster. However, organizations should evaluate operational complexity, scaling requirements, governance needs, and long-term support considerations.

4. Which orchestration platform is best for cloud-native environments?

Prefect, AWS Step Functions, Google Cloud Composer, and Dagster are strong options for cloud-native architectures. The ideal choice depends on infrastructure preferences, workflow complexity, and cloud provider strategy.

5. What security features should buyers evaluate?

Important security features include SSO, RBAC, MFA, encryption, audit logging, secrets management, and governance controls. Regulated organizations should also review deployment flexibility and compliance support.

6. Are orchestration tools expensive?

Costs vary based on deployment model, workflow scale, and infrastructure requirements. Managed cloud services reduce maintenance work but may increase operational expenses, while open-source platforms reduce licensing costs but require engineering resources.

7. Can orchestration tools support AI and machine learning pipelines?

Yes, modern orchestration tools increasingly support machine learning workflows, feature engineering, model training, observability, and AI infrastructure automation. Many integrate directly with cloud AI services and ML platforms.

8. How difficult is migration between orchestration tools?

Migration complexity depends on workflow customization, integrations, and dependency structures. Organizations with highly customized workflows may require significant engineering effort during platform migration.

9. What are the most common implementation mistakes?

Common mistakes include poor workflow design, weak observability planning, ignoring governance requirements, underestimating scaling complexity, and selecting tools based only on popularity rather than actual workload fit.

10. How should companies evaluate orchestration platforms before purchase?

Organizations should run pilot projects using real workloads, validate integration compatibility, evaluate governance controls, test scalability, and assess operational overhead before standardizing on a platform.

Conclusion

Data pipeline orchestration tools have become essential infrastructure for modern analytics, AI, cloud-native automation, and enterprise data operations. Organizations now require orchestration platforms that can deliver scalability, observability, governance, security, and integration flexibility across increasingly complex environments.

Apache Airflow remains one of the strongest choices for highly customizable enterprise orchestration, while modern platforms like Prefect and Dagster focus on developer experience and workflow visibility. Cloud-native services such as AWS Step Functions and Azure Data Factory simplify orchestration management for organizations invested in hyperscaler ecosystems.

The right platform ultimately depends on workflow complexity, infrastructure strategy, operational maturity, compliance requirements, and budget priorities. Instead of selecting a universal winner, organizations should shortlist two or three platforms, run pilot deployments, validate integrations and governance capabilities, and evaluate long-term operational fit before making a final decision.