Introduction

Edge AI inference platforms are software and hardware solutions that run artificial intelligence models directly on edge devices instead of relying entirely on centralized cloud infrastructure. They let organizations perform real-time inference on devices such as industrial gateways, smart cameras, autonomous systems, IoT sensors, robots, retail kiosks, and edge servers. Because AI workloads are processed close to where data is generated, these platforms reduce latency, lower bandwidth consumption, improve reliability, and strengthen privacy and compliance controls.

Real-world use cases include computer vision in manufacturing plants, predictive maintenance in industrial equipment, AI-powered surveillance systems, autonomous vehicles and drones, smart retail analytics, healthcare diagnostics at the edge, and AI-enabled telecom infrastructure. Modern edge AI inference platforms increasingly offer GPU acceleration, containerized deployment, model optimization, orchestration, and real-time telemetry analytics for scalable enterprise AI operations.

Evaluation Criteria for Buyers:

- AI model deployment and inference performance

- Hardware acceleration and GPU/TPU support

- Scalability across distributed edge environments

- AI model optimization and orchestration

- Security and device management capabilities

- Integration with cloud, IoT, and observability ecosystems

- Real-time telemetry and monitoring visibility

- Container and Kubernetes support

- Ease of deployment and developer workflows

- Vendor support and ecosystem maturity

Best for: AI engineering teams, industrial organizations, telecom operators, autonomous systems developers, retail analytics providers, healthcare technology companies, and enterprises deploying real-time AI workloads at the edge.

Not ideal for: Small organizations without AI workloads, businesses relying entirely on centralized cloud AI processing, or teams lacking AI model management expertise.

Key Trends in Edge AI Inference Platforms

- AI model optimization for low-power edge hardware

- GPU, TPU, and NPU acceleration for real-time inference

- Containerized AI deployment with Kubernetes support

- AI orchestration across distributed edge environments

- Real-time AI telemetry and observability

- Privacy-focused on-device AI processing

- Integration with IoT and industrial automation ecosystems

- Federated learning and distributed AI workflows

- AI inference acceleration for computer vision workloads

- Hybrid cloud-edge AI deployment architectures

How We Selected These Tools (Methodology)

- Evaluated enterprise adoption and AI ecosystem maturity

- Reviewed AI inference performance and optimization capabilities

- Assessed hardware acceleration and edge deployment support

- Analyzed scalability across distributed AI environments

- Evaluated integration with cloud, IoT, and Kubernetes ecosystems

- Reviewed security, telemetry, and observability capabilities

- Compared ease of deployment and developer experience

- Assessed support for real-time and low-latency inference

- Considered vendor support quality and community ecosystems

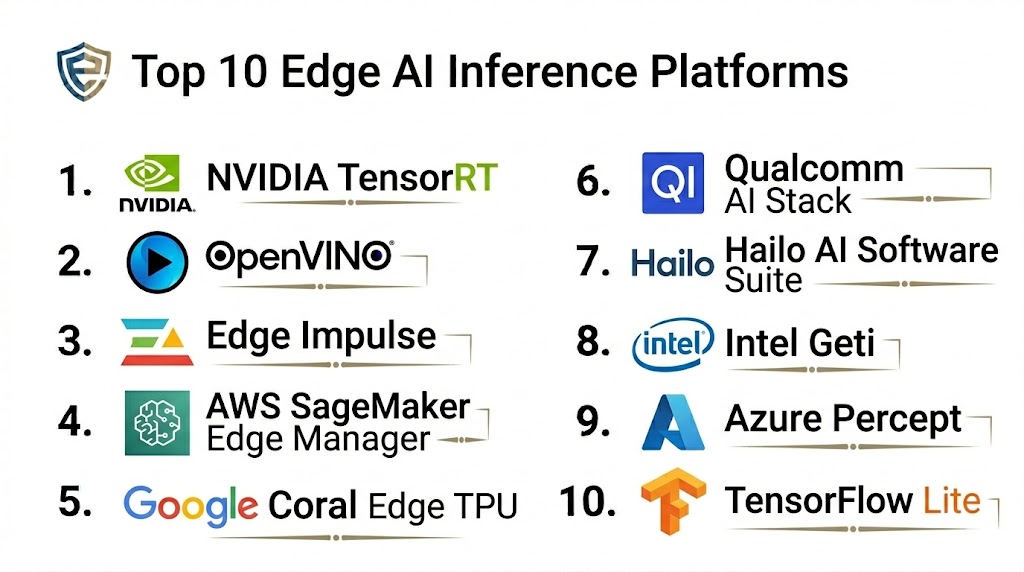

Top 10 Edge AI Inference Platforms

1 — NVIDIA Jetson Platform

Short description: NVIDIA Jetson provides GPU-accelerated edge AI computing for robotics, industrial automation, healthcare, smart cities, and autonomous systems.

Key Features

- GPU-accelerated AI inference

- CUDA and TensorRT optimization

- Computer vision and robotics support

- AI model deployment and orchestration

- Real-time inference processing

- Integration with NVIDIA AI ecosystem

Pros

- Industry-leading AI acceleration performance

- Strong AI developer ecosystem

- Excellent support for computer vision workloads

Cons

- Premium hardware pricing

- Power requirements for advanced models

- Requires GPU optimization expertise

Platforms / Deployment

- Linux-based edge devices

- Edge / Hybrid deployments

Security & Compliance

- Secure boot, encryption, RBAC

- Device management and policy controls

Integrations & Ecosystem

Integrates with NVIDIA AI and cloud ecosystems.

- TensorRT

- DeepStream

- Kubernetes and Docker support

Support & Community

Extensive AI community, enterprise support, and developer documentation.

2 — Intel OpenVINO

Short description: Intel OpenVINO optimizes AI inference across Intel CPUs, GPUs, VPUs, and edge hardware for industrial and enterprise deployments.

Key Features

- AI model optimization for Intel hardware

- Multi-device inference acceleration

- Computer vision and analytics support

- Edge deployment toolkits

- AI performance benchmarking

- Integration with Intel AI ecosystem

Pros

- Strong optimization for Intel hardware

- Flexible deployment support

- Good enterprise AI integration

Cons

- Best performance on Intel ecosystems

- Complex optimization workflows for beginners

- Limited non-Intel acceleration benefits

Platforms / Deployment

- Windows / Linux

- Edge / Hybrid / Cloud

Security & Compliance

- Encryption and secure inference workflows

- Access controls and policy support

Integrations & Ecosystem

Integrates with Intel AI, Kubernetes, and observability tools.

- OpenVINO Toolkit

- Intel DevCloud

- Docker and Kubernetes

Support & Community

Strong enterprise support and active developer ecosystem.

3 — Google Coral Edge TPU

Short description: Google Coral provides low-power TPU acceleration for AI inference workloads including vision, audio, and sensor analytics at the edge.

Key Features

- Edge TPU acceleration

- TensorFlow Lite optimization

- Low-latency inference processing

- Compact edge AI hardware support

- Real-time computer vision capabilities

- Low-power AI workloads

Pros

- Efficient low-power inference

- Compact deployment footprint

- Good TensorFlow integration

Cons

- Limited support outside TensorFlow ecosystem

- Lower performance for very large models

- Hardware availability constraints

Platforms / Deployment

- Linux / Embedded systems

- Edge deployments

Security & Compliance

- Secure device communication

- Policy and access controls

Integrations & Ecosystem

Integrates with TensorFlow Lite and edge AI workflows.

- TensorFlow Lite

- Edge AI pipelines

- IoT integrations

Support & Community

Developer community and Google AI documentation resources.

4 — AWS Panorama

Short description: AWS Panorama enables computer vision inference on edge appliances and cameras with centralized cloud management and analytics.

Key Features

- Computer vision edge inference

- Cloud-managed AI deployment

- Video analytics and processing

- AI model deployment automation

- Integration with AWS AI services

- Edge telemetry monitoring

Pros

- Strong AWS integration

- Simplified edge computer vision workflows

- Cloud-managed orchestration

Cons

- Best suited for AWS ecosystems

- Computer vision-focused platform

- Enterprise pricing structure

Platforms / Deployment

- Edge appliances / Cameras

- Cloud / Hybrid

Security & Compliance

- IAM integration, encryption

- AWS security controls and logging

Integrations & Ecosystem

Works with AWS cloud and AI ecosystems.

- SageMaker

- AWS IoT

- CloudWatch

Support & Community

AWS enterprise support and cloud developer ecosystem.

5 — Azure IoT Edge AI

Short description: Azure IoT Edge AI enables deployment of AI models, analytics, and cloud workloads to distributed edge devices with centralized orchestration.

Key Features

- AI workload orchestration

- Edge container deployment

- Real-time telemetry and analytics

- AI model lifecycle management

- IoT and cloud integration

- Kubernetes and Docker support

Pros

- Strong hybrid cloud-edge integration

- Enterprise-grade orchestration

- Broad Azure ecosystem compatibility

Cons

- Best suited for Azure customers

- Complexity for smaller teams

- Requires cloud infrastructure expertise

Platforms / Deployment

- Linux / Windows edge systems

- Cloud / Hybrid

Security & Compliance

- RBAC, encryption, secure provisioning

- Microsoft compliance frameworks

Integrations & Ecosystem

Integrates deeply with Azure cloud services.

- Azure ML

- Azure IoT Hub

- Defender for IoT

Support & Community

Enterprise support and extensive documentation ecosystem.

6 — Edge Impulse

Short description: Edge Impulse is a developer-focused edge AI platform for building, training, optimizing, and deploying ML models to embedded and IoT devices.

Key Features

- End-to-end TinyML workflows

- AI model optimization

- Edge device deployment pipelines

- Real-time data collection and telemetry

- Embedded AI development tools

- Low-power AI optimization

Pros

- Strong TinyML developer workflows

- Simplified AI deployment process

- Good embedded AI support

Cons

- Focused mainly on embedded AI workloads

- Smaller enterprise ecosystem

- Limited large-scale orchestration features

Platforms / Deployment

- Embedded devices / Linux

- Edge deployments

Security & Compliance

- Secure deployment workflows

- Access management controls

Integrations & Ecosystem

Works with embedded AI and IoT ecosystems.

- TensorFlow Lite

- Arduino

- Embedded Linux platforms

Support & Community

Active developer community and training resources.

7 — Red Hat OpenShift AI Edge

Short description: Red Hat OpenShift AI Edge provides Kubernetes-based AI inference orchestration and lifecycle management for distributed edge environments.

Key Features

- Kubernetes-native AI deployment

- AI workload orchestration

- Containerized inference pipelines

- Hybrid cloud-edge management

- GPU and accelerator support

- Observability and telemetry integration

Pros

- Strong Kubernetes ecosystem integration

- Scalable enterprise AI orchestration

- Hybrid deployment flexibility

Cons

- Requires Kubernetes expertise

- Enterprise-focused complexity

- Premium infrastructure requirements

Platforms / Deployment

- Linux / Kubernetes clusters

- Hybrid / Edge / Cloud

Security & Compliance

- RBAC, encryption, audit logging

- Secure container orchestration controls

Integrations & Ecosystem

Integrates with Red Hat and open-source AI ecosystems.

- OpenShift

- Kubernetes

- Prometheus and Grafana

Support & Community

Enterprise support and large open-source ecosystem.

8 — Hailo AI Platform

Short description: Hailo provides AI acceleration processors and software for edge inference with a focus on high-performance, low-power AI workloads.

Key Features

- AI accelerator hardware

- Real-time inference optimization

- Computer vision acceleration

- Edge AI deployment tools

- Low-power inference workflows

- AI pipeline optimization

Pros

- Efficient low-power inference performance

- Good computer vision acceleration

- Scalable edge AI hardware options

Cons

- Specialized hardware ecosystem

- Smaller software ecosystem than hyperscalers

- Advanced optimization expertise required

Platforms / Deployment

- Embedded Linux / Edge systems

- Edge deployments

Security & Compliance

- Secure inference workflows

- Hardware-level protections

Integrations & Ecosystem

Works with AI and edge inference toolchains.

- TensorFlow

- ONNX

- Edge AI frameworks

Support & Community

Developer ecosystem and enterprise support programs.

9 — Qualcomm AI Stack

Short description: Qualcomm AI Stack enables optimized AI inference across Qualcomm-powered edge and mobile devices with support for vision, speech, and AI analytics.

Key Features

- AI acceleration for Qualcomm hardware

- On-device inference optimization

- Low-power AI processing

- Mobile and edge AI support

- AI deployment frameworks

- Real-time telemetry visibility

Pros

- Excellent mobile AI efficiency

- Low-power edge inference optimization

- Strong embedded AI support

Cons

- Best suited for Qualcomm ecosystems

- Limited enterprise orchestration tools

- Complex optimization workflows

Platforms / Deployment

- Mobile / Embedded Linux / Android

- Edge deployments

Security & Compliance

- Secure inference execution

- Hardware-level protections

Integrations & Ecosystem

Integrates with Qualcomm AI and mobile ecosystems.

- TensorFlow Lite

- ONNX

- Mobile AI frameworks

Support & Community

Developer documentation and AI partner ecosystem.

10 — IBM Edge Application Manager

Short description: IBM Edge Application Manager provides autonomous edge AI deployment, orchestration, and lifecycle management for enterprise AI operations.

Key Features

- Autonomous edge AI deployment

- AI workload orchestration

- Edge policy management

- AI telemetry and monitoring

- Secure AI lifecycle management

- Multi-edge environment support

Pros

- Strong enterprise AI governance

- Autonomous edge orchestration

- Scalable distributed AI operations

Cons

- Enterprise complexity

- Requires infrastructure planning

- Premium licensing costs

Platforms / Deployment

- Linux / Kubernetes edge systems

- Hybrid / Cloud / Edge

Security & Compliance

- Encryption, RBAC, audit logging

- Policy enforcement and compliance controls

Integrations & Ecosystem

Integrates with IBM hybrid cloud and AI ecosystems.

- Red Hat OpenShift

- IBM Cloud

- Kubernetes and observability tools

Support & Community

Enterprise support and hybrid cloud ecosystem resources.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| NVIDIA Jetson | Computer vision AI | Linux edge systems | Edge / Hybrid | GPU AI acceleration | N/A |
| Intel OpenVINO | Intel-based AI inference | Windows / Linux | Edge / Hybrid / Cloud | Intel optimization | N/A |
| Google Coral | Low-power AI | Embedded Linux | Edge | TPU acceleration | N/A |
| AWS Panorama | Edge computer vision | Edge appliances / Cameras | Cloud / Hybrid | AWS vision workflows | N/A |
| Azure IoT Edge AI | Hybrid AI orchestration | Windows / Linux | Cloud / Hybrid | Azure integration | N/A |
| Edge Impulse | TinyML and embedded AI | Embedded systems | Edge | TinyML workflows | N/A |
| OpenShift AI Edge | Kubernetes AI orchestration | Linux / Kubernetes | Hybrid / Edge / Cloud | Containerized AI | N/A |
| Hailo AI Platform | Low-power vision AI | Embedded Linux | Edge | AI accelerator hardware | N/A |
| Qualcomm AI Stack | Mobile edge AI | Android / Embedded | Edge | Mobile AI optimization | N/A |
| IBM Edge Application Manager | Enterprise edge AI | Linux / Kubernetes | Hybrid / Cloud / Edge | Autonomous orchestration | N/A |

Evaluation & Scoring

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| NVIDIA Jetson | 10 | 8 | 9 | 8 | 10 | 9 | 7 | 8.80 |
| Intel OpenVINO | 9 | 8 | 8 | 8 | 9 | 8 | 8 | 8.35 |
| Google Coral | 8 | 8 | 7 | 7 | 8 | 7 | 9 | 7.80 |
| AWS Panorama | 8 | 7 | 9 | 8 | 8 | 8 | 7 | 7.85 |
| Azure IoT Edge AI | 9 | 7 | 9 | 9 | 8 | 8 | 7 | 8.20 |
| Edge Impulse | 8 | 9 | 7 | 7 | 8 | 8 | 9 | 8.05 |
| OpenShift AI Edge | 9 | 7 | 9 | 9 | 8 | 8 | 6 | 8.05 |
| Hailo AI Platform | 8 | 7 | 7 | 7 | 9 | 7 | 8 | 7.60 |
| Qualcomm AI Stack | 8 | 7 | 7 | 7 | 8 | 7 | 8 | 7.50 |
| IBM Edge Application Manager | 9 | 7 | 8 | 9 | 8 | 8 | 6 | 7.90 |
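The weighted total in the table above is a weighted sum of the seven criterion scores, using the percentages in the column headers. The following is a minimal sketch of that arithmetic, not the tooling used to build the table; the names `WEIGHTS` and `weighted_total` are illustrative assumptions.

```python
# Weights taken from the column headers of the scoring table (percent).
WEIGHTS = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}


def weighted_total(scores: dict[str, int]) -> float:
    """Return the 0-10 weighted total for one row of criterion scores."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the weighted criteria")
    # Sum in integer percent-points first to avoid floating-point drift.
    points = sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
    return points / 100


# Example row: the Intel OpenVINO scores from the table above.
openvino = {
    "core": 9, "ease": 8, "integrations": 8, "security": 8,
    "performance": 9, "support": 8, "value": 8,
}
print(weighted_total(openvino))  # 8.35
```

Teams with different priorities can change the values in `WEIGHTS` (keeping the sum at 100) and recompute the ranking for their own shortlist.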

Which Edge AI Inference Platform Is Right for You?

Solo / Freelancer

Edge Impulse and Google Coral are excellent starting points for embedded AI experimentation, TinyML workflows, and lightweight edge AI projects with limited infrastructure requirements.

SMB

Intel OpenVINO, Edge Impulse, and Hailo AI Platform provide strong AI optimization and edge deployment capabilities without requiring highly complex enterprise orchestration.

Mid-Market

Azure IoT Edge AI and AWS Panorama balance edge AI orchestration, telemetry monitoring, and cloud integration for organizations deploying distributed AI workloads.

Enterprise

NVIDIA Jetson, OpenShift AI Edge, IBM Edge Application Manager, and Azure IoT Edge AI deliver enterprise-scale orchestration, AI lifecycle management, Kubernetes integration, and high-performance AI acceleration.

Budget vs Premium

Budget-friendly options include Edge Impulse and Google Coral for lightweight and embedded AI inference. Premium platforms such as NVIDIA Jetson, IBM Edge Application Manager, and OpenShift AI Edge target high-performance acceleration, enterprise orchestration, and large-scale AI deployment.

Feature Depth vs Ease of Use

Edge Impulse and Google Coral prioritize simplicity and developer experience, while OpenShift AI Edge and IBM Edge Application Manager provide deeper orchestration and enterprise AI governance capabilities.

Integrations & Scalability

Organizations deploying AI across distributed infrastructure should prioritize platforms with strong Kubernetes, observability, cloud, and IoT integrations. NVIDIA Jetson, Azure IoT Edge AI, IBM Edge Application Manager, and OpenShift AI Edge perform strongly in scalable enterprise environments.

Security & Compliance Needs

Enterprises handling sensitive AI workloads or regulated data should prioritize secure inference execution, encrypted AI pipelines, RBAC, telemetry monitoring, and policy enforcement capabilities.

Frequently Asked Questions

1. What is edge AI inference?

Edge AI inference refers to running trained AI models directly on local edge devices instead of relying entirely on centralized cloud infrastructure.

2. Why is edge AI important?

Edge AI reduces latency, lowers bandwidth usage, improves reliability, and allows real-time AI processing closer to where data is generated.

3. What workloads commonly use edge AI inference?

Computer vision, predictive maintenance, autonomous systems, surveillance analytics, smart retail, industrial automation, and healthcare diagnostics commonly use edge AI.

4. What hardware accelerators are used?

Edge AI platforms commonly use GPUs, TPUs, NPUs, VPUs, and other dedicated AI accelerator chips to improve inference performance and efficiency.

5. How does edge AI improve privacy?

Because data is processed locally on the device, sensitive information does not always need to be transmitted to centralized cloud environments.

6. Do edge AI platforms support containers?

Yes. Many modern platforms support Docker, Kubernetes, and containerized AI deployment workflows.

7. Can edge AI platforms integrate with cloud services?

Most enterprise edge AI platforms integrate with AWS, Azure, Google Cloud, or hybrid cloud infrastructure for centralized orchestration and monitoring.

8. What is AI model optimization?

Model optimization reduces the size, latency, and power requirements of AI models so they can run efficiently on edge hardware.
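One widely used optimization technique (an example chosen here, not one named in this article) is post-training quantization, which stores weights as 8-bit integers instead of 32-bit floats. The sketch below shows the idea in plain Python under simplifying assumptions: symmetric per-tensor scaling and a hypothetical list of weights. Production toolchains ship their own quantizers, which this does not attempt to reproduce.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric int8 quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the int8 codes."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.05, 0.64]  # hypothetical float32 layer weights
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# Each weight now needs 1 byte instead of 4, at the cost of a small error
# bounded by half a quantization step (scale / 2).
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, scale, max_error)
```

The trade-off is a 4x reduction in weight storage against a bounded rounding error per weight; whether accuracy stays acceptable has to be checked on the actual model and data.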

9. Which industries use edge AI inference the most?

Manufacturing, healthcare, retail, telecom, logistics, transportation, smart cities, robotics, and industrial automation are major adopters.

10. What deployment challenges should organizations expect?

Organizations should prepare for hardware compatibility issues, AI model optimization complexity, telemetry scaling, orchestration planning, and secure lifecycle management.

Conclusion

Edge AI inference platforms are changing how enterprises deploy real-time AI workloads across distributed infrastructure, enabling faster decision-making, lower latency, and better operational efficiency. Smaller teams and embedded AI developers can benefit from platforms like Edge Impulse or Google Coral for lightweight inference and TinyML workflows, while enterprises often need the advanced orchestration, GPU acceleration, and hybrid cloud-edge management offered by platforms such as NVIDIA Jetson, OpenShift AI Edge, Azure IoT Edge AI, or IBM Edge Application Manager. The right choice depends on workload complexity, hardware requirements, scalability goals, security controls, and cloud integration strategy. Shortlist a few platforms, validate inference performance in representative environments, test orchestration and telemetry workflows, and scale deployments gradually to build secure, reliable, and efficient edge AI operations.
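Validating inference performance usually starts with measuring latency on the target device. The following is a minimal sketch using only the Python standard library; `measure_latency_ms` and `fake_inference` are hypothetical names, and the stand-in workload should be replaced with a real model call from whichever platform SDK is being evaluated.

```python
import statistics
import time
from typing import Callable


def measure_latency_ms(infer: Callable[[], object], warmup: int = 10,
                       runs: int = 100) -> dict[str, float]:
    """Time repeated calls to `infer` and summarize latency in milliseconds."""
    for _ in range(warmup):  # let caches and clocks settle before timing
        infer()
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        infer()
        samples.append((time.perf_counter() - start) * 1000.0)
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    cuts = statistics.quantiles(samples, n=100)
    return {
        "p50_ms": statistics.median(samples),
        "p95_ms": cuts[94],
        "max_ms": max(samples),
    }


def fake_inference() -> int:
    """Hypothetical stand-in for a real model call on the target device."""
    return sum(i * i for i in range(10_000))


stats = measure_latency_ms(fake_inference)
print(stats)
```

Reporting the 95th percentile alongside the median matters for real-time workloads, because occasional slow inferences are often what breaks a latency budget.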