Introduction

AI Evaluation & Benchmarking Frameworks are platforms and tools that allow organizations to measure the performance, reliability, and fairness of machine learning and AI models. These frameworks help compare models across tasks, datasets, and metrics to ensure that AI systems meet quality, safety, and performance standards before deployment. They are essential for responsible AI practices, model selection, and ongoing monitoring of deployed systems.

Real-world use cases include evaluating natural language processing models for accuracy and bias, benchmarking computer vision systems for detection and recognition performance, comparing recommendation algorithms across datasets, testing reinforcement learning policies in simulation environments, and monitoring deployed models for drift and fairness. These frameworks help organizations make informed decisions, optimize models, and maintain regulatory and ethical compliance.

Evaluation criteria for AI benchmarking frameworks include supported model types and tasks, ease of integration with ML pipelines, availability of prebuilt benchmarks and datasets, metric diversity, visualization and reporting, reproducibility and traceability, deployment support, open-source flexibility, performance, and community support.

Best for: ML engineers, AI researchers, data scientists, and enterprise teams responsible for evaluating model performance, monitoring fairness, and ensuring production-grade reliability.

Not ideal for: Hobbyist developers and teams that are not deploying models in critical systems and do not need structured evaluation or standardized benchmarking.

Key Trends in AI Evaluation & Benchmarking Frameworks

- Standardized metrics for fairness, bias, robustness, and explainability

- Integration with ML pipelines and continuous evaluation workflows

- Support for multi-modal AI evaluation including vision, language, and audio

- Cloud-native evaluation for scalable model testing

- Simulation environments for reinforcement learning benchmarking

- Reproducible benchmarking with versioned datasets and configurations

- Automated model drift detection and performance monitoring

- Open-source datasets and reproducible benchmark suites

- AI-specific stress testing including adversarial robustness and safety

- Reporting dashboards for model performance, fairness, and risk metrics
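
The reproducibility trend above (versioned datasets and configurations) can be illustrated with a small sketch. The idea is to derive a single fingerprint from the evaluation config and the dataset contents, so two runs can be compared only when their fingerprints match. The function name `run_fingerprint` and the config keys are hypothetical and not taken from any tool in this list.

```python
import hashlib
import json


def run_fingerprint(config, dataset_bytes):
    """Return a SHA-256 hex digest covering both the config and the dataset."""
    # sort_keys makes the JSON text independent of dict insertion order.
    config_json = json.dumps(config, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256()
    digest.update(config_json.encode("utf-8"))
    digest.update(b"\x00")  # separator between config and data
    digest.update(dataset_bytes)
    return digest.hexdigest()


# Hypothetical config and a tiny in-memory "dataset" for illustration.
config_a = {"metric": "accuracy", "seed": 42, "split": "test"}
config_b = {"split": "test", "seed": 42, "metric": "accuracy"}  # same keys, new order
data = b"id,label\n1,0\n2,1\n"

fp_a = run_fingerprint(config_a, data)
fp_b = run_fingerprint(config_b, data)
fp_changed = run_fingerprint(config_a, data + b"3,1\n")
print(fp_a == fp_b, fp_a == fp_changed)
```

Storing the fingerprint next to each reported score makes it easy to spot results that were produced with a different dataset version or configuration.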

How We Selected These Tools

- Reviewed adoption by AI research and enterprise teams

- Assessed support for multiple model types and tasks

- Evaluated availability of standard benchmarks and datasets

- Checked integration with ML pipelines and CI/CD workflows

- Considered metric diversity including fairness, robustness, and efficiency

- Examined reporting, visualization, and reproducibility features

- Reviewed simulation support for reinforcement learning

- Evaluated community adoption, documentation, and support

- Considered open-source flexibility and licensing

- Assessed scalability for cloud and multi-model evaluation

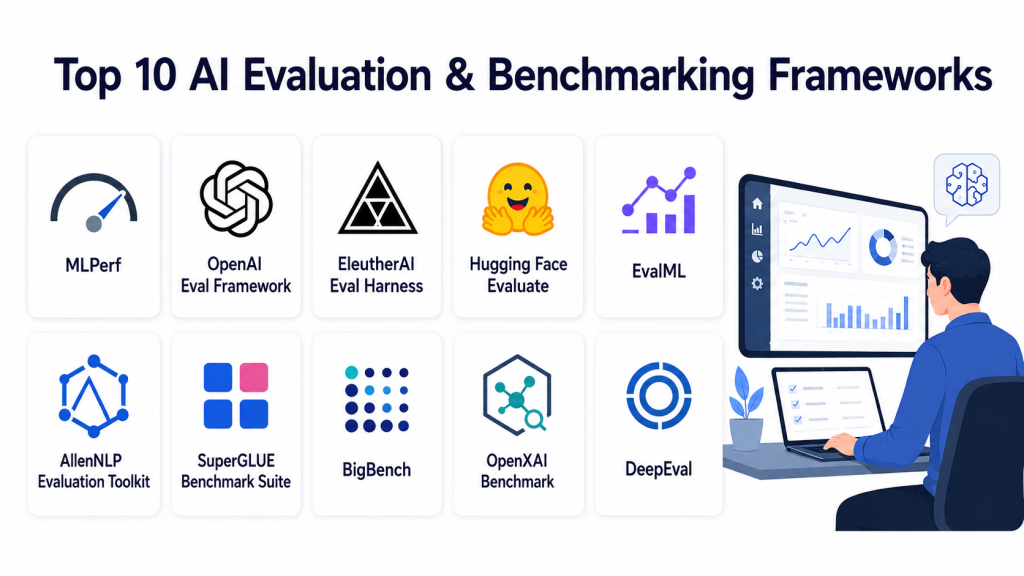

Top 10 AI Evaluation & Benchmarking Frameworks

#1 — MLPerf

Short description: MLPerf provides standardized benchmarking for machine learning hardware and models, focusing on performance metrics for training and inference across tasks and frameworks.
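
To make the idea of an inference benchmark concrete, here is a minimal sketch of a latency and throughput measurement in plain Python. It does not use MLPerf's own tooling or rules; `measure_latency`, the warm-up count, and the `fake_model` stand-in are hypothetical names chosen for illustration.

```python
import math
import statistics
import time


def measure_latency(predict, inputs, warmup=5):
    """Time predict() on each input and return latency stats in milliseconds."""
    # Warm-up calls are not timed (caches, lazy initialization).
    for x in inputs[:warmup]:
        predict(x)

    latencies_ms = []
    start = time.perf_counter()
    for x in inputs:
        t0 = time.perf_counter()
        predict(x)
        latencies_ms.append((time.perf_counter() - t0) * 1000.0)
    total_s = time.perf_counter() - start

    ordered = sorted(latencies_ms)
    # Nearest-rank 90th percentile.
    p90_index = max(0, math.ceil(0.9 * len(ordered)) - 1)
    return {
        "count": len(ordered),
        "p50_ms": statistics.median(ordered),
        "p90_ms": ordered[p90_index],
        "throughput_per_s": len(ordered) / total_s if total_s > 0 else float("inf"),
    }


def fake_model(x):
    """Stand-in for a real model call; does a little arithmetic."""
    return sum(i * i for i in range(1000)) + x


stats = measure_latency(fake_model, list(range(200)))
print(stats)
```

Absolute numbers from a sketch like this depend on the machine and its load, which is why standardized suites fix the workload, dataset, and measurement procedure.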

Key Features

- Standardized evaluation benchmarks

- Supports training and inference measurement

- Cross-framework compatibility

- Public leaderboard comparisons

- Dataset standardization

- Multi-task evaluation (vision, language, recommendation)

Pros

- Industry-standard benchmarking

- Comprehensive dataset and metric coverage

Cons

- Focused primarily on performance rather than fairness

- Requires familiarity with benchmarking procedures

Platforms / Deployment

- Linux, Cloud

- Local / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Compatible with TensorFlow, PyTorch, ONNX models

- Cloud deployment for large-scale benchmarking

- Leaderboard tracking

Support & Community

- Community-driven documentation

- Industry adoption

- Forums for discussion

#2 — OpenAI Evals

Short description: OpenAI Evals is a framework for evaluating AI model capabilities across NLP and reasoning tasks, supporting automated scoring and human-in-the-loop assessment.

Key Features

- Evaluation of language models

- Support for custom benchmarks

- Automated and human evaluation

- Dataset and metric flexibility

- Reporting and visualization

Pros

- Integrates automated and manual evaluation

- Supports customized task definitions

Cons

- Focused on NLP and reasoning

- Less support for multi-modal models

Platforms / Deployment

- Web, Cloud

- Managed

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- API for model evaluation

- Custom task import

- Reporting dashboards

Support & Community

- Documentation

- Community support

- Tutorials

#3 — EvalAI

Short description: EvalAI provides a platform for hosting AI challenges and evaluating models across datasets and tasks, supporting reproducibility and leaderboard tracking.

Key Features

- Challenge creation and hosting

- Standardized scoring metrics

- Leaderboard and ranking

- Support for multiple AI tasks

- Submission validation and reproducibility

Pros

- Facilitates benchmarking competitions

- Supports multiple task types

Cons

- Designed for challenge-based evaluation

- Less suited for production monitoring

Platforms / Deployment

- Web, Cloud

- Managed

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Submission APIs

- Dataset hosting

- Leaderboard visualization

Support & Community

- Documentation and tutorials

- Community forums

- Challenge examples

#4 — CheckList

Short description: CheckList is a behavioral testing framework for NLP models, enabling evaluation across capabilities such as robustness, consistency, and fairness.
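
The behavioral-testing idea can be illustrated without the CheckList library itself. The sketch below is a plain-Python invariance test: it fills a template with different names and checks that the predicted label does not change. `toy_sentiment`, `invariance_test`, and the template are hypothetical, and CheckList's real API differs.

```python
def toy_sentiment(text):
    """Stand-in model: a keyword-based classifier, for illustration only."""
    lowered = text.lower()
    if "great" in lowered or "good" in lowered:
        return "positive"
    if "terrible" in lowered or "bad" in lowered:
        return "negative"
    return "neutral"


def invariance_test(predict, template, slot_values):
    """Fill the template with each value; report cases whose label differs from the first."""
    if not slot_values:
        raise ValueError("slot_values must not be empty")
    cases = [template.format(name=value) for value in slot_values]
    predictions = [predict(case) for case in cases]
    baseline = predictions[0]
    failures = [
        (case, pred) for case, pred in zip(cases, predictions) if pred != baseline
    ]
    return {
        "n_cases": len(cases),
        "failures": failures,
        "failure_rate": len(failures) / len(cases),
    }


result = invariance_test(
    toy_sentiment,
    "{name} said the service was great.",
    ["Alice", "Omar", "Mei", "Priya"],
)
print(result["failure_rate"])
```

A non-zero failure rate on a test like this suggests the model is sensitive to a detail (here, the name) that should not affect the label.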

Key Features

- Behavioral testing for NLP

- Test case templates and scenario generation

- Metric evaluation and visualization

- Integration with ML pipelines

- Supports robustness and bias testing

Pros

- Effective for detailed NLP analysis

- Flexible and extensible test framework

Cons

- NLP-focused

- Requires test case design expertise

Platforms / Deployment

- Python, Linux

- Local / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Integration with PyTorch and TensorFlow

- Custom test scenarios

- Reporting dashboards

Support & Community

- Documentation and tutorials

- GitHub community

- Examples

#5 — DeepChecks

Short description: DeepChecks provides pre-deployment and post-deployment evaluation for machine learning models, focusing on data integrity, model performance, and drift detection.
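
As a rough illustration of what a drift check computes, the sketch below implements the Population Stability Index (PSI) for one numeric feature in plain Python. It is not DeepChecks code; the function name, the bin count, and the epsilon used for empty bins are assumptions made for this example.

```python
import math


def population_stability_index(reference, current, n_bins=10):
    """PSI between two numeric samples, using equal-width bins over the reference range."""
    if not reference or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(reference), max(reference)
    if hi == lo:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / n_bins

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            # Clamp so values outside the reference range land in the edge bins.
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)
            counts[idx] += 1
        eps = 1e-6  # avoids log(0) when a bin is empty
        return [max(c / len(values), eps) for c in counts]

    ref_frac = bin_fractions(reference)
    cur_frac = bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_frac, cur_frac))


reference = [i / 100 for i in range(100)]       # roughly uniform on [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]   # concentrated in the upper half

psi_same = population_stability_index(reference, reference)
psi_shifted = population_stability_index(reference, shifted)
print(psi_same, psi_shifted)
```

A commonly cited rule of thumb treats PSI above roughly 0.25 as a significant shift, but the right threshold depends on the feature and the application, so treat any cutoff as a starting point.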

Key Features

- Pre-deployment and post-deployment checks

- Data integrity testing

- Performance and drift monitoring

- Reporting and visualization

- Multi-framework support

Pros

- Combines evaluation and monitoring

- Detects data and model drift

Cons

- Requires Python expertise

- Limited support for multi-modal evaluation

Platforms / Deployment

- Python, Linux

- Local / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- TensorFlow, PyTorch support

- CI/CD pipelines

- Reporting integrations

Support & Community

- Docs and tutorials

- GitHub community

- Use-case examples

#6 — Fiddler AI

Short description: Fiddler AI provides explainability and performance benchmarking tools for deployed ML models, emphasizing fairness, interpretability, and drift analysis.

Key Features

- Model explainability

- Fairness evaluation

- Performance monitoring

- Drift detection

- Dashboard visualization

Pros

- Strong focus on responsible AI

- Production monitoring support

Cons

- Enterprise-focused

- May be complex for small teams

Platforms / Deployment

- Cloud

- Managed / Hybrid

Security & Compliance

- TLS encryption and authentication

- Certifications: Not publicly stated

Integrations & Ecosystem

- API access for model metrics

- Dashboard integrations

- Reporting pipelines

Support & Community

- Documentation

- Customer support

- Community examples

#7 — Weights & Biases Evaluate

Short description: W&B Evaluate enables performance tracking, comparison, and benchmarking across ML experiments, providing visual insights and metrics dashboards.

Key Features

- Model comparison and evaluation

- Experiment tracking

- Visualization dashboards

- Custom metrics

- Integration with ML pipelines

Pros

- Strong visualization and experiment tracking

- Easy integration with CI/CD

Cons

- Limited open-source customization

- Primarily Python-focused

Platforms / Deployment

- Web, Cloud

- Managed

Security & Compliance

- TLS, secure access

- Certifications: Not publicly stated

Integrations & Ecosystem

- PyTorch, TensorFlow

- CI/CD pipelines

- Cloud storage

Support & Community

- Documentation

- Community forums

- Tutorials

#8 — EvalML

Short description: EvalML automates model evaluation for classical ML models, providing benchmarking metrics, comparison, and visualization to streamline workflow.

Key Features

- Automated model evaluation

- Benchmark metrics for regression, classification

- Visualization and reports

- Integration with ML pipelines

- Multi-model comparison

Pros

- Simplifies evaluation of multiple models

- Open-source and flexible

Cons

- Focused on tabular ML

- Less suited for deep learning and multi-modal tasks

Platforms / Deployment

- Python, Linux

- Local / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Scikit-learn, XGBoost

- Reporting integration

- Experiment pipelines

Support & Community

- Docs and tutorials

- GitHub community

- Examples

#9 — OpenML

Short description: OpenML is a collaborative platform for benchmarking ML models across datasets and tasks, supporting reproducible experiments and meta-learning research.

Key Features

- Dataset and task repository

- Model benchmarking

- Leaderboards

- Reproducible experiment sharing

- API access

Pros

- Open-source community platform

- Extensive dataset coverage

Cons

- Academic/research focus

- Less production integration

Platforms / Deployment

- Web, Python

- Cloud / Local

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python SDK

- API for benchmarking

- Leaderboards

Support & Community

- Community forums

- Documentation

- Research examples

#10 — IBM AI OpenScale

Short description: IBM AI OpenScale monitors AI models in production for fairness, bias, accuracy, and drift, enabling enterprise-grade evaluation and benchmarking.

Key Features

- Model monitoring for fairness and drift

- Explainability and interpretability metrics

- Automated alerts and dashboards

- Multi-framework support

- Enterprise-grade logging and reporting

Pros

- Comprehensive production monitoring

- Supports responsible AI metrics

Cons

- Enterprise-focused and complex

- Costly for small teams

Platforms / Deployment

- Cloud, Hybrid

- Managed

Security & Compliance

- Enterprise-grade security

- Not publicly stated for certifications

Integrations & Ecosystem

- IBM Cloud services

- CI/CD integration

- Reporting pipelines

Support & Community

- IBM documentation

- Support portal

- Community examples

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| MLPerf | Standardized ML benchmarks | Linux, Cloud | Local / Cloud | Training & inference benchmarking | N/A |
| OpenAI Evals | NLP and reasoning evaluation | Web, Cloud | Managed | Automated & human evaluation | N/A |
| EvalAI | AI challenges and leaderboards | Web, Cloud | Managed | Challenge-based benchmarking | N/A |
| CheckList | Behavioral NLP testing | Python, Linux | Local / Cloud | Robust NLP scenario testing | N/A |
| DeepChecks | Pre/post-deployment checks | Python, Linux | Local / Cloud | Drift & data integrity monitoring | N/A |
| Fiddler AI | Model explainability & fairness | Cloud | Managed / Hybrid | Responsible AI monitoring | N/A |
| W&B Evaluate | Experiment tracking | Web, Cloud | Managed | Visual dashboards & model comparison | N/A |
| EvalML | Classical ML benchmarking | Python, Linux | Local / Cloud | Automated tabular model evaluation | N/A |
| OpenML | Collaborative benchmarking | Web, Python | Cloud / Local | Datasets and leaderboards | N/A |
| IBM AI OpenScale | Production AI monitoring | Cloud, Hybrid | Managed | Fairness & drift monitoring | N/A |

Evaluation & Scoring

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| MLPerf | 9 | 7 | 7 | 7 | 9 | 7 | 8 | 7.85 |
| OpenAI Evals | 8 | 8 | 7 | 7 | 8 | 7 | 7 | 7.50 |
| EvalAI | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.35 |
| CheckList | 8 | 7 | 7 | 7 | 8 | 6 | 7 | 7.25 |
| DeepChecks | 8 | 7 | 7 | 7 | 8 | 6 | 7 | 7.25 |
| Fiddler AI | 8 | 7 | 7 | 8 | 8 | 7 | 7 | 7.45 |
| W&B Evaluate | 8 | 8 | 7 | 7 | 8 | 7 | 7 | 7.50 |
| EvalML | 7 | 7 | 6 | 7 | 7 | 6 | 7 | 6.75 |
| OpenML | 7 | 7 | 6 | 7 | 7 | 6 | 7 | 6.75 |
| IBM AI OpenScale | 9 | 7 | 8 | 8 | 8 | 7 | 7 | 7.85 |
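
For readers who want to recompute or re-weight the scores, the sketch below shows how a weighted total follows from the column weights in the table header. The weights and the MLPerf row are copied from the table; the names `WEIGHTS` and `weighted_total` are hypothetical.

```python
# Column weights as given in the table header (they sum to 1.0).
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores, weights=WEIGHTS):
    """Return the weighted sum of per-criterion scores."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(scores[name] * weight for name, weight in weights.items())


# Per-criterion scores from the MLPerf row.
mlperf = {
    "core": 9,
    "ease": 7,
    "integrations": 7,
    "security": 7,
    "performance": 9,
    "support": 7,
    "value": 8,
}
print(round(weighted_total(mlperf), 2))
```

Changing the values in `WEIGHTS` is a quick way to see how the ranking shifts if, for example, security matters more to your team than ease of use.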

Which AI Evaluation & Benchmarking Framework Is Right for You?

Solo / Researchers

EvalML, OpenAI Evals, and CheckList provide flexible frameworks for experimentation and evaluation on NLP and tabular ML tasks.

SMB

DeepChecks and W&B Evaluate provide integrated evaluation and monitoring pipelines for teams deploying models in production.

Mid-Market

MLPerf, OpenML, and EvalAI offer benchmarking, standardization, and challenge-based evaluation across tasks and datasets.

Enterprise

IBM AI OpenScale and Fiddler AI provide production-grade monitoring for fairness, drift detection, and responsible AI metrics.

Budget vs Premium

Open-source platforms like EvalML, CheckList, and OpenML reduce cost while providing flexible evaluation, whereas enterprise-grade solutions offer managed services at higher cost.

Feature Depth vs Ease of Use

Open-source frameworks provide depth and flexibility; managed platforms provide ease of deployment, dashboards, and integrated reporting.

Integrations & Scalability

Platforms supporting APIs and CI/CD pipelines scale effectively with multiple models and large teams.

Security & Compliance

Enterprise frameworks provide TLS, authentication, and access controls for regulated AI deployments.

Frequently Asked Questions

1. What is AI benchmarking?

AI benchmarking is the practice of evaluating models against shared datasets and metrics to assess performance, robustness, fairness, and reliability, so that results can be compared across models and over time.

2. Do these platforms support multiple frameworks?

Yes, many frameworks support TensorFlow, PyTorch, ONNX, and other ML formats.

3. Can they handle multi-modal models?

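Partly. MLPerf benchmarks span vision, language, and recommendation workloads, but several tools in this list, such as OpenAI Evals, CheckList, and DeepChecks, focus on one modality or offer limited multi-modal support. Confirm coverage for your specific modalities (for example vision, language, or audio) before committing to a tool.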

4. Are they suitable for production monitoring?

Enterprise-grade frameworks like IBM AI OpenScale and Fiddler AI provide ongoing monitoring of deployed models for drift, fairness, and performance.

5. Can I integrate with CI/CD pipelines?

Yes, most platforms provide APIs or deployment scripts to integrate with automated ML workflows.
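
One simple integration pattern is a quality gate: a script that compares evaluation metrics against thresholds and returns a non-zero exit code so the pipeline step fails. The sketch below is tool-agnostic; the metric names, thresholds, and function names are hypothetical.

```python
def check_gates(metrics, gates):
    """Return a list of human-readable failures; an empty list means all gates passed."""
    failures = []
    for name, (kind, threshold) in gates.items():
        if name not in metrics:
            failures.append(f"{name}: metric missing")
            continue
        value = metrics[name]
        if kind == "min" and value < threshold:
            failures.append(f"{name}: {value} is below minimum {threshold}")
        elif kind == "max" and value > threshold:
            failures.append(f"{name}: {value} is above maximum {threshold}")
    return failures


def main(metrics, gates):
    """Print any gate failures and return a process-style exit code."""
    failures = check_gates(metrics, gates)
    for failure in failures:
        print("GATE FAILED:", failure)
    return 1 if failures else 0


# Hypothetical metrics, e.g. loaded from an evaluation report in a real job.
metrics = {"accuracy": 0.91, "p90_latency_ms": 180.0}
gates = {
    "accuracy": ("min", 0.90),
    "p90_latency_ms": ("max", 150.0),
}

# In a CI job, pass this value to sys.exit() so a non-zero code fails the step.
exit_code = main(metrics, gates)
print(exit_code)
```

Keeping thresholds in version control alongside the model code makes changes to the acceptance criteria reviewable like any other change.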

6. How do they measure fairness?

Frameworks evaluate bias and fairness metrics across sensitive attributes and subpopulations using standardized or custom metrics.
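
As one concrete example of such a metric, the demographic parity difference is the gap between the highest and lowest rate of positive predictions across groups. The sketch below computes it in plain Python on made-up data; the function names and the group labels are hypothetical, and real tools typically offer many more metrics.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Fraction of positive (== 1) predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(predictions, groups):
    """Largest gap in selection rate between any two groups (0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Made-up binary predictions and a sensitive attribute with two groups.
predictions = [1, 1, 0, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(predictions, groups)
gap = demographic_parity_difference(predictions, groups)
print(rates, gap)
```

Demographic parity ignores the true labels, so it is usually read alongside error-rate-based metrics rather than on its own.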

7. Can evaluation be automated?

Yes. Many frameworks support automated evaluation, batch testing, and leaderboard generation.
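
The core of automated batch evaluation is small: run every candidate model over the same examples, score each one, and sort the results. The sketch below shows that loop with toy models; `accuracy`, `build_leaderboard`, and the models themselves are hypothetical stand-ins.

```python
def accuracy(predict, examples):
    """Fraction of (input, label) pairs the model predicts correctly."""
    correct = sum(1 for x, label in examples if predict(x) == label)
    return correct / len(examples)


def build_leaderboard(models, examples):
    """Score every model on the same examples and sort best-first."""
    rows = [(name, accuracy(fn, examples)) for name, fn in models.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Toy task: predict whether an integer is even.
examples = [(n, n % 2 == 0) for n in range(10)]
models = {
    "always_true": lambda n: True,
    "parity": lambda n: n % 2 == 0,
}

leaderboard = build_leaderboard(models, examples)
for rank, (name, score) in enumerate(leaderboard, start=1):
    print(rank, name, score)
```

Because every model sees identical examples, differences in the leaderboard reflect the models rather than the evaluation setup.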

8. Do they include visual reporting?

Yes. Dashboards, charts, and metrics reporting are provided for comparing model performance and behavior.

9. Are these frameworks open-source or commercial?

Several are open-source like EvalML, CheckList, and OpenML, while enterprise solutions like IBM AI OpenScale and Fiddler AI are commercial.

10. How do I choose the right framework?

Consider your model types, evaluation needs, integration requirements, and team expertise. Start with open-source tools for experimentation, and evaluate enterprise solutions when you need managed production monitoring.

Conclusion

AI Evaluation & Benchmarking Frameworks support reliable, reproducible, and responsible AI deployment by providing structured measurement of performance, fairness, and robustness. Open-source platforms such as EvalML and OpenML provide flexibility for experimentation, while enterprise-grade tools like IBM AI OpenScale and Fiddler AI add monitoring and accountability for production models. Teams should select frameworks based on scale, supported tasks, ease of integration, and reporting needs. The next step is to shortlist two or three frameworks, run evaluations on sample models, and validate their metrics and dashboards before full adoption. Consistent benchmarking makes it far easier to build trustworthy, high-performing AI systems for real-world applications.