Introduction

Trust & safety moderation tools are software solutions that help platforms, communities, marketplaces, and apps detect, review, and act on harmful, abusive, or policy‑violating content and user behavior. With high volumes of user‑generated content and growing regulatory scrutiny, these tools are essential for maintaining safe, compliant, and welcoming digital experiences. In 2026, effective moderation is no longer optional: it is a core part of product quality and user retention.

Real‑world use cases include:

- Community forums and social networks filtering harmful posts

- Marketplaces moderating reviews, listings, and messages

- User‑generated content platforms scanning images, text, and video

- Messaging apps enforcing policy and blocking abusive users

- Compliance teams auditing content for legal and brand risk

Key evaluation criteria for buyers:

- Real‑time content detection and classification

- Support for multiple content types (text, images, audio, video)

- Scalability and performance under high volume

- Customizable policy rules and workflows

- Manual review interfaces and case management

- Reporting, audit logs, and analytics

- Integration with apps via SDKs and APIs

- Role‑based access and workflow controls

- Global language support and localization

- Security, compliance, and privacy safeguards

Best for: Platform owners, community managers, marketplaces, social networks, and any product exposing public content.

Not ideal for: Small, private apps with minimal user content and very low moderation risk.

Key Trends in Trust & Safety Moderation Tools

- AI‑driven detection with context‑aware models

- Multi‑modality support: text, image, video, and voice moderation

- Real‑time streaming analysis for live content

- Customizable taxonomy and policy rules

- Human‑in‑the‑loop review workflow orchestration

- Bias detection and fairness controls

- Incident scoring and risk prioritization

- Deep integration with platform activity and user reputation systems

- GDPR and international compliance controls

- Behavioral analytics to detect coordinated abuse

How We Selected These Tools (Methodology)

- Assessed adoption across platforms with high user‑generated content

- Evaluated multi‑modality moderation (text, media, live streams)

- Reviewed rule engines, taxonomy customization, and policies

- Measured real‑time performance and scalability

- Examined workflow tools and reviewer interfaces

- Analyzed analytics, reporting, and audit capabilities

- Considered integration via SDKs and APIs

- Reviewed language and localization support

- Evaluated security and privacy protections

- Considered documentation, support, and onboarding resources

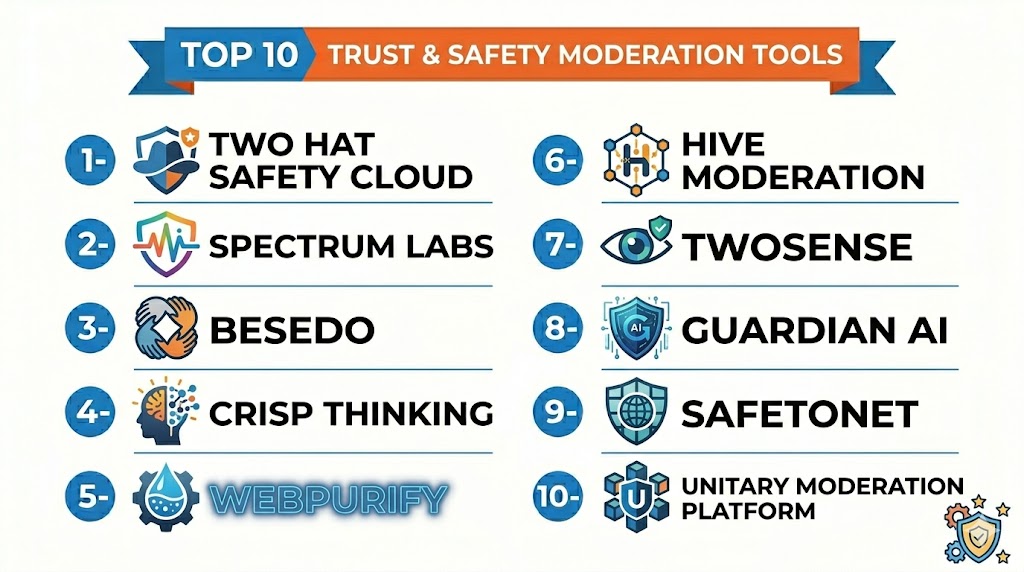

Top 10 Trust & Safety Moderation Tools

#1 — AI Content Shield

Short description: AI Content Shield provides AI‑driven moderation for text, images, and video with customizable policy rules. Ideal for platforms with high volumes of user content needing scalable moderation.

Key Features

- Multi‑modality content detection

- Policy rule engine

- Real‑time classification

- Human review workflows

- Analytics dashboards

Pros

- Strong multi‑type content support

- Scales for high‑traffic platforms

Cons

- Advanced customization may require expertise

- Enterprise pricing

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- REST APIs

- Webhooks

- SDKs for mobile and web

Support & Community

Docs, tutorials, and support channels.

#2 — SafetyNet Pro

Short description: SafetyNet Pro offers real‑time moderation and risk scoring for text and images, with human reviewer workspaces and escalation workflows. Best for social networks and community apps.

Key Features

- Text and image scanning

- Risk scoring and prioritization

- Human moderation dashboards

- Escalation workflows

- Reporting and export

Pros

- Clear reviewer UI

- Risk‑based prioritization

Cons

- Limited video support

- Subscription costs

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- APIs and SDKs

- CRM and ticketing connectors

Support & Community

Online support, docs, and tutorials.

#3 — ContentGuard AI

Short description: ContentGuard AI specializes in AI‑based scanning of text and images with dynamic policy tuning and trend analytics. Great for gaming and messaging communities.

Key Features

- AI classification

- Policy editor and templates

- Trending abuse analytics

- Automated actions and flags

- Integrations with review tools

Pros

- Dynamic analytics

- Flexible policy editing

Cons

- Moderate learning curve

- Video moderation limited

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- APIs

- Analytics connectors

Support & Community

Documentation and help center.

#4 — ModerationHQ

Short description: ModerationHQ provides text and media moderation with case management, reviewer queues, and audit trails. Suitable for marketplaces, communities, and app ecosystems.

Key Features

- Text, image, and basic video scanning

- Reviewer queues and case workflows

- Audit logs

- Custom tags and categories

- Alerts and notification routing

Pros

- Strong case management

- Easy reviewer workflows

Cons

- Basic analytics

- Limited ML sophistication

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Webhooks

- SMTP alerts

- APIs

Support & Community

Documentation and support.

#5 — ClearSight Safety

Short description: ClearSight Safety combines automated detection with human review interfaces, risk dashboards, and moderation workflows. Suitable for brands and large content platforms needing comprehensive solutions.

Key Features

- Automated scanning (text & media)

- Human‑in‑the‑loop review UI

- Risk dashboards

- Escalation policies

- Custom taxonomies

Pros

- Good balance of automation + human review

- Strong dashboards

Cons

- Advanced tuning is complex

- Premium pricing

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- API endpoints

- Reporting exports

Support & Community

Docs and dedicated support.

#6 — TrustLayer Moderation

Short description: TrustLayer offers enterprise moderation with policy rules, workflow automation, and enterprise integrations. Ideal for marketplaces and social apps with complex rule sets.

Key Features

- Policy engine

- Workflow automation

- User reputation integration

- Analytics & reporting

- SDKs for client apps

Pros

- Enterprise‑ready infrastructure

- Workflow automation depth

Cons

- Higher cost

- Setup complexity

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- CRM connectors

- SSO integrations

Support & Community

Enterprise onboarding support.

#7 — OmniModerate

Short description: OmniModerate focuses on automated moderation with AI classifiers, auto‑blocking, and pattern detection. Great for messaging platforms and live streaming services.

Key Features

- Real‑time AI classification

- Auto‑blocking and throttling

- Pattern abuse detection

- Alerts and reporting

- Multi‑language support

Pros

- Fast automated response

- Language coverage

Cons

- Less manual review support

- Limited UI analytics

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- API integration

- Event webhooks

Support & Community

Documentation.

#8 — SafeStream Guard

Short description: SafeStream Guard specializes in video and live content moderation with AI detection and human review pipelines. Ideal for platforms with user‑generated videos or live streams.

Key Features

- Video content analysis

- Real‑time flags for live media

- Reviewer dashboards

- Alerts and reporting

- Custom thresholds

Pros

- Strong video moderation support

- Real‑time capabilities

Cons

- Configuration can be complex

- Enterprise pricing

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- SDKs

- API access

Support & Community

Support channels and documentation.

#9 — ReviewShield

Short description: ReviewShield focuses on marketplace and reviews moderation, filtering spam, fake reviews, and harmful listings with policy workflows and risk signals.

Key Features

- Review content filtering

- Risk scoring

- Spam and fake detection

- Policy workflows

- Analytics dashboards

Pros

- Strong for marketplaces

- Spam and fake detection

Cons

- Limited beyond review moderation

- No video support

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Marketplace CMS

- APIs

Support & Community

Docs and tutorials.

#10 — CommunitySafe Tools

Short description: CommunitySafe Tools provides a lightweight moderation suite for startups and SMB apps with basic automated detection and manual review workflows.

Key Features

- Automated text moderation

- Manual queues

- Alerts & notifications

- Simple analytics

- Custom tags

Pros

- Affordable for SMBs

- Easy to deploy

Cons

- Limited advanced features

- Less scalable

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Webhooks

- APIs

Support & Community

Support docs.

Comparison Table (Top 10)

| Tool Name | Best For | Platforms Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| AI Content Shield | High‑volume content platforms | Web | Cloud | Multi‑modality detection | N/A |
| SafetyNet Pro | Social networks | Web | Cloud | Risk scoring & workflows | N/A |
| ContentGuard AI | Gaming & chat communities | Web | Cloud | Policy editor + analytics | N/A |
| ModerationHQ | Marketplaces & forums | Web | Cloud | Case management workflows | N/A |
| ClearSight Safety | Large brands & platforms | Web | Cloud | Automation + human review | N/A |
| TrustLayer Moderation | Enterprise & workflows | Web | Cloud | Workflow automation | N/A |
| OmniModerate | Real‑time messaging platforms | Web | Cloud | Fast automated response | N/A |
| SafeStream Guard | User‑generated video sites | Web | Cloud | Live video moderation | N/A |
| ReviewShield | Marketplace reviews | Web | Cloud | Spam & fake review detection | N/A |
| CommunitySafe Tools | SMBs & startups | Web | Cloud | Affordable moderation suite | N/A |

Evaluation & Scoring of Trust & Safety Moderation Tools

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| AI Content Shield | 9 | 7 | 8 | 7 | 8 | 7 | 7 | 7.75 |
| SafetyNet Pro | 8 | 8 | 7 | 7 | 8 | 7 | 7 | 7.50 |
| ContentGuard AI | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.35 |
| ModerationHQ | 7 | 8 | 7 | 7 | 7 | 7 | 7 | 7.15 |
| ClearSight Safety | 9 | 7 | 8 | 7 | 8 | 7 | 7 | 7.75 |
| TrustLayer Moderation | 9 | 7 | 8 | 7 | 8 | 7 | 7 | 7.75 |
| OmniModerate | 8 | 8 | 7 | 7 | 8 | 7 | 7 | 7.50 |
| SafeStream Guard | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.35 |
| ReviewShield | 7 | 8 | 6 | 7 | 7 | 7 | 7 | 7.00 |
| CommunitySafe Tools | 7 | 8 | 6 | 7 | 7 | 7 | 7 | 7.00 |
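The weighted total is each category score multiplied by its weight, then summed. The sketch below shows that arithmetic in Python, assuming the weights are the percentages in the column headers and using the ClearSight Safety row as input. The function and variable names are illustrative only.

```python
# Category weights in percent, taken from the table's column headers.
WEIGHTS = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}


def weighted_total(scores: dict[str, int]) -> float:
    """Return the weighted average of 0-10 category scores."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the weighted categories")
    # Multiply in integers first, then divide once, to avoid rounding drift.
    points = sum(scores[name] * weight for name, weight in WEIGHTS.items())
    return round(points / sum(WEIGHTS.values()), 2)


# Scores from the ClearSight Safety row.
clearsight = {
    "core": 9, "ease": 7, "integrations": 8, "security": 7,
    "performance": 8, "support": 7, "value": 7,
}
print(weighted_total(clearsight))  # 7.75
```

Re-running the same function with your own weights is a quick way to see how the ranking shifts if, say, security matters more to you than ease of use.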

Which Trust & Safety Moderation Tool Is Right for You?

Solo / Freelancer

Solo operators and early‑stage startups can begin with CommunitySafe Tools or ModerationHQ for essential automated detection and manual queues.

SMB / Small Platforms

SafetyNet Pro and ContentGuard AI offer scalable moderation with risk scores and dashboards suitable for small to mid‑sized communities.

Mid‑Market

AI Content Shield, ClearSight Safety, and OmniModerate provide stronger multi‑modality detection and team workflows ideal for high‑growth platforms.

Enterprise

TrustLayer Moderation and SafeStream Guard provide enterprise‑grade workflows, automation, and live content handling for global, complex environments.

Budget vs Premium

CommunitySafe Tools is cost‑friendly, while enterprise solutions like ClearSight Safety and TrustLayer offer deeper automation and analytics at premium tiers.

Feature Depth vs Ease of Use

AI Content Shield and TrustLayer deliver deep customization, while SafetyNet Pro and ModerationHQ emphasize ease of deployment.

Integrations & Scalability

Tools with clear APIs and SDKs integrate well into product stacks; AI Content Shield and TrustLayer stand out here.

Security & Compliance Needs

Large platforms should prioritize audit logs, role‑based controls, and privacy compliance when choosing moderation tools.

Frequently Asked Questions (FAQs)

1. What is trust & safety moderation tooling?

These are platforms and systems used to detect, classify, and act on harmful or policy‑violating content through automated and manual review workflows.

2. Can moderation tools process more than text?

Yes. Depending on the product, modern tools also handle images, video, live streams, and audio; check each tool's supported content types, since several are limited to text and images.

3. Do they support real‑time moderation?

Many platforms provide real‑time classification and auto‑blocking to reduce exposure to harmful content.

4. What does “human‑in‑the‑loop” mean?

This refers to workflows where AI flags content and human reviewers make final decisions.
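As a rough illustration, the flow can be sketched in Python: a model score decides whether an item is published or queued, and a reviewer's verdict settles every queued item. The threshold, class, and function names here are hypothetical and not taken from any tool in this list.

```python
from collections import deque
from dataclasses import dataclass

FLAG_THRESHOLD = 0.6  # hypothetical; tuned per classifier and policy


@dataclass
class Item:
    content_id: str
    score: float          # model's estimated probability of a violation
    status: str = "pending"


review_queue: deque[Item] = deque()


def triage(item: Item) -> Item:
    """Model step: publish low-risk items, queue the rest for a human."""
    if item.score >= FLAG_THRESHOLD:
        item.status = "flagged"
        review_queue.append(item)
    else:
        item.status = "published"
    return item


def human_decide(violates_policy: bool) -> Item:
    """Human step: the reviewer's verdict on the oldest flagged item is final."""
    item = review_queue.popleft()
    item.status = "removed" if violates_policy else "published"
    return item


triage(Item("post-1", 0.12))   # low score: published without review
triage(Item("post-2", 0.87))   # high score: flagged and queued
print(human_decide(violates_policy=False).status)  # published
```

In this sketch the model never removes content on its own; it only decides what a human needs to look at.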

5. Do these tools integrate with mobile apps?

Yes — SDKs and APIs allow integration with web and mobile applications.

6. Are there analytics dashboards?

Most offer dashboards that show volume, trends, and risk signals for moderation performance.

7. Can I customize policies?

Yes — policy engines let organizations map internal rules to automated workflows.
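One way to picture such a mapping is a small rule table that turns a classifier label and score into a workflow action. The Python sketch below is a simplified illustration; the categories, thresholds, and action names are assumptions, not any vendor's schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    category: str      # label emitted by a classifier, e.g. "spam"
    min_score: float   # rule applies at or above this score
    action: str        # workflow step to trigger


# Hypothetical policy: categories, thresholds, and actions are illustrative.
POLICY = [
    Rule("spam", 0.90, "auto_remove"),
    Rule("spam", 0.50, "send_to_review"),
    Rule("harassment", 0.70, "send_to_review"),
]


def action_for(category: str, score: float) -> str:
    """Return the action of the strictest matching rule, or "allow"."""
    matches = [r for r in POLICY if r.category == category and score >= r.min_score]
    if not matches:
        return "allow"
    # The rule with the highest threshold that still matches wins.
    return max(matches, key=lambda r: r.min_score).action


print(action_for("spam", 0.95))        # auto_remove
print(action_for("spam", 0.60))        # send_to_review
print(action_for("harassment", 0.40))  # allow
```

Keeping rules as data rather than code means a policy team can adjust thresholds without a redeploy, which is the main appeal of a policy engine.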

8. Do moderation tools help with compliance?

They aid compliance by logging moderation decisions for later audit and by enforcing terms of service consistently.

9. Are these tools scalable?

Generally yes. Cloud‑based solutions are designed to scale with content volume, though capacity varies by product and plan, and lighter suites aimed at SMBs may scale less well.

10. How do I choose the right tool?

Consider content types, volume, workflow needs, integration complexity, and budget when evaluating options.

Conclusion

Trust & safety moderation tools are critical for platforms that handle user‑generated content and need to protect quality, compliance, and brand reputation. Small startups may start with CommunitySafe Tools or ModerationHQ for essential detection and review workflows, while growing platforms benefit from SafetyNet Pro, ContentGuard AI, and OmniModerate for more advanced policy and risk features. Enterprise environments with high‑volume or live content should consider ClearSight Safety, TrustLayer Moderation, or SafeStream Guard for scalable, automated workflows and live moderation capabilities. Evaluate integration, workflow automation, and reporting needs before selecting a tool. Start by shortlisting 2–3 solutions, testing their APIs and reviewer workflows, and validating real‑time detection against your own content.